Why Does AI-Generated Content Fail Without Verification?

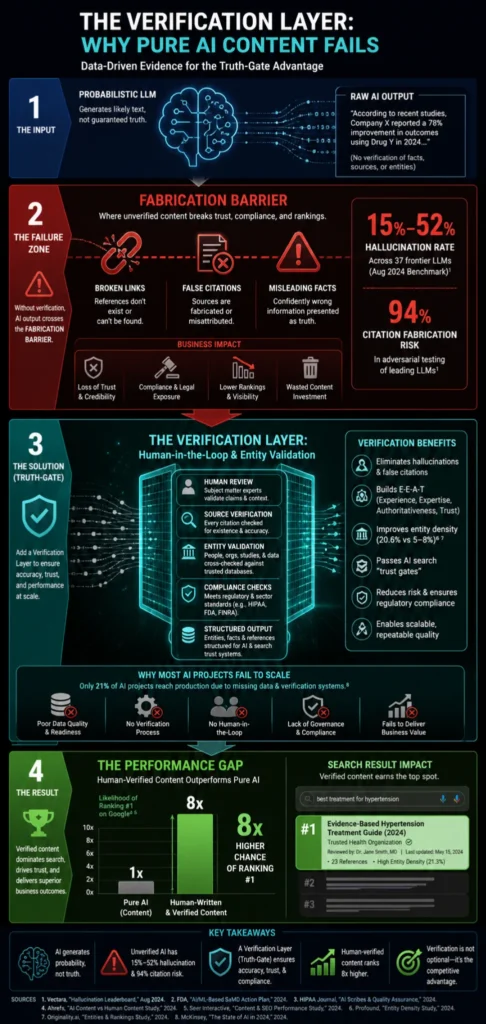

AI-generated content fails not because the models write poorly — it fails because most organizations deploy AI without a system to verify what the AI produces before it reaches the public. “A 2026 benchmark across 37 models reported hallucination rates between 15% and 52%” — SQ Magazine.

For multi-location operators publishing 20-50+ articles per month, those failure rates aren’t an abstract research concern — they’re an operational liability that compounds across every location page, every published claim, and every citation.

Content Ops Lab built its verification infrastructure inside a 12-location regulated healthcare organization, delivering 1,000+ citation-verified articles over 23 months with zero compliance violations.

Related: AI Content Risks for Multi-Location Businesses – What Operators Need to Know

Why Does AI-Generated Content Fail at the Architecture Level?

AI-generated content fails at the architectural level because the models producing it are designed to predict linguistically plausible sequences—not to verify factual accuracy. The output sounds authoritative regardless of whether it’s true.

That’s not a software bug awaiting a patch. It’s the structural reality of how large language models are built, and no amount of prompt engineering eliminates it.

The Probabilistic Design Problem

Every LLM is a next-token predictor. The model selects each word based on statistical patterns in its training data, prioritizing what sounds most likely — not what is most accurate.

- Training rewards linguistic plausibility, not factual truth

- Models have no external database to verify claims against

- Hallucination is a permanent feature of transformer architecture, not a version-specific bug

- When generating from internal knowledge, hallucination rates can exceed 50%

The architecture problem is why “better prompting” produces diminishing returns — the underlying probabilistic mechanism doesn’t change.

Confident Fabrication as a Feature

The most dangerous failure mode isn’t obvious errors — it’s confident fabrication. Research from MIT, cited by SuprMind AI, found that “models were 34% more likely to use phrases like ‘definitely,’ ‘certainly,’ and ‘without doubt’ when generating incorrect information” — SuprMind AI.

Out of 40 frontier models tested in late 2025, 36 were more likely to give a confidently wrong answer than to admit uncertainty.

- High-confidence language signals credibility to human readers

- Reviewers are less likely to fact-check content that sounds certain

- Compliance reviewers can’t detect what they’re not looking for

- Content that sounds authoritative gets published — fabrications included

Confident fabrication is why surface-level editorial review fails as a quality control system.

The Reasoning Tax Paradox

More sophisticated reasoning models don’t solve the hallucination problem — they can make it worse. Research cited in SuprMind AI’s 2026 Hallucination Research Report found that complex “reasoner” models performed worse on factual tasks than their faster counterparts, with all tested reasoning models exceeding 10% hallucination rates on enterprise-length benchmarks — SuprMind AI.

- Chain-of-thought prompting increases hallucination risk by up to 12% in complex domains

- Each reasoning step creates a new opportunity for probabilistic drift

- Models are rewarded for guessing correctly during training, not for acknowledging uncertainty

Operators who assume premium model tiers eliminate verification risk are working from a false premise.

What Makes Hallucinated Citations Dangerous at Production Scale?

Citation hallucination is the most operationally damaging failure mode for multi-location publishers. A fabricated statistic in a single article is an editorial problem. The same fabricated statistic deployed across 50 location pages is a compliance crisis — and one that’s difficult to audit after publication because the errors are designed to look real.

The Phantom Reference Epidemic

Hallucinated citations don’t read like obvious errors — they feature realistic author names, established journal titles, and plausible publication years. An analysis of over 4,000 research papers accepted at NeurIPS 2025 uncovered more than 100 AI-hallucinated citations across at least 53 papers that had already passed peer review — arXiv:2602.15871v1.

- Fabricated citations pass basic visual inspection by human reviewers

- Hallucinated URLs generate 404 errors only when someone clicks them

- In regulated industries, a single fabricated medical citation is a compliance trigger

- Post-publication auditing at scale requires systematic tools, not manual spot-checks

If fabrications clear peer review at premier machine learning conferences, they clear standard editorial review at most content agencies.

Citation Duplication and Recursive Fabrication

Citation hallucination compounds in ways that aren’t immediately visible. “Hallucinated citations are not random inventions but patterned recombinations of real authors, journals, dates, and keywords, with duplication occurring in nearly 30% of cases” — arXiv:2604.16407.

When AI models ingest previously AI-generated content, they absorb fabricated sources and reproduce them — creating a recursive loop that makes bad citations look legitimate through repetition.

- A single fabricated source can propagate through dozens of derivative articles

- Repeated citation of a phantom reference makes it appear credible in AI retrieval systems

- Scale amplifies the problem: 50 articles/month means 50 potential fabrication vectors

The recursive fabrication problem is particularly dangerous for multi-location operators whose content volumes create large surface areas that compound errors.

Failure Rates by Knowledge Domain

Citation hallucination isn’t uniformly distributed across content types. Research from the CheckIfExist study (2026) found that hallucinated URL rates range from 3% to 13%, with 5–18% of URLs across domains either non-resolving or representing link rot — arXiv:2604.03173v1.

- Legal content: 18.7% non-resolving URL rate, 14.5% hallucinated URLs

- Science content: 16.9% non-resolving, 12.1% hallucinated

- Deep research agents showed higher hallucination rates than standard search-augmented LLMs

For operators in healthcare, legal, and financial services, these error rates aren’t acceptable—they’re disqualifying.

What Compliance and Regulatory Exposure Does Unverified AI Content Create?

Unverified AI content creates direct compliance and regulatory exposure in healthcare, legal, and financial sectors — and the regulatory posture is hardening. The question is no longer whether AI-generated content will be scrutinized; it’s whether your organization has a verification system that can withstand that scrutiny.

FDA Enforcement and the cGMP Precedent

In April 2026, the FDA issued its first landmark Warning Letter to a drug manufacturer for improper reliance on AI in manufacturing documentation. “FDA cautioned the company that AI-generated outputs or recommendations must undergo review and approval by an authorized Quality Unit (QU) representative” — DLA Piper.

- Compliance responsibility cannot be delegated to AI tools

- Human-in-the-loop oversight is now an explicit regulatory requirement

- Failure to incorporate review and approval constitutes a direct cGMP violation

- The FDA ruling signals a shift from viewing AI as an assistant to viewing it as a regulated system component

Healthcare operators who haven’t built human verification into their AI content workflows are already behind the compliance curve.

FTC “Operation AI Comply”

The FTC targeted Rytr LLC under “Operation AI Comply” for generating consumer reviews with fabricated material information — the tool’s ability to produce plausible content outpaced its ability to ensure that the content was truthful — DLA Piper.

- Plausibility is not the same as truthfulness — regulators understand the difference

- AI content that fabricates unsupported claims creates deceptive conduct exposure

- Enforcement is targeting the organizations deploying AI, not just the tools

- Due diligence on AI content outputs is becoming a baseline legal expectation

Marketing teams that approve AI-generated content without verification are accepting a deceptive conduct risk they may not have priced in.

State-Level AI Legislation

California’s Transparency in Frontier AI Act (SB 53) mandates risk frameworks and safety reporting for large-scale AI deployments. Penalties for non-compliant AI use reach up to $1 million per violation in multiple jurisdictions.

- State-by-state compliance creates multi-jurisdictional exposure for multi-location operators

- Risk framework requirements apply to organizations deploying AI, not just vendors building it

- Compliance infrastructure built for one state may not satisfy another

Multi-location operators managing content across state lines face a compounding compliance surface area that unverified AI content can’t safely navigate.

If your operation needs to produce 20-50+ articles per month without sacrificing compliance or quality, Content Ops Lab builds the infrastructure to make that possible. Contact us to discuss your content production requirements.

Why Does Unverified AI Content Fail to Get Cited by AI Search Systems?

Unverified AI content fails AI citation selection because the systems performing the citing apply their own verification logic. AI search platforms don’t reward well-written content — they reward content that meets extractability, corroboration, and authority thresholds that generic AI generation can’t satisfy.

The Extractability Filter

AI retrieval systems fragment content into semantic units and evaluate each independently for citation-readiness. “AI Overviews are built to only surface information that is backed up by top web results, and include links to web content that supports the information presented in the overview” — Google (Official Documentation).

Content that buries its answer in the third paragraph fails the extractability filter before any other evaluation criteria apply.

- Answer-led structure is a prerequisite, not a nice-to-have

- Narrative flow that delays the key point disqualifies the content from AI citation

- Generic AI writing follows a build-up → reveal structure that fails extraction

- Verified content paired with structured formatting passes the extractability filter; generic AI content doesn’t

The format requirements for AI citation are systematic. Unverified AI content routinely fails them because it’s generated for readability rather than extractability.

E-E-A-T as an Algorithmic Barrier

Google’s AI systems now enforce E-E-A-T as an algorithmic filter, not just a guideline for human reviewers. For YMYL queries — healthcare, legal, financial — Google maintains an elevated threshold requiring supporting information from reliable, trustworthy sources with verified author credentials.

- Entity density in cited content averages 20.6% vs. 5-8% in standard text

- Author schema, HTTPS security, and structured data matching are prerequisites for citation eligibility

- AI content without proprietary data or first-person expertise offers no information gain over existing indexed content

E-E-A-T isn’t a content quality rubric that better writing can satisfy — it’s an algorithmic filter requiring structural and technical compliance.

Entity Density and Information Gain

Google’s February 2026 core update explicitly increased the weighting of information gain as a ranking signal — rewarding original analysis and penalizing content that reorganizes what’s already indexed.

- Heavily cited content carries 20.6% entity density vs. 5-8% in generic AI output

- Information gain requires original data, first-person experience, or proprietary analysis

- Aggregated summaries don’t generate positive information gain signals

AI search citation is competitive. Organizations deploying verified, entity-rich content are accumulating a citation footprint while competitors still haven’t updated their production model.

Related: Content at Scale – Why Volume Without Verification Fails in AI Search

What Does the Performance Gap Between AI Content and Verified Content Actually Look Like?

The performance gap between generic AI content and verified, structured content is measurable across search rankings, citation frequency, and conversion rates. The data doesn’t suggest verification is an optional quality enhancement — it shows unverified AI content underperforms across every channel that matters.

The 8x Search Ranking Disadvantage

A Semrush study of 42,000 blog posts found that “human-written content was 8x more likely to rank #1 on Google than purely AI-generated pages” — Search Engine Land (citing Semrush). AI content frequently appears in the lower half of page one but rarely secures the featured snippet or top position for competitive queries.

- 72% of SEO professionals believe AI content performs as well as human content

- Actual ranking data shows AI content consistently underperforms at the top of the results

- Only 19% of SEO teams report that AI improves content quality, despite 70% using it for speed

The 8x disadvantage is a lead generation problem, not just an SEO metric.

Multi-Location Scaling Failure and Data Deserts

AI search engines measure “data confidence” — prioritizing sources that are complete, consistent, and frequently updated across all locations. When AI generates local content without a verification layer, inconsistencies accumulate, and AI confidence in the brand’s data drops.

- Inconsistent hours, addresses, or service details in smaller markets create location-level invisibility in AI search

- Brand voice drift occurs when different prompts or models are used across locations

- Near-duplicate content across location URLs increases crawl inefficiency and delays indexation

- McKinsey research indicates that only 21% of AI projects reach production scale with measurable returns

Data deserts — locations with fragmented, unverified content — are invisible to AI search systems precisely when those systems are driving the highest-converting traffic.

Why Verified Content Converts Differently

Verified, structured content gets cited by AI platforms because it meets their standards. When it does, the users it delivers are pre-qualified — they’ve completed an AI research process that selected this source as trustworthy.

- AI search traffic converted at 21.4% average vs. 3.32% site average across an 8-month production period — a 6.4x performance multiplier — in a 12-location regulated healthcare operation

- ChatGPT referral traffic peaked at 40% CVR in January 2026 for the same production environment

- Verified content earns citation; cited content delivers pre-qualified users; pre-qualified users convert at higher rates

The trust chain from verification to citation to conversion is systematic — it can be built, and it compounds over time.

How Do Multi-Location Operators Build a Verification Layer That Scales?

Building a verification layer that scales requires treating content production as a system architecture problem rather than a content quality problem. The verification gap isn’t closed by hiring better writers or upgrading AI tools — it’s closed by implementing the infrastructure that sits between AI generation and publication.

Research-First vs. Generation-First Workflows

The failure mode most operators are running into is generation-first: prompt the AI, get the output, do a light editorial pass, and publish. Research-first workflows invert that sequence — verified source material is assembled before generation begins, so the model works from a validated foundation rather than internal probabilistic memory.

- Perplexity Pro or equivalent research workflows produce citation-tracked source documents before any generation occurs

- AI generation constrained to verified source material produces dramatically lower hallucination rates

- Source documents create the audit trail that compliance review requires

Generation-first is faster at the start; research-first is defensible at scale.

Human-in-the-Loop Requirements

The FDA’s April 2026 ruling established human-in-the-loop oversight as an explicit compliance requirement for AI-generated content in regulated industries — DLA Piper. HITL isn’t a regulatory checkbox — it’s the mechanism that catches what automated systems miss.

- HITL verification at the citation level catches fabricated sources before publication

- Human review of claim-to-source mapping is the last line of defense against confident fabrication

- At 50 articles per month, HITL must be systematized — individual reviewer judgment without a protocol doesn’t scale

Operators who believe AI content can be verified at publication speed by a single editor are describing a system that will fail under production load.

Infrastructure vs. Tool Selection

The verification gap isn’t solved by switching AI tools. A different model with a lower hallucination rate still requires the same verification infrastructure — research-first workflows, citation cross-checking, human review, and compliance-stage quality control.

- The five failure modes each require a different infrastructure component to address

- Global business losses from AI hallucinations reached $67.4 billion in 2024 alone — SuprMind AI — that’s a systems problem, not a vendor problem

- Multi-location operators need a single unified production system across all locations, not a different tool per content type

The operators who close the verification gap first gain a compounding advantage — their citation footprint grows while competitors are still optimizing prompts.

How Content Ops Lab Builds Content Infrastructure That Eliminates the Verification Gap

A 12-location regulated healthcare organization used the Content Ops Lab production system to deliver 1,000+ citation-verified articles over 23 months — zero compliance violations, 45% of all leads from organic search, and AI search traffic converting at 21.4% average against a 3.32% site baseline. The system was iterated through live production at 50+ articles per month until every verification failure mode had been addressed.

- 23-month production test inside a 12-location regulated healthcare organization

- 1,000+ citation-verified articles and pages delivered with zero compliance violations

- 45% of all leads from organic search — outperforming paid search nearly 2:1

- AI search converting at 21.4% average vs. 3.32% site baseline — 6.4x performance multiplier

- 5x production scale: 10 articles/month to 50+ without adding headcount

- 653% impression growth and 1,700% click growth for an emerging brand over 14 months

- 887% ChatGPT traffic growth in 7 months (July 2025 – February 2026)

- Dual-brand methodology validated on both mature brand maintenance and emerging brand growth

The Content Ops Lab Production System

Every engagement runs through the same four-stage infrastructure — the difference between a tool and a system is that the system produces consistent results regardless of who’s running it.

- Research: Verified sources assembled before generation — no AI writing from memory

- Verification: Citations cross-checked line by line, STAT vs CLAIM labeled, audit trail documented

- Optimization: Built simultaneously for Google, ChatGPT, Perplexity, Claude, and Gemini

- Delivery: WordPress-staged or Google Docs packaged — publish-ready, compliant, Grammarly-reviewed

The verification layer is what separates content that ranks and converts from content that compounds your compliance exposure.

Ready to build a content infrastructure that scales without the compliance risk? Get in touch today — we’ll assess your current content operation and outline what a systematic approach would look like for your organization.

FAQs About Why AI-Generated Content Fails

Can’t we just manually fact-check AI-generated content after it’s generated?

Manual post-generation fact-checking doesn’t scale at 20-50+ articles per month, and it doesn’t address confident fabrication errors that look identical to accurate content. Effective verification requires a research-first workflow where verified source material is assembled before generation begins. Post-publication review catches some errors; research-first workflows prevent most of them.

How long does it take to build a verification-first content system that scales?

A full System Build runs 12 weeks from discovery through training, with 90 days of post-launch support. Done-For-You engagements are operational within the first production cycle — research, citation verification, and quality control infrastructure are already in place. The more relevant question is how long unverified AI content will compound compliance exposure before the system gets built.

How does citation verification protect healthcare and legal practices from regulatory exposure?

Citation verification creates an audit trail linking every published claim back to its source document, including exact quote extraction and line number documentation. The April 2026 FDA Warning Letter established that human review of AI-generated outputs is a regulatory requirement — citation verification is the mechanism that makes that review possible at scale.

How is a verification-first content system different from what a traditional content agency delivers?

Traditional agencies use AI to generate content at volume — reviewing for tone and grammar, not citation accuracy. Verification-first systems assemble source material before generation, cross-check every claim against source documents, and maintain an audit trail for compliance review. The outcome: verified content meets extractability thresholds for AI search, complies with regulated industry standards, and produces a citation footprint that compounds.

Does Content Ops Lab’s Done-For-You model include citation verification, or is that only in the System Build?

Citation verification is a core component of both service models. In Done-For-You engagements, every article goes through the full Research → Verification → Optimization → Delivery workflow — verified citations, line-number documentation, STAT vs CLAIM labeling, and compliance-stage quality control are production standards, not add-ons. System Build engagements transfer that same infrastructure to your team with the training needed to run it independently.

Key Takeaways

- AI-generated content fails because LLMs are probabilistic text predictors, not fact-verification systems — hallucination is architectural, and no prompt engineering eliminates it

- Confident fabrication is the most dangerous failure mode: models use definitive language most frequently when generating incorrect information, making errors indistinguishable from accurate content without a research-first verification workflow

- Citation hallucination rates range from 3–13% for URLs alone, and fabricated references duplicate in nearly 30% of cases, compounding compliance exposure at production scale

- The FDA and FTC have established that human-in-the-loop oversight of AI-generated outputs is a regulatory requirement in regulated industries, not a best practice

- AI search systems select content based on extractability, entity density, and corroboration standards that generic AI content can’t meet — verified, structured content is the prerequisite for AI citation

- A 12-location regulated healthcare operation using verification-first production achieved 21.4% AI search CVR against a 3.32% site baseline over 8 months — a 6.4x performance multiplier that compounds as the citation footprint grows

Why AI-Generated Content Fails: Systems Solve What Tools Can’t

The evidence points in one direction: AI-generated content fails because organizations deploy generation capability without verification infrastructure. “Human-written content was 8x more likely to rank #1 on Google than purely AI-generated pages”— Search Engine Land (citing Semrush) — but the meaningful comparison isn’t human vs. AI. It’s verified vs. unverified.

Operators who build a research-first, citation-verified production system capture the efficiency of AI generation without absorbing the compliance and citation failure risks that come with unverified deployment. The first-mover window on AI search citation is still open. The operators who build verification infrastructure are now accumulating a citation footprint that compounds — and their competitors, still running generation-first workflows, are not.

Content Ops Lab built that system inside a regulated healthcare operation over 23 months — 1,000+ articles, zero compliance violations, and an AI search converting at 6.4x the site baseline.