AI Content Risks for Multi-Location Businesses: What Operators Need to Know

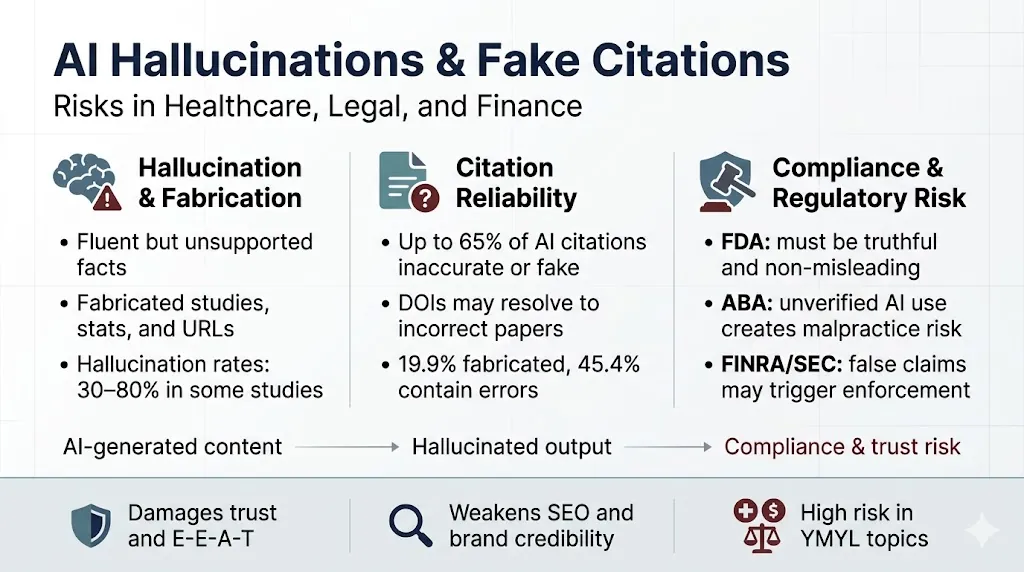

AI content risks for multi-location businesses are most dangerous not when they’re obvious — but when they’re invisible. Fabricated citations look like real ones. Hallucinated statistics read as credibly as verified ones. A JMIR study evaluating GPT‑3.5, GPT‑4, and Bard across 471 references found hallucination rates of 39.6%, 28.6%, and 91.4%, respectively, and precision as low as 0% for some models.

Understanding AI content risks before scaling production is the difference between a compliance program and a compliance liability. Content Ops Lab built its citation verification infrastructure inside a 12-location regulated healthcare organization — 1,000+ articles and pages delivered with zero compliance violations over 23 months. The difference wasn’t the AI tools. It was the verification layer that those tools ran through before anything reached publication.

Related: Why Generic Content Fails in AI Search Even If It Ranks in Google

What AI Content Risks Are Multi-Location Operators Actually Exposed To?

AI content risks at scale cluster around three predictable failure points: statistical fabrication, regulatory exposure, and reputational damage that compounds across locations before anyone catches it.

The Fabrication Problem at Scale

Most multi-location operators encounter hallucination as an abstract concern until the first audit finds a nonexistent statistic.

- AI models generate plausible-sounding citations that can’t be located in any database

- Fabricated URLs mimic real source formatting, making errors non-trivial to detect

- Statistical hallucinations often appear more confident than verified data

- A single bad stat in a template can propagate across dozens of location pages

The fabrication problem appears consistently across leading commercial systems at measurable rates — not just in obscure models or edge-case prompts.

Compliance Exposure Across Locations

Regulated industries face a compounding risk: the same unverified claim published across 10 or 15 locations creates 10 or 15 compliance events, not one.

- Healthcare content requires accurate benefit and risk representation under FDA standards

- Legal content carries attorney liability for unverified citations used in client work

- Financial content must meet FINRA and SEC standards for factual accuracy

- A single unverified claim flagged in one market becomes a network-wide audit trigger

The compliance surface area grows with your location count. So does the exposure.

Reputational Risk vs. Legal Risk

These are related but distinct concerns, and operators often focus on the wrong one first.

- Legal risk is episodic — triggered by a specific audit, court filing, or regulator review

- Reputational risk is continuous — eroding trust across patients, clients, and referral networks

- AI-generated health misinformation spreads in an authoritative tone, accelerating trust erosion

- Google’s ranking systems prioritize helpful, reliable content — unverified AI content creates SEO exposure alongside compliance exposure

Both risk categories share the same root cause: AI writing from memory without a verification layer.

What AI Content Risks Does Citation Accuracy Research Actually Show?

“More than 30% of the citations provided by the GPT‑3.5 version do not exist, and this rate is only slightly reduced for the GPT‑4 version.” That finding from The American Economist isn’t a warning about early AI limitations — it’s a description of what most agency AI workflows are producing right now.

Generic AI Tools and the Verification Gap

Most AI content tools are built for generation speed rather than citation accuracy.

- Generic AI tools write from internal model parameters, not verified external sources

- No cross-check mechanism exists between the generated text and the source documents

- Citation formatting is convincing regardless of whether the source actually exists

- Fabrication rates are model- and task-dependent — harder prompts produce higher error rates

The tools themselves aren’t the problem. The absence of a verification layer around them is.

What Agency AI Workflows Miss

Traditional content agencies that have added AI to their process face a structural limitation: their workflows were built for volume, not verification.

- Agencies optimize for article delivery speed, not citation accuracy

- One-draft delivery models have no systematic quality diagnostic step

- No STAT vs. CLAIM labeling means different evidence types receive identical (insufficient) scrutiny

- Template-driven production can’t integrate client-specific compliance standards

Adding AI to a volume-first workflow doesn’t fix the verification problem — it accelerates it.

The Citation Reliability Data

The research on citation accuracy is sufficiently consistent across studies to serve as a baseline assumption for any VP of Marketing approving an AI content program.

- A 2025 JMIR Mental Health study found 19.9% of GPT‑4o citations were entirely fabricated

- Across six independent investigations, roughly 51% of 732 LLM-generated citations were fabricated

- Newer models reduce fabrication rates but don’t eliminate them — GPT‑4 still produced ~18% fabricated citations vs. ~55% for GPT‑3.5

- Fabricated references mimic real citation structure but cannot be found in bibliographic databases

These aren’t failure rates that disappear at scale. They compound.

What Does a Compliant AI Content Workflow Actually Require?

The FDA’s standard for prescription drug promotion states that content “must be truthful and non-misleading,” must accurately communicate information without omitting material facts, and must not misrepresent data from studies. That standard doesn’t care whether a statistic was invented by a human writer or an AI model.

Research-First Generation

The most important decision in a compliant AI workflow happens before the AI writes a single word: establishing what verified source material the generation is anchored to.

- Retrieval-augmented generation (RAG) frameworks reduce hallucinations by grounding outputs in retrieved documents

- A 2025 study found RAG with reliable source material significantly reduced hallucinations vs. standalone GPT models and Google search

- Research must be completed and verified before generation begins — not after

- Source libraries need to be topic-specific, credible, and systematically maintained

AI writing from verified research is categorically different from AI writing from memory.

Citation Cross-Checking Protocol

A research-first approach reduces hallucination risk. A citation cross-check protocol catches what slips through.

- Every statistic requires exact quote extraction against the source material

- Line numbers document the audit trail for every claim — not summaries, not paraphrases

- STAT vs. CLAIM labeling applies different verification standards to different evidence types

- No citation is considered verified until independently located and confirmed in the source

This step is manual by design. Automated detection tools triage suspect spans but cannot guarantee factual correctness — they focus human review, not replace it.

Human Governance and Sign-Off Structure

The ABA’s Formal Opinion 512 states that “lawyers’ uncritical reliance on content created by a GAI tool” is risky and “almost certainly malpractice.” The same logic applies to healthcare marketing, financial services content, and any regulated consumer-facing material.

- Human review and approval must be mandatory for all AI-assisted outputs before publication

- Sign-off structure should be role-specific — compliance review is separate from editorial review

- Operators should maintain records of AI use that can be produced in the event of an audit

Governance isn’t optional infrastructure. In regulated industries, it’s the difference between defensible content and exposed content.

If your operation needs to produce 20-50+ articles per month without creating compliance liability, Content Ops Lab builds the infrastructure to make that possible. Contact us today to discuss your content production requirements.

How Do Hallucination Risks Differ Across Healthcare, Legal, and Finance?

The failure modes are consistent across industries — AI fabricates citations, invents statistics, and presents unsupported claims in authoritative language. The regulatory consequences vary significantly by vertical.

Healthcare Compliance Standards

Healthcare content sits at the intersection of FDA marketing standards, clinical accuracy requirements, and patient safety considerations.

- FDA standards require that promotional communications be truthful, balanced, and not misrepresent study data

- A Mass General Brigham study found ChatGPT’s accuracy at approximately 72% overall — roughly one in four outputs contained errors

- Nine LLMs, including ChatGPT, accepted medical misinformation in 32% of prompts in a recent multi-model evaluation

- Unverified AI-generated health content spreads incorrect information in an authoritative tone, eroding trust in formal medical systems

For multi-practice operators, the compliance surface extends across every location page, service description, and condition explainer published.

Legal Practice Liability

The Mata v. Avianca case documents the costs of AI citation fabrication in a legal context: a $5,000 court-imposed sanction, a judicial opinion describing AI-generated case summaries as “gibberish,” and public documentation of an attorney’s failure to verify authorities independently.

- ABA Formal Opinion 512 ties AI use to duties of competence, candor, and supervision

- Independent verification of AI-assisted research and citations is required before use in client work or court filings

- Rule 11 obligations apply regardless of the tool used to generate content

- Multi-office firms face network-wide exposure when a fabrication standard isn’t enforced uniformly

The bar associations have responded to the documented consequences. The verification standard is no longer optional.

Financial Communications Regulation

FINRA Rule 2210 and the SEC’s Marketing Rule require that financial communications be fair, balanced, and not misleading — with a reasonable basis required to substantiate all material statements.

- Fabricated performance statistics or mischaracterized risks in AI-generated content directly violate these standards

- SEC enforcement focus on marketing practices creates active regulatory risk for unverified AI-generated claims

- The “reasonable basis” requirement means the verification standard must exist before publication — not after a complaint is filed

The compliance requirements across all three sectors converge on the same operational need: a systematic verification layer that runs before anything reaches a client or a regulator.

Related: How AI Search Engines Decide Which Sources to Cite

What Does Verified AI Content Production Look Like in Practice?

A 2025 JMIR Mental Health study found that 19.9% of GPT‑4o citations were entirely fabricated. Among citations that appeared real, 45.4% contained bibliographic errors — meaning nearly two-thirds of all citations were either fake or inaccurate. At 50 articles per month, that’s a systematic liability requiring a systematic fix.

STAT vs. CLAIM Labeling

Not all citations carry the same verification burden. A labeling system that distinguishes between them allocates verification resources appropriately.

- STAT labels apply to citations containing numerical data — hallucination risk is highest here

- CLAIM labels apply to sourced statements without numbers — verification focuses on source existence and accuracy of representation

- Both label types require an independent cross-check before use

- Labeling creates an auditable record of verification decisions, not just verification completion

Line-Number Audit Trails

Paraphrased citations introduce interpretation error. Exact quote extraction with line numbers eliminates that layer.

- Every citation requires word-for-word extraction from source material, not summaries

- Line numbers create a direct, auditable link between the published claim and the source document

- Exact quotes prevent the “faithful paraphrase” failure mode, where meaning shifts during restatement

- Audit trails support compliance review without requiring re-verification from scratch

Quality Thresholds That Hold at Scale

Verification infrastructure only works if the quality threshold is non-negotiable across every article, every month.

- Grammarly review at ≥95 score minimum — not a guideline, a gate

- Multi-draft quality diagnostic: if the first output shows fabrication or generic patterns, delete and restart

- Citation verification is completed before delivery — not flagged as a revision item

How Should Operators Evaluate Whether Their Current AI Content Workflow Is Compliant?

Building compliance infrastructure is a longer conversation. Identifying whether your current workflow has a verification problem can happen in an afternoon.

Red Flags in Existing Workflows

Most AI content compliance gaps are visible in the workflow structure before a single article is reviewed.

- No documented citation verification step between generation and publication

- AI tools write from memory rather than from pre-verified research documents

- No distinction between STAT citations and CLAIM citations in the review process

- Single-draft delivery with no quality diagnostic checkpoint

- No human sign-off protocol specific to regulated content types

If any of these describe your current workflow, the compliance exposure is structural — not article-by-article.

What Verification-Ready Infrastructure Includes

Verification-ready infrastructure is a production architecture built around verification from the start — not a checklist addition to an existing agency workflow.

- Research-first methodology: verified sources assembled before AI generation begins

- Citation cross-check protocol with line-number documentation

- STAT vs. CLAIM labeling with differentiated verification standards

- Human governance layer with defined sign-off roles and documented AI use policies

Building vs. Buying the System

Operators have two practical options: build verification infrastructure internally, or engage a partner who has already built and production-tested it.

- Building internally takes 3-6 months minimum from knowledge documentation through production scaling

- System Build engagements deliver complete infrastructure, templates, and training — operators own and operate the system after handoff

- Done-For-You engagements transfer full production responsibility — operators review deliverables, not workflows

The first-mover window for AI search citation dominance is measured in quarters. Compliance infrastructure that takes 6 months to build from scratch is 6 months of exposure — and 6 months of competitive ground ceded.

How Content Ops Lab Builds Compliant Content Infrastructure

Content Ops Lab’s citation verification system was built and production-tested within a 12-location regulated healthcare organization — not designed in theory and later validated. The results across a 23-month engagement set the compliance and performance standard that the methodology is held to on every client engagement.

- 23-month production test inside a regulated multi-location healthcare organization

- 1,000+ articles and pages delivered with zero compliance violations across the full engagement

- 45% of all leads generated from organic search — outperforming paid search nearly 2:1

- AI search converting at 21.4% average vs. 3.32% site baseline — a 6.4x performance multiplier

- 653% impression growth and 1,700% click growth for an emerging brand built from near-zero organic presence

- 5x production scale achieved — from 10 articles/month to 50+ without adding headcount

- 887% ChatGPT traffic growth in 7 months (July 2025 – February 2026)

- Dual-brand methodology validated on both mature brand maintenance and emerging brand growth

The Content Ops Lab Production System

Four sequential stages — each a prerequisite for the next.

- Research: Verified sources assembled before generation — no AI writing from memory, ever

- Verification: Line-by-line citation cross-check with STAT vs. CLAIM labeling and full audit trail

- Optimization: Multi-platform build for Google + ChatGPT + Perplexity + Claude + Gemini simultaneously

- Delivery: WordPress staging or Google Docs — publish-ready, Grammarly-reviewed, compliance-confirmed

The production system is the product. AI tools are components inside it.

Ready to build a content infrastructure that scales without the compliance risk? Get in touch — we’ll assess your current content operation and outline what a systematic approach would look like for your organization.

FAQs About AI Content Risks

What’s the difference between AI hallucination and citation fabrication?

Hallucination is the broader category — AI generating text that sounds accurate but isn’t grounded in facts. Citation fabrication is a specific type: AI-generated references that mimic real sources but can’t be found in any database. Both are problems in production workflows, but fabricated citations carry distinct compliance exposure because they create a false audit trail that’s harder to detect than a general factual error.

How does Content Ops Lab handle compliance requirements in healthcare and legal content?

Every article begins with pre-verified research — AI generation is anchored to source documents, not model memory. Citations are extracted as exact quotes with line numbers, labeled as STAT or CLAIM, and cross-checked before delivery. The system was built and tested inside a regulated healthcare environment — 1,000+ articles and pages with zero compliance violations over 23 months — and applies the same verification standards to legal and financial content.

What does citation verification actually involve in production?

Citation verification means independently locating every source, extracting exact quotes rather than paraphrases, documenting line numbers for audit purposes, and distinguishing between statistical claims and non-numerical sourced statements. It’s a manual step by design — automated tools help triage suspect content, but can’t guarantee accuracy. Verification happens before delivery, not as a post-publication review.

How is Content Ops Lab different from a content agency that also uses AI?

Most agencies add AI to a volume-first workflow. Content Ops Lab’s workflow is built around verification first, with AI generation as one stage in a multi-step production system. The structural difference: a quality diagnostic checkpoint that flags fabrication before it reaches the draft stage, STAT vs. CLAIM citation labeling, line-number audit trails, and a research-first methodology that grounds AI output in verified sources before writing begins.

Can AI content risks affect search rankings, not just regulatory compliance?

Yes. Google’s ranking systems prioritize content created to benefit people — not content created to manipulate rankings. Unverified AI content with fabricated statistics or unsupported claims can trigger quality signals that damage organic visibility. The compliance risk and the SEO risk share the same root cause: AI-generated content without a verification infrastructure to ensure accuracy before publication.

Key Takeaways

- AI hallucination and citation fabrication are production-scale risks — leading commercial models still fabricate citations at measurable rates even after significant model improvements

- Multi-location operators face compounding compliance exposure: a single fabricated statistic in a template can propagate across every location page simultaneously

- Healthcare, legal, and financial content each carries sector-specific regulatory consequences — FDA, ABA, FINRA, and SEC standards all apply to AI-generated claims

- Compliant AI content workflows require a research-first methodology, systematic citation cross-checking, STAT vs. CLAIM labeling, and a human governance layer — not just a human edit pass at the end

- Content Ops Lab’s citation verification system was production-tested across 23 months and 1,000+ articles in a regulated healthcare environment, with zero compliance violations at 50+ articles per month

- The first-mover window for AI search citation dominance is measured in quarters — operators building compliant infrastructure now capture compounding advantages before competitors enter the channel

Why AI Content Risks Require Systems, Not Shortcuts

A 2025 study on cancer information chatbots found that incorporating reliable source material into AI generation “significantly reduced the occurrence of hallucinations compared with using conventional GPT models or Google searches as reference sources.” The finding confirms what 23 months of production testing validated operationally: the solution to AI content risks isn’t better prompts or more careful editing — it’s a systematic research-and-verification architecture that grounds AI output in confirmed facts before generation begins.

For multi-location operators managing compliance obligations across regulated industries, the question isn’t whether to use AI for content production; it’s how. It’s about whether the infrastructure around that AI can produce content that’s defensible under scrutiny.

Content Ops Lab exists to build that infrastructure. The compliance record it runs on isn’t theoretical. It’s 23 months of production in the environment where these risks are highest.

Related: What Is Content Infrastructure for Multi-Location Brands?