AI Search Content Strategy for Multi-Location Businesses: What Actually Works

AI search content strategy for multi-location businesses isn’t a marketing refresh — it’s an infrastructure decision. “Zero-click search… grew 13 percentage points, from 56% to 69% in May 2025” — Search Engine Roundtable. AI systems now synthesize answers and surface two or three cited sources — not a page of links.

For multi-location operators in healthcare, legal, and home services, content volume is no longer the primary driver of performance. Being one of the sources AI systems cite is.

Content Ops Lab built its AI search content strategy for multi-location businesses inside a 12-location regulated healthcare organization — 1,000+ citation-verified articles across 23 months with zero compliance violations, producing AI search conversion rates of 20-32% against a 3.32% site baseline.

Related: The AI Citation Economy – Why Visibility Matters More Than Rankings

Why Is Your Current Content Strategy Failing in AI Search?

The content programs most multi-location operators are running were designed for a search environment that no longer exists. Traditional SEO rewarded volume, keyword density, and link acquisition. AI search rewards structured, answer-first content that retrieval layers can parse, chunk, and cite. Most operators haven’t made that transition — and their traffic data is starting to show it.

The Zero-Click Collapse

AI Overviews, ChatGPT Search, and Perplexity are answering questions inside the interface — and clicks to individual websites are falling. “We found that the presence of an AI Overview now correlates with a 58% lower average clickthrough rate for the top-ranking page” — Ahrefs.

- Ranking #1 no longer guarantees traffic volume

- AI answers satisfy informational intent before users click

- Click loss is sharpest for non-branded, high-volume informational queries

- Multi-location brands with thin service pages take disproportionate hits

This isn’t a Google algorithm update to wait out. It’s a structural shift in how users access information.

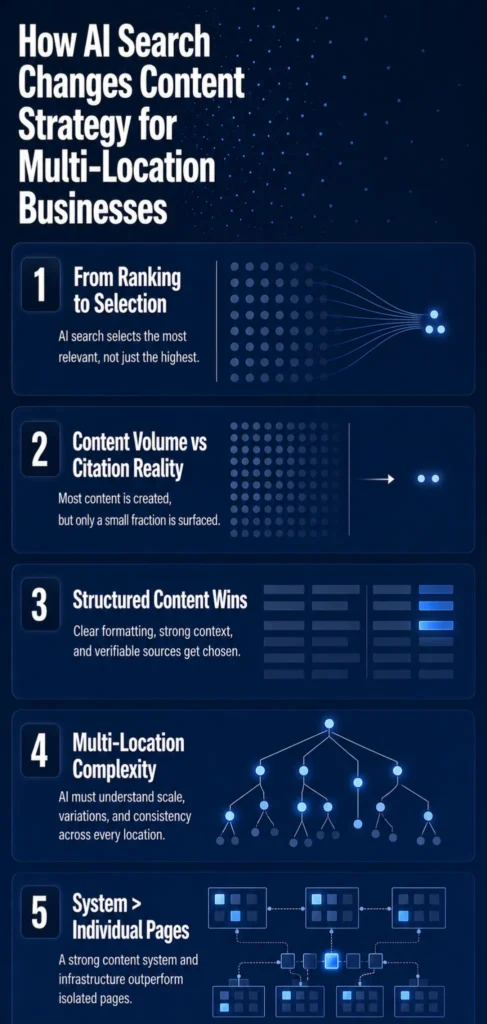

Ranking vs. Being Cited — Two Different Games

The old objective was ranking for many keywords across many pages. The new objective is becoming one of the three to five sources AI systems cite when constructing a high-value answer — and those two standards require different content architectures.

- Citation selection is highly concentrated — a small number of domains dominate

- AI systems prefer content that exposes clean, quotable answer blocks early

- Statistical backing with credible sources increases citation selection odds

- Generic thought leadership without structure rarely makes it into AI answers

Most current content programs optimize for the ranking game, while the citation game goes uncontested.

Why Volume-First Programs Miss the AI Era

More pages, more posts, more keywords — this model produces near-zero returns in AI search. Volume-first programs generate thin, near-duplicate content that AI retrieval layers actively deprioritize, and in regulated industries, that content carries hallucination and compliance risk without systematic verification.

- AI engines select from a compressed set of trusted, structured sources

- Near-duplicate location pages compete with each other and cancel out entity signals

- Ungoverned AI-generated content scores lowest in Google’s quality evaluation framework

- Production velocity without verification infrastructure creates compounding liability

The operators winning AI citations aren’t publishing more. They’re publishing better and building the systems to do so consistently.

What Does AI Search Actually Reward in Multi-Location Content?

The pattern across millions of AI Overview citations, RAG retrieval studies, and platform documentation is consistent: AI search rewards content designed for extraction rather than consumption. Understanding that distinction is the starting point for any effective AI search content strategy for multi-location businesses.

Answer-First Formatting and Early Placement

“AI Overviews tend to cite answers that appear early in a page. In our analysis, 55% of citations came from the first 30% of content, while only 21% came from the bottom 40%” — CXL.

- Lead with a 40-60 word direct answer to the question the page targets

- Provide the core factual claim in the first two sentences

- Support with structured subsections and evidence that can be independently retrieved

- Long narrative intros and buried answers are citation dead zones
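As a rough illustration of the 40-60 word guideline above, a page's opening paragraph can be checked automatically. This is a minimal sketch: the function names are hypothetical, and it assumes paragraphs are separated by blank lines.

```python
import re


def lead_answer_word_count(page_text: str) -> int:
    """Count the words in the first non-empty paragraph of a page."""
    paragraphs = [p.strip() for p in re.split(r"\n\s*\n", page_text) if p.strip()]
    if not paragraphs:
        return 0
    return len(paragraphs[0].split())


def is_answer_first(page_text: str, min_words: int = 40, max_words: int = 60) -> bool:
    """True when the opening paragraph falls inside the target word range."""
    return min_words <= lead_answer_word_count(page_text) <= max_words
```

A check like this only measures length. Whether the opening paragraph actually answers the target question still needs editorial review.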

Placement isn’t about aesthetics — it’s about how retrieval layers operate. They pull chunks, not pages.

Structure, Chunking, and Retrieval Performance

AI systems retrieve discrete chunks of text aligned with specific queries and pass the most relevant ones into their answer-generation context. Pages with clear headings, tight topical segmentation, and discrete answer blocks produce high-precision chunks that accurately match queries.

- Use question-based H2 headings that mirror how users phrase queries

- Limit each content section to a single, well-scoped subtopic

- Use bullet lists and short paragraphs to create clean chunk boundaries

- Tables and structured comparisons are particularly extractable
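To make the idea of chunk boundaries concrete, here is a simplified sketch of heading-based chunking. It assumes pages are stored as Markdown with "## " marking each question heading. Real retrieval systems use their own, undisclosed chunking logic, so treat this as an approximation for auditing your own pages.

```python
def chunk_by_headings(markdown_text: str, marker: str = "## ") -> list[dict]:
    """Split a page into one chunk per heading (text before the first heading is ignored)."""
    chunks = []
    current = None
    for line in markdown_text.splitlines():
        if line.startswith(marker):
            # A new heading closes the previous chunk and opens a new one.
            current = {"heading": line[len(marker):].strip(), "body": []}
            chunks.append(current)
        elif current is not None and line.strip():
            current["body"].append(line.strip())
    return [{"heading": c["heading"], "text": " ".join(c["body"])} for c in chunks]
```

If one chunk covers several unrelated subtopics, that is a signal the section should be split under its own heading.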

Every service page, FAQ, and blog article needs a structural overhaul — not just a content refresh.

Why Near-Duplicate Location Pages Compound the Problem

Most multi-location brands have structurally identical location pages: the same template, a swapped city name, and minimal unique content. In AI search, this creates internal entity competition with no clear basis for AI to prefer one location, plus quality penalties from Google’s evaluation framework for scaled, low-effort templated content.

- Templated city pages trigger filtering in both Google organic and AI answer selection

- Near-duplicate content weakens topical authority signals across the entire domain

- Each location needs substantively differentiated content — unique services, local proof, distinct data

- Thin location coverage dilutes the entity coherence that AI systems use to resolve brand identity
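One way to find templated pages that differ only by a swapped city name is to compare word shingles. The sketch below uses Jaccard similarity over three-word shingles. Both the technique and the function names are illustrative choices, not a description of how any search engine detects duplicates.

```python
def shingles(text: str, size: int = 3) -> set[tuple[str, ...]]:
    """Return the set of overlapping word sequences of the given size."""
    words = text.lower().split()
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}


def jaccard_similarity(a: str, b: str, size: int = 3) -> float:
    """Shared shingles divided by total distinct shingles (0.0 to 1.0)."""
    sa, sb = shingles(a, size), shingles(b, size)
    if not sa and not sb:
        return 0.0
    return len(sa & sb) / len(sa | sb)
```

Location pages that score close to 1.0 against each other are candidates for consolidation or substantive rewriting. The cut-off is a judgment call to tune on your own content.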

The volume that felt like an asset in 2021 is now an active liability in AI search environments.

What Breaks First When Multi-Location Content Scales Without Systems?

Scaling content across 10, 15, or 20 locations without systematic infrastructure creates compounding structural problems that get harder to fix the longer production continues. For VPs of Marketing managing regulated industries, the failure modes are predictable.

Governance Failures at the Location Level

Without standardized schemas, review workflows, and verification rules, content drift is inevitable across a multi-location footprint. Different writers interpret brand voice differently, citation standards vary, and location-specific compliance requirements get missed.

- Policies in PDFs and approvals in email don’t scale across 10+ locations

- Copy-paste content between location templates creates drift and compliance gaps

- Inconsistent NAP data across location pages undermines both local SEO and AI entity signals

- No single source of truth means every revision requires tracking down the original intent
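NAP drift of this kind can be caught with a simple audit. The sketch below is hypothetical: it assumes each record is a dict with `location_id`, `name`, `address`, and `phone` keys gathered from your location pages and listings. The normalization is deliberately light, covering case, whitespace, and phone punctuation only.

```python
import re
from collections import defaultdict


def _clean(value: str) -> str:
    """Lowercase and collapse whitespace so cosmetic differences are ignored."""
    return re.sub(r"\s+", " ", value.strip().lower())


def normalize_nap(name: str, address: str, phone: str) -> tuple[str, str, str]:
    """Reduce a name/address/phone triple to a comparable form."""
    return _clean(name), _clean(address), re.sub(r"\D", "", phone)


def find_nap_conflicts(records: list[dict]) -> dict[str, set]:
    """Return locations that appear with more than one distinct NAP variant."""
    seen = defaultdict(set)
    for record in records:
        variant = normalize_nap(record["name"], record["address"], record["phone"])
        seen[record["location_id"]].add(variant)
    return {loc: variants for loc, variants in seen.items() if len(variants) > 1}
```

Because abbreviations such as "St" and "Street" are not reconciled here, they surface as conflicts, which is usually the desired behavior for an audit.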

Content governance isn’t an administrative function — it’s a performance variable.

Hallucination Risk in YMYL Industries

When AI tools generate content without verified research inputs, hallucination is a statistical certainty at scale. Stanford HAI research found hallucination rates in legal content range from 69% to 88% for state-of-the-art language models – HAI Stanford.

Even specialized legal AI research tools hallucinate more than 17% of the time — and healthcare operators face identical exposure across dozens of location pages simultaneously.

- A single hallucinated statistic published across 12 location pages is 12 compliance violations

- AI systems in regulated industries must demonstrate evidentiary grounding to earn citations

- Citation verification requires a workflow — it can’t be retrofitted after production runs at scale

- Google’s quality evaluation framework explicitly identifies unsourced YMYL content as the lowest-rated

Verification infrastructure is a prerequisite for operating safely in regulated content environments.

The Compounding Cost of Thin Content at Scale

Thin content doesn’t just fail to perform — it actively degrades domain authority over time. BrightEdge’s 16-month analysis shows AI citation radii tightening, with engines growing more conservative about which domains they cite — BrightEdge.

- Content debt compounds — the longer volume-first production runs, the harder remediation becomes

- Weak pages in a topic cluster drag down citation selection odds for stronger pages

- AI systems reward domain-level topical coherence, not individual page count

- Remediation requires systematic audit, consolidation, and re-architecture — not just better new articles

The operators who delay building systematic infrastructure create problems that take longer to fix than it would have taken to build correctly from the start.

If your operation needs to produce 20-50+ articles per month without sacrificing compliance or quality, Content Ops Lab can build the infrastructure to make it possible. Contact us to discuss your content production requirements.

How Do Content Systems — Not Content Campaigns — Win AI Citations for Multi-Location Businesses?

The difference between a content campaign and a content system is the difference between one-time results and compounding authority. Campaigns produce articles. Systems produce an interconnected knowledge infrastructure that AI engines can consistently retrieve, chunk, and cite across multiple queries and platforms.

From Isolated Posts to Interconnected Knowledge Infrastructure

AI engines evaluate the topical coherence of an entire domain and the relationship between entities — organizations, locations, services, and conditions. A brand with 80 tightly interlinked articles organized into coherent topic clusters outperforms one with 200 disconnected posts.

- Service pages, condition pages, FAQs, and location pages must interlink systematically

- Internal linking tells AI retrieval layers which pages define your core expertise

- Topic clusters build domain-level authority that individual posts can’t achieve

- Structured content graphs make it easier for AI systems to reuse specific chunks as answers safely
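A basic interlinking audit can start by finding pages that no other page links to. In this minimal sketch, the `links` mapping is an assumed input, which in practice would come from a site crawl.

```python
def find_orphans(links: dict[str, set[str]]) -> set[str]:
    """Return pages that receive no internal links from any other page.

    `links` maps each page URL to the set of internal URLs it links to.
    """
    linked_to = set()
    for source, targets in links.items():
        # Self-links do not count as inbound links.
        linked_to |= {target for target in targets if target != source}
    return set(links) - linked_to
```

Orphaned service, condition, or location pages are the first candidates to connect into a topic cluster.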

“54.5% of AI Overview citations now rank organically (up from 32.3%)” — BrightEdge. Organic authority and AI citation authority are converging — building one now builds both.

Related: How AI Search Engines Decide Which Sources to Cite

Entity Signals, Schema, and NAP Consistency

For multi-location operators, AI and local search systems rely on entity signals to resolve brand identity and location relevance. Consistent NAP data, structured LocalBusiness schema, and Google Business Profile completeness are baseline requirements — without them, AI systems can’t confidently attribute content to specific locations.

- NAP inconsistency across locations weakens entity resolution in AI knowledge graphs

- LocalBusiness schema provides explicit, machine-readable location data

- Structured data helps Google understand page content and gather information about organizations and locations

- GBP completeness is a direct input for local relevance signals that AI answers pull from
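For illustration, LocalBusiness markup for a single location can be generated from the same record that feeds the location page, which keeps NAP data in one place. The property names below are standard schema.org vocabulary, while the function name and the example values are hypothetical. A more specific schema.org subtype may fit a given business better.

```python
import json


def local_business_jsonld(name: str, street: str, city: str, region: str,
                          postal_code: str, phone: str, url: str) -> str:
    """Build a JSON-LD string describing one location as a LocalBusiness."""
    data = {
        "@context": "https://schema.org",
        "@type": "LocalBusiness",
        "name": name,
        "address": {
            "@type": "PostalAddress",
            "streetAddress": street,
            "addressLocality": city,
            "addressRegion": region,
            "postalCode": postal_code,
        },
        "telephone": phone,
        "url": url,
    }
    return json.dumps(data, indent=2)


# Hypothetical example location.
print(local_business_jsonld(
    "Example Clinic Austin", "12 Main St", "Austin", "TX", "78701",
    "+1-512-555-0100", "https://example.com/locations/austin",
))
```

The resulting string is typically embedded in the page inside a `<script type="application/ld+json">` element.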

Entity hygiene isn’t technical housekeeping — it’s the foundation of local AI visibility.

Citation Reinforcement and the Compounding Authority Loop

AI citation patterns show a compounding dynamic: frequently cited domains become more likely to be cited again. BrightEdge’s 16-month study shows overlap between AI citations and organic rankings has grown from 32.3% to 54.5% — exceeding 75% in regulated industries like healthcare — BrightEdge.

- AI engines increasingly lean on established sources rather than rotating to new ones

- Early citation presence reinforces perceived domain authority across subsequent queries

- The citation radius is tightening — AI systems are getting more conservative, not more exploratory

- Brands on 4+ platforms are 2.8x more likely to appear in ChatGPT responses

The operators who move first aren’t just winning today’s traffic. They’re building a defensible position that compounds over time.

What Does a Verified Content System Actually Deliver at Scale?

Positioning AI search content strategy for multi-location businesses as a strategic investment requires production data, not projections. The following results come from a 23-month production engagement within a 12-location regulated healthcare organization managing distinct service lines under full compliance requirements.

AI Search Conversion Performance vs. Organic Baseline

AI search traffic converts at fundamentally different rates than standard organic traffic: AI platforms pre-qualify users, so they arrive further along in the decision journey. Across an 8-month measurement window, AI search sessions converted at an average of 21.4%, compared to a 3.32% site baseline, a 6.4x performance multiplier. ChatGPT referral traffic peaked at 40% CVR in January 2026 after growing 887% over 7 months.

- AI search represents less than 0.3% of total traffic while delivering a disproportionate share of conversions

- Extended session durations confirm deeper engagement from AI-referred visitors

- The channel is still early-stage — conversion rates are high because competition for citations is low

- Pre-qualified intent is the structural driver — AI acts as a filter before users arrive
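The multiplier is simple arithmetic: the channel conversion rate divided by the site baseline. The sketch below reproduces it from the figures reported in this article. The function name is illustrative.

```python
def conversion_multiplier(channel_cvr: float, baseline_cvr: float) -> float:
    """Ratio of a channel's conversion rate to the site-wide baseline."""
    if baseline_cvr <= 0:
        raise ValueError("baseline_cvr must be positive")
    return channel_cvr / baseline_cvr


# Figures reported above: 21.4% average AI search CVR vs. a 3.32% site baseline.
average = conversion_multiplier(21.4, 3.32)
# The 20-32% range cited elsewhere in this article, against the same baseline.
low = conversion_multiplier(20.0, 3.32)
high = conversion_multiplier(32.0, 3.32)

print(f"average: {average:.1f}x, range: {low:.1f}x to {high:.1f}x")
```

The 6.4x figure corresponds to the 21.4% average. At the ends of the 20-32% range, the same calculation gives roughly 6.0x and 9.6x.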

“A strong content governance strategy is the foundation of scalable, compliant content operations in the AI era” — Aprimo. Conversion performance without governance infrastructure can’t be sustained at scale.

The Dual-Brand Test — Mature vs. Emerging Growth

The same content infrastructure was applied simultaneously to a 12-location mature brand defending established search authority and a 3-location emerging brand building from near-zero organic presence. Both achieved 40-45% of total leads from organic search — outperforming paid search nearly 2:1.

- The emerging brand scaled 653% in impressions and 1,700% in clicks over 14 months

- The mature brand maintained 186,000 clicks and 27.4 million impressions across a 4-month snapshot

- 12,487 total leads generated in H2 2025, with organic as the dominant channel

- The same methodology works at both growth stages — infrastructure doesn’t require rebuilding per brand

Methodology flexibility matters for operators managing brands at different stages of market development.

Measurement: Beyond Rankings Into AI Visibility

Traditional SEO metrics (rankings, impressions, clicks) are now incomplete performance indicators. Standard reporting in Google Search Console and GA4 doesn’t separate impressions from classic web links and AI Overviews, leaving AI search contribution invisible in default dashboards.

- AI citation share of voice is a distinct metric from organic ranking position

- Tools like Peec AI track brand visibility across ten AI engines simultaneously

- Citation tracking combined with on-site conversion data is the minimum viable measurement framework

- Operators who can’t measure AI search performance can’t optimize or report it
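On the on-site half of that framework, AI-referred sessions can be separated by classifying the referrer hostname. This sketch works under stated assumptions: the hostname list is illustrative and incomplete, platforms change their referrer behavior over time, and some AI traffic arrives with no referrer at all.

```python
from typing import Optional
from urllib.parse import urlparse

# Assumed, non-exhaustive mapping of referrer hostnames to AI platforms.
AI_REFERRER_HOSTS = {
    "chatgpt.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "gemini.google.com": "Gemini",
    "claude.ai": "Claude",
}


def classify_referrer(referrer_url: str) -> Optional[str]:
    """Return the AI platform name for a referrer URL, or None if it is not recognized."""
    host = (urlparse(referrer_url).hostname or "").lower()
    for known_host, platform in AI_REFERRER_HOSTS.items():
        # Match the host itself or any subdomain of it.
        if host == known_host or host.endswith("." + known_host):
            return platform
    return None
```

Sessions labeled this way can then be compared against the site baseline for conversion rate and session duration.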

The measurement gap is as much a strategic liability as the content gap.

Is Your Organization Ready to Build AI Search Content Infrastructure?

The strategic question isn’t whether an AI search content strategy for multi-location businesses matters. The data is settled. The question is whether your organization builds the infrastructure now, during the first-mover window, or waits until competitive pressure forces a more expensive remediation.

The First-Mover Window Is Closing

AI citation patterns show strong compounding dynamics — established sources get cited again, and newer entrants have to displace them. AI Overview appearance rates more than doubled in a single year, from 6.49% to 13.14%, while click-through rates for cited pages fell from 15% to 8%.

- Less than 5% of healthcare practices are currently optimizing for AI search citations

- Less than 10% of legal firms are tracking AI referral traffic

- Home services are nearly uncontested in AI citation optimization

- Implementation takes 3-6 months — organizations starting now build positions that are structurally harder to displace

The window isn’t permanent, and citation radii are tightening.

Done-For-You vs. System Build — Choosing Your Path

Content Ops Lab delivers AI search content infrastructure through two engagement models. Done-For-You is a fully managed service: research, generation, verification, and optimization handled end-to-end, with 20-50+ articles monthly delivered publish-ready and compliant. System Build is a 12-week implementation that hands your internal team a fully operational production system they own outright.

- Done-For-You is right for operators who need immediate production without internal bandwidth

- System Build is right for operators who want a permanent internal capability with minimal ongoing vendor dependency

- Both models deliver the same content standards and production infrastructure

- The engagement model choice is operational, not strategic

The production system is the same either way.

What the First 90 Days Actually Look Like

The first 90 days establish the foundation that all subsequent production scales on: knowledge documentation, style guide integration, keyword research, and initial content architecture. Production begins scaling in Month 2, and by Month 3, the system is running at target velocity with embedded citation verification.

- Knowledge documentation is the highest-leverage first step — it prevents AI from writing from generic memory

- Early articles establish citation baseline data for benchmarking AI search performance

- Month 4+ is compounding production — the system runs without reinvention

- Multi-location operators running volume-first programs see immediate quality differentiation in the first article batch

The infrastructure built in the first 90 days separates compounding authority from compounding content debt.

How Content Ops Lab Builds Content Infrastructure

Content Ops Lab’s production methodology was built and iterated inside a 12-location regulated healthcare organization over 23 months — not designed in a pitch deck. The results: 1,000+ citation-verified articles with zero compliance violations, AI search converting at 20-32% against a 3.32% site baseline, and organic search delivering 45% of all leads while outperforming paid search nearly 2:1.

- 23-month production test inside a 12-location regulated healthcare organization

- 1,000+ citation-verified articles and pages — zero compliance violations across the full engagement

- AI search CVR of 20-32% vs. 3.32% site baseline, a 6.4x performance multiplier at the 21.4% average

- 45% of total leads from organic search, outperforming paid search nearly 2:1

- 653% impression growth and 1,700% click growth for an emerging brand in 14 months

- 887% ChatGPT traffic growth in 7 months (July 2025 – February 2026)

- 5x production scale achieved: 10 articles/month to 50+ without adding headcount

- Dual-brand methodology validated on both mature brand maintenance and emerging brand growth

The Content Ops Lab Production System

Every engagement runs on the same four-stage infrastructure that produced these results.

- Research: Verified sources before generation begins — no AI writing from memory or hallucinated citations

- Verification: Line-by-line citation cross-check with STAT vs. CLAIM labeling and full audit trail

- Optimization: Multi-platform simultaneous build — Google, ChatGPT, Perplexity, Claude, and Gemini

- Delivery: WordPress staging or Google Docs — publish-ready, Grammarly-reviewed, compliance-confirmed
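To give a sense of what a verification audit trail might look like in practice, here is a hypothetical sketch, not Content Ops Lab's actual tooling. It assumes a crude heuristic in which any sentence containing a digit is labeled STAT and everything else CLAIM, then lists statistics that still lack a recorded source and line number.

```python
import re
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class AuditEntry:
    sentence: str
    label: str                          # "STAT" or "CLAIM"
    source: Optional[str] = None        # document the figure was verified against
    source_line: Optional[int] = None   # line number within that source


def label_sentence(sentence: str) -> str:
    """Assumed heuristic: any digit marks the sentence as a statistic."""
    return "STAT" if re.search(r"\d", sentence) else "CLAIM"


def build_audit_trail(sentences: List[str]) -> List[AuditEntry]:
    """Create one unverified audit entry per sentence."""
    return [AuditEntry(s, label_sentence(s)) for s in sentences]


def needs_verification(trail: List[AuditEntry]) -> List[AuditEntry]:
    """Statistics that still have no recorded source or source line."""
    return [e for e in trail
            if e.label == "STAT" and (e.source is None or e.source_line is None)]
```

An article would only move to delivery once `needs_verification` returns an empty list. A real workflow would need a more careful labeling step than a digit check.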

The infrastructure is transferable. In a System Build engagement, your team owns it completely.

Ready to build a content infrastructure that scales without the compliance risk? Get in touch — we’ll assess your current content operation and outline what a systematic approach would look like for your organization.

FAQs About AI Search Content Strategy for Multi-Location Businesses

Can’t we update our existing blog posts to rank in AI search results?

Updating existing posts helps, but it isn’t sufficient on its own. AI search rewards structural formatting, early-page answer placement, and domain-level topical coherence — not keyword refreshes. Most existing archives lack the question-based architecture, tight chunking, and citation verification that AI retrieval layers prioritize. Without a systematic production infrastructure, posts drift back toward thin content as new articles are added without consistent standards.

How long does it take to see AI search citations from a systematic content approach?

AI citation visibility typically builds in Months 2-3 as structured content accumulates and entity signals strengthen. Measurable referral traffic generally appears in the 3-6 month range, with conversion data becoming statistically meaningful around Month 6. Operators who start now are positioned to capture first-mover advantage before competitive intensity increases significantly.

How does a systematic AI search content strategy for multi-location businesses reduce compliance risk in regulated industries?

The risk of hallucination arises when AI generates content without verified research inputs. A research-first workflow addresses this directly: every article begins with verified source material, every statistic is cross-checked with line numbers recorded, and every claim is labeled and traceable before publication. In a 23-month regulated healthcare engagement, this protocol delivered 1,000+ articles and pages with zero compliance violations.

How is AI search content infrastructure different from what a traditional SEO agency provides?

Traditional SEO agencies optimize for rankings — keyword density, link acquisition, and meta tags. AI search content infrastructure optimizes for citation selection: answer-first formatting, structural extractability, entity coherence, citation verification, and multi-platform presence. Most agencies don’t have research-first workflows or governance for regulated industries. The methodology difference is categorical, not incremental.

What does implementing an AI search content strategy for multi-location businesses actually require operationally?

Done-For-You handles research, generation, verification, and optimization end-to-end, which suits operators who need immediate production without allocating internal resources. System Build delivers a 12-week implementation that your team owns completely, with minimal ongoing vendor dependency. Both paths produce systematic, compliant, AI-optimized content at scale.

Key Takeaways

- AI search citation selection is the new performance standard — ranking for many keywords matters less than being one of three to five cited sources when AI constructs a high-value answer

- Answer-first formatting, early-page placement, and tight structural chunking are the primary signals AI retrieval layers use to select citation candidates — most existing content architectures don’t meet this bar

- Volume-first programs without governance create compounding liability: thin pages that compete with each other, dilute domain authority, and qualify for the lowest-quality ratings in Google’s evaluation framework

- A 23-month regulated healthcare production engagement produced an AI search CVR of 20-32% against a 3.32% site baseline — confirming that verified, structured content systematically outperforms generic organic traffic on conversion

- The first-mover window for AI citation dominance is measured in quarters, not years — citation patterns show compounding dynamics that reward early entrants and make displacement progressively harder

- Done-For-You and System Build both deliver the same production infrastructure — the choice is an operational fit question based on internal capacity and timeline

Build Content Infrastructure That Compounds: AI Search Content Strategy for Multi-Location Businesses

Zero-click search has grown 13 percentage points in a single year. The presence of an AI Overview correlates with a 58% lower click-through rate for the top-ranking page. The operators winning in this environment aren’t publishing more; they’re publishing in a way that AI systems can retrieve, parse, and cite.

Content Ops Lab built this infrastructure inside a regulated healthcare operation at scale: 1,000+ articles, zero compliance violations, AI search converting at 6.4x the site baseline. The first-mover window for AI search content strategy for multi-location businesses is still open — but the citation radius is tightening.

The organizations that build now build a compounding advantage. The organizations that wait inherit a remediation project.

Related: Done-for-You vs In-House Content Systems – Which Scales for Multi-Location Brands?