Ranking vs Being Cited: What Actually Drives Visibility in AI Search?

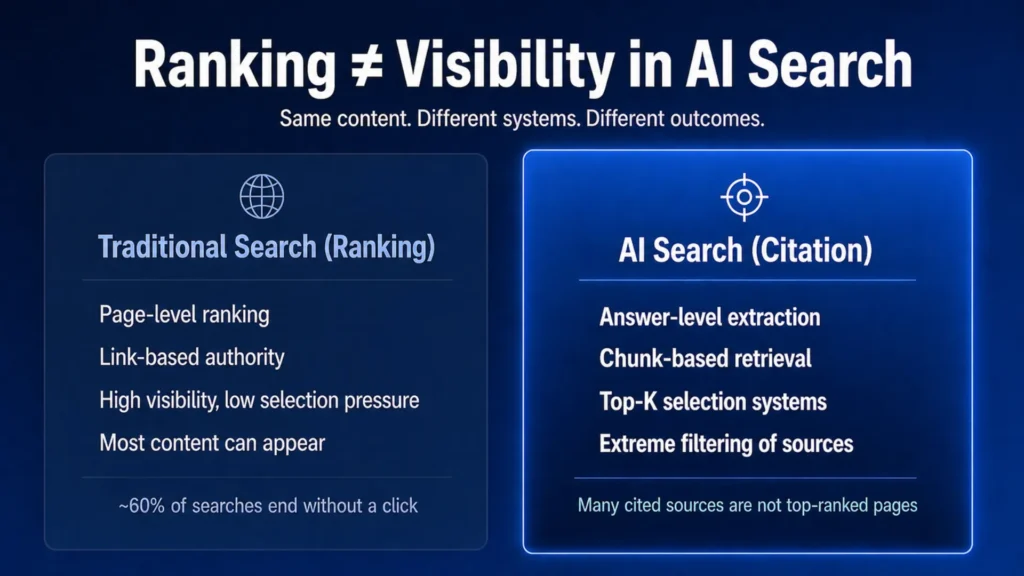

The difference between ranking and being cited comes down to which system is selecting your content, and the two operate on completely different logic. Traditional search rewards page-level authority signals accumulated over time. AI answer engines retrieve at the passage level, selecting sources based on extractability, answer structure, and citation credibility, independent of where those pages rank.

The proportion of consumers using AI to find local business recommendations has climbed from 6% in 2025 to 45% today, according to BrightLocal. For multi-location operators in healthcare, legal, and home services, that shift means your highest-intent buyers are researching inside systems that may never surface your content — regardless of your Google position.

Content Ops Lab built its citation infrastructure within a 12-location regulated healthcare organization over 23 months, with AI search traffic converting at an average of 21.4% — 6.4x the site baseline.

Related: AI Search vs Google Search – What Multi-Location Operators Need to Know

Why Doesn’t Ranking #1 in Google Mean You Get Cited by AI?

A first-place Google ranking and an appearance in an AI-generated answer are produced by two fundamentally different systems. Google scores pages. AI systems score passages. A page can rank in position one and still be invisible to every AI answer engine if its content isn’t structured for extraction.

How Google’s Page-Level Ranking Works

Google says its systems are “designed to work on the page level, using a variety of signals and systems to understand how to rank individual pages.” What ranks well is what has accumulated the most authority signals over time, not what is most efficiently structured for direct answer extraction.

- Page-level signals: backlinks, domain authority, on-page relevance

- Crawl, index, and serve: Google’s three-stage pipeline

- Traditional SEO optimizes for page scoring, not passage extraction

- Ranking position reflects page authority within this pipeline

How AI Systems Retrieve at the Passage Level

AI answer engines break content into chunks of a few hundred tokens, score each independently, and retrieve only the top-scoring passages into the context window. Anthropic describes this as breaking content “into smaller chunks of text, usually no more than a few hundred tokens,” then adding “the top-K chunks to the prompt to generate the response” — Anthropic.

- Chunk-level scoring: each passage competes independently

- Top-K retrieval: only 3-20 passages typically enter the context window

- Dense paragraphs, buried answers, and weak headings reduce chunk scores

- Answer-first formatting increases passage-level retrievability
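
The chunk-then-retrieve pattern described above can be sketched in a few lines. This is a minimal illustration, assuming word counts stand in for tokens and simple term overlap stands in for the embedding or reranker scores a production system would use. The function names (`chunk_words`, `score`, `top_k`) are hypothetical and do not represent any engine’s actual pipeline.

```python
import re


def chunk_words(text: str, max_words: int = 60) -> list[str]:
    """Split text into fixed-size chunks of at most max_words words."""
    words = text.split()
    return [" ".join(words[i:i + max_words]) for i in range(0, len(words), max_words)]


def tokenize(text: str) -> list[str]:
    """Lowercase the text and keep alphanumeric terms only."""
    return re.findall(r"[a-z0-9]+", text.lower())


def score(query: str, chunk: str) -> float:
    """Toy relevance score: fraction of query terms that appear in the chunk."""
    query_terms = set(tokenize(query))
    if not query_terms:
        return 0.0
    chunk_terms = set(tokenize(chunk))
    return len(query_terms & chunk_terms) / len(query_terms)


def top_k(query: str, chunks: list[str], k: int = 3) -> list[str]:
    """Return the k highest-scoring chunks; only these would reach the prompt."""
    ranked = sorted(chunks, key=lambda c: score(query, c), reverse=True)
    return ranked[:k]
```

Each chunk is scored on its own, so a strong answer buried in a chunk full of unrelated text competes at a disadvantage against a short, focused passage.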

The 44% Gap No One Is Measuring

The relationship between ranking and being cited is partial, not total. An analysis of 362,000 keywords by seoClarity found that 94% showed at least one overlap between AI Overview citations and the top 20 organic results, yet 44% of AI Overview citations came from URLs outside the top 20. These are separate competitions requiring separate strategies.

- 40% of AI-cited pages don’t rank in the top 10 Google results (Search Engine Land/Authoritas)

- 52% of AI Overview citations come from outside the top 100 organic results (Originality.ai)

- AI citation volatility is higher than organic ranking volatility

- Citation selection and organic ranking are related but independent systems

What Has Actually Changed About How Buyers Research Multi-Location Services?

The buyer research journey for high-value services has fundamentally shifted. Fewer users click through to websites after a search — more get their answer from an AI system that consolidates multiple sources before returning a recommendation.

Zero-Click Growth and What It Means for Organic Traffic

Zero-click search was already significant before AI Overviews. Since Google introduced them in May 2024, zero-click search grew 13 percentage points, from 56% to 69% by May 2025 — Similarweb via 4eBusinessMediaGroup. The pages still earning clicks are those appearing inside AI answers as cited sources.

- Organic traffic dropped from 2.3 billion peak visits to under 1.7 billion

- BrightEdge: AI Overviews now trigger on nearly half of all tracked queries

- Seer Interactive: organic results saw a 70% CTR drop in AI Overview environments

- Paid search dropped 12% CTR in the same environments

AI as Intent Filter for High-Value Queries

AI systems aren’t replacing all search — they’re intercepting the research phase. Nielsen Norman Group confirms generative AI “offers substantial shortcuts around the often tedious and time-consuming work required to research a topic” — Nielsen Norman Group. The users most likely to convert are now spending their research time inside AI systems, not on your website.

- Informational and comparative queries: increasingly answered by AI without a site visit

- Transactional queries (book appointment, find location): still routing through traditional search

- AI-referred traffic arrives pre-qualified — research phase completed before landing

- Session durations and conversion rates on AI-referred traffic consistently exceed organic averages

The Local Search Fragmentation Problem

Multi-location organizations face a structural disadvantage in AI citation selection. Fragmented location signals push AI engines toward national directories instead of first-party pages.

- Weak entity mapping: inconsistent NAP and schema prevent confident location-entity association

- Duplicate local pages: AI retrieval down-ranks near-identical “city + service” content

- Fragmented authority: links and reviews dispersed across subdomains dilute domain-level signals

- AI Overviews appeared for 68% of test local queries, local packs for only 39% (Search Engine Land)
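
One way to spot the inconsistent NAP (name, address, phone) signals mentioned above is to normalize each listing and compare it against the first-party page. The sketch below assumes 10-digit US phone numbers and a hypothetical `listings` dictionary keyed by source name, with `"website"` as the reference. It does not expand abbreviations such as "St" versus "Street", so those surface as mismatches to review.

```python
import re


def normalize_phone(phone: str) -> str:
    """Keep digits only, then the last 10 (assumes US-style numbers)."""
    digits = re.sub(r"\D", "", phone)
    return digits[-10:]


def normalize_text(value: str) -> str:
    """Lowercase, drop punctuation, and collapse whitespace."""
    cleaned = re.sub(r"[^a-z0-9 ]", " ", value.lower())
    return " ".join(cleaned.split())


def nap_key(listing: dict) -> tuple[str, str, str]:
    """Build a comparable (name, address, phone) key for one listing."""
    return (
        normalize_text(listing["name"]),
        normalize_text(listing["address"]),
        normalize_phone(listing["phone"]),
    )


def inconsistent_sources(listings: dict[str, dict]) -> list[str]:
    """Return sources whose NAP differs from the first-party 'website' listing."""
    reference = nap_key(listings["website"])
    return sorted(src for src, listing in listings.items() if nap_key(listing) != reference)
```

Running this per location gives a short list of directories to correct before expecting AI engines to associate a location with the right entity.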

What Type of Content Ranks But Never Gets Cited?

Understanding the ranking vs being cited gap means identifying the structural characteristics that help content rank while simultaneously preventing AI extraction. The content most at risk was built for a retrieval system that no longer fully controls the buyer journey.

Keyword-First Architecture and Extractability Failure

Traditional SEO content is engineered for page-level keyword signals: broad coverage, distributed density, and long-form structure that builds toward conclusions. AI RAG systems need the most useful answer in the first passage scored, not buried in section four.

- AI systems disproportionately extract from the opening sections of pages

- Slow-build introductions consume context window space before reaching the answer

- Dense paragraph blocks without headings prevent clean chunk boundaries

- Broad topical coverage reduces passage-level relevance scores on any single query

Dense Paragraphs and Buried Answers

Content structure determines whether AI systems can surface the most useful passage. When answers are embedded within long paragraphs without clear headings, the chunks containing them consistently score lower.

- Simple AI chunkers split content at fixed token counts rather than semantic boundaries

- Pages with no clear formatting or hierarchy fail at both AI retrieval and user comprehension

- Nielsen Norman Group: users struggle to scan and extract from unstructured responses

- An optimal chunk balances sufficient context with minimal irrelevant noise

Duplicate Local Pages and AI Down-Ranking

For multi-location operators, the most common citation failure point is duplicate location pages. Near-identical “city + service” pages are considered low-value by both Google’s quality assessment and AI retrieval systems.

- Google’s Quality Rater Guidelines flag “auto-generated content with no editing or manual curation” as the lowest quality

- AI systems that inherit these quality signals are reluctant to cite templated location content

- Weak specificity at the passage level makes location pages poor candidates for top-K retrieval

- Each duplicate reduces the probability that any single page earns the citation slot
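
Near-identical location pages can be flagged before publication with a basic similarity check. The sketch below uses Jaccard similarity over three-word shingles, which is one common technique and not necessarily what Google or any AI engine applies. The `pages` mapping and the 0.5 threshold are assumptions chosen for illustration.

```python
import re
from itertools import combinations


def shingles(text: str, size: int = 3) -> set[tuple[str, ...]]:
    """Return the set of overlapping word n-grams ('shingles') in the text."""
    words = re.findall(r"[a-z0-9]+", text.lower())
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}


def jaccard(a: set, b: set) -> float:
    """Jaccard similarity: shared shingles divided by all distinct shingles."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def near_duplicates(pages: dict[str, str], threshold: float = 0.5) -> list[tuple[str, str, float]]:
    """Return page pairs whose shingle overlap meets or exceeds the threshold."""
    sets = {url: shingles(body) for url, body in pages.items()}
    flagged = []
    for left, right in combinations(sorted(sets), 2):
        similarity = jaccard(sets[left], sets[right])
        if similarity >= threshold:
            flagged.append((left, right, round(similarity, 2)))
    return flagged
```

Pairs that score high are candidates for consolidation or for adding location-specific detail that makes each page distinct at the passage level.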

If your content is ranking but not being cited — or if your location pages aren’t appearing in AI answers for your highest-value service queries — Content Ops Lab builds the infrastructure to close that gap. Contact us to assess your current content architecture.

What Does Content That Gets Cited Actually Look Like?

AI citation selection rewards specific structural characteristics distinct from traditional SEO signals. Building those characteristics into production at scale is the core competency separating citation-optimized content from content that merely ranks.

Answer-First Formatting and the 44% Positional Bias

AI systems extract disproportionately from the opening content of any page or passage. An analysis of 1.2 million ChatGPT citations found 44% came from the first 30% of content — ALM Corp. Every article, every H2 section, every FAQ answer needs the most useful content first.

- Lead with a direct, 40-60-word answer before any setup or context

- Place the most extractable content in the first passage of every section

- Use H2 and H3 headers that mirror conversational query patterns

- The answer-first structure serves AI retrieval and human readability simultaneously
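
The 40-60-word guideline above is easy to audit automatically. The following sketch assumes each section is available as plain text with paragraphs separated by blank lines, and that `sections` is a hypothetical mapping of heading to body text. It checks length only, not whether the opening paragraph actually answers the question.

```python
def first_paragraph(section_body: str) -> str:
    """Return the first non-empty paragraph (paragraphs are split on blank lines)."""
    for paragraph in section_body.split("\n\n"):
        if paragraph.strip():
            return paragraph.strip()
    return ""


def answer_length_ok(section_body: str, low: int = 40, high: int = 60) -> bool:
    """True when the opening paragraph falls inside the target word range."""
    word_count = len(first_paragraph(section_body).split())
    return low <= word_count <= high


def audit(sections: dict[str, str]) -> dict[str, int]:
    """Map each heading whose opening paragraph misses the range to its word count."""
    report = {}
    for heading, body in sections.items():
        if not answer_length_ok(body):
            report[heading] = len(first_paragraph(body).split())
    return report
```

An empty report means every section opens with a paragraph inside the target range; anything listed is worth a manual look.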

Structured, Source-Backed, and Chunking-Ready

Content that consistently earns AI citations shares three structural characteristics: clean heading hierarchy, bullet-heavy formatting (40-60%), and verified source citations that signal factual density to AI reranking systems.

- Clean H2/H3 hierarchy: creates semantic chunk boundaries for RAG pipelines

- 40-60% bullet ratio: enables AI parsing without sacrificing substantive depth

- Verified citations in-text: sourced statistical claims increase citation confidence

- Short, defined answers per section: reduces noise-to-signal ratio in chunk scoring
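
The bullet ratio can be measured rather than estimated. The article does not define the ratio precisely, so this sketch assumes it means the share of words that sit on bullet lines (lines beginning with "- " or "* "); a line-count definition would work equally well with a small change.

```python
def bullet_ratio(text: str) -> float:
    """Share of words on lines starting with '- ' or '* ' (assumed definition)."""
    bullet_words = 0
    total_words = 0
    for line in text.splitlines():
        stripped = line.strip()
        if not stripped:
            continue  # blank lines carry no words
        is_bullet = stripped.startswith(("- ", "* "))
        content = stripped[2:] if is_bullet else stripped
        count = len(content.split())
        total_words += count
        if is_bullet:
            bullet_words += count
    return bullet_words / total_words if total_words else 0.0
```

A result between 0.4 and 0.6 would fall inside the range named above.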

Citation Verification as a Credibility Signal

AI answer engines favor authoritative sources, and that authority is increasingly determined by citation stability over time. BrightEdge data shows that the top 1% of domains capture 64% of all AI citations, while only 0.4% of domains gain new citations in any given week.

- Fabricated or hallucinated citations signal low credibility to AI reranking systems

- Verified, sourced claims increase passage-level confidence scores

- Compliance-grade citation standards align directly with AI citation credibility requirements

- Citation verification infrastructure is a prerequisite for systematic AI search performance

Related: How AI Search Engines Decide Which Sources to Cite

How Does Early AI Citation Performance Compound Over Time?

AI citation behavior isn’t a level playing field that resets weekly. A small number of domains capture the vast majority of citations — and that concentration is growing tighter, not redistributing to new entrants.

Citation Concentration in a Small Domain Set

BrightEdge’s week-to-week analysis documents extreme concentration: the top 1% of domains capture 64% of all citations, the top 5% capture 78%, and the top 10% capture 84%. Only 0.4% of domains gained new citations in a given week — BrightEdge.

Multi-location operators not currently appearing in AI answers face an increasingly difficult barrier to entry as the pool consolidates.

- Citation distribution is consolidating, not expanding to new entrants

- When changes occur, they are overwhelmingly losses — not new entrants gaining share

- AI engines are tightening citation radius: more selective, not more exploratory

- Insidea’s ChatGPT analysis: top 50 domains account for nearly 48% of all citations
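
Concentration figures like these can be reproduced for any citation dataset you collect yourself. The sketch below computes the share of citations held by the top fraction of domains; the `citations_by_domain` mapping is hypothetical, and the counts in the usage example are made up for illustration, not BrightEdge data.

```python
import math


def top_share(citations_by_domain: dict[str, int], top_fraction: float) -> float:
    """Share of all citations captured by the top `top_fraction` of domains."""
    counts = sorted(citations_by_domain.values(), reverse=True)
    total = sum(counts)
    if total == 0:
        return 0.0
    # Round before ceil so float noise (e.g. 3.0000000000000004) does not add a domain.
    top_n = max(1, math.ceil(round(len(counts) * top_fraction, 9)))
    return sum(counts[:top_n]) / total


# Illustrative counts only: 10 domains, 100 citations in total.
sample = {f"d{i}": c for i, c in enumerate([60, 20, 5, 5, 2, 2, 2, 2, 1, 1])}
top_10_percent = top_share(sample, 0.10)
top_20_percent = top_share(sample, 0.20)
```

Tracking this number over successive weeks shows whether the citation pool in your category is tightening or opening up.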

The Feedback Loop That Locks In Early Movers

Domains that are frequently cited gain increased retrieval probability, more brand familiarity signals, and more off-page references — all reinforcing the authority signals that drive future citation selection. The window for establishing early-mover positions is measured in quarters, not years.

- Frequent citation → higher retrieval probability in future queries

- Off-page brand signals grow as AI-referred traffic increases site engagement

- BrightEdge: 70x volatility gap between frequently cited and rarely cited domains

- Early citation dominance is structurally self-reinforcing

What the Production Data Shows

A 23-month engagement inside a 12-location regulated healthcare organization demonstrates the feedback loop in practice. AI search traffic — under 0.3% of total sessions — converted at 21.4% average versus a 3.32% site baseline: a 6.4x performance multiplier. ChatGPT sessions grew 887% in 7 months, with peak conversion rates reaching 40%.

- AI-referred traffic arrives pre-qualified — research phase completed before landing

- Session duration 2:30-4:20 vs. 1:30-2:55 site average

- Conversion rate trajectory increased month-over-month as citation volume grew

- <0.3% of total traffic delivering disproportionate conversion share
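
The multipliers quoted above follow from simple arithmetic. This short sketch shows the calculation, assuming "grew 887%" means an increase relative to the starting value; the helper names are illustrative.

```python
def conversion_rate(conversions: int, sessions: int) -> float:
    """Conversion rate as a percentage of sessions."""
    return 100.0 * conversions / sessions if sessions else 0.0


def lift(channel_rate: float, baseline_rate: float) -> float:
    """How many times the baseline rate a channel converts at."""
    return channel_rate / baseline_rate


def growth_multiple(percent_growth: float) -> float:
    """Convert 'grew N%' into an end-over-start multiple."""
    return 1 + percent_growth / 100
```

With the figures above, `lift(21.4, 3.32)` is roughly 6.4, and 887% growth corresponds to sessions ending at about 9.9 times their starting level.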

How Should a VP of Marketing Rebalance the Measurement Framework?

Standard measurement infrastructure was built for a different search environment. Position tracking and GA4 session counts don’t surface the ranking vs being cited gap, and they actively misrepresent the scale of AI-referred traffic.

Four Distinct KPIs for the Modern Search Environment

Marketing leaders need four separate measurement tracks — not a unified organic traffic report that conflates all search-derived visits.

- Ranking visibility: position tracking in classic organic and local pack — necessary but no longer sufficient

- Citation visibility: frequency of citations in AI answers across ChatGPT, Perplexity, Gemini, and Google AI Mode

- Traffic volume: session counts with GA4 under-attribution acknowledged

- Conversion and assisted impact: leads where AI engines influenced the research phase, even if the final touch is organic or direct

Why GA4 Is Undercounting Your AI Traffic

When an AI platform sends a referrer header, GA4 records it under the generic Referral category. When the referrer is stripped, GA4 records the visit as Direct. In one 2026 dataset, 70.6% of AI-adjacent visits arrived with no referrer — MO Agency. One server log analysis found that GA4 captured only 9% of actual Gemini iOS visits.

- Google Search Console conflates AI Overview impressions with standard organic impressions

- Total AI traffic volume is systematically undercounted in current reporting

- Standard referral reports showing minimal AI sessions reflect attribution failure, not channel performance

- The decision to invest in citation infrastructure shouldn’t be made on data capturing less than 10% of the channel
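
The attribution problem above can be illustrated with a small classifier applied to raw referrer values, for example from server logs. This is a sketch of the bucketing logic, not GA4’s own implementation, and the hostname list is an illustrative assumption rather than an exhaustive or official one.

```python
from typing import Optional
from urllib.parse import urlparse

# Illustrative hostnames only; an assumption, not an exhaustive or official list.
AI_REFERRER_HOSTS = {
    "chatgpt.com",
    "perplexity.ai",
    "gemini.google.com",
    "copilot.microsoft.com",
    "claude.ai",
}


def classify_referrer(referrer: Optional[str]) -> str:
    """Bucket a session as 'ai', 'referral', or 'direct' from its referrer URL."""
    if not referrer:
        # A stripped referrer is indistinguishable from a typed-in visit.
        return "direct"
    host = (urlparse(referrer).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    if host in AI_REFERRER_HOSTS or any(host.endswith("." + h) for h in AI_REFERRER_HOSTS):
        return "ai"
    return "referral" if host else "direct"
```

Any AI-originated visit that arrives without a referrer still lands in the "direct" bucket here, which is exactly the undercounting described above; closing that gap needs other signals such as landing-page patterns or dedicated visibility tools.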

Building the Business Case for Citation Infrastructure

The measurement gap creates a parallel communication challenge: making the case for citation-optimized content when standard reporting doesn’t expose the full return. The strongest business cases combine AI referral data, citation visibility from dedicated tools, and CVR benchmarks.

- Dedicated AI visibility tools (Profound, Surfer AI Tracker, Similarweb Rank Tracker) expose citation share independent of traffic volume

- AI Overview tracking shows which queries trigger AI answers — and whether your domain appears

- Even partial AI traffic data with above-average CVR demonstrates the channel’s per-visit value

- Citation infrastructure built now appreciates as the channel grows and competition intensifies

How Content Ops Lab Builds Content Infrastructure

A 12-location regulated healthcare organization produced 1,000+ citation-verified articles over 23 months with zero compliance violations — while AI search traffic converted at 21.4% average, 6.4x the site baseline. That production test is the foundation of the Content Ops Lab methodology.

- 23-month production engagement inside a 12-location regulated healthcare organization

- 1,000+ citation-verified articles and pages delivered with zero compliance violations

- 45% of all leads from organic search — outperforming paid search nearly 2:1

- AI search converting at 21.4% average vs. 3.32% site baseline — 6.4x performance multiplier

- 653% impression growth and 1,700% click growth for an emerging brand in 14 months

- 5x production scale: 10 articles/month to 50+ without adding headcount

- 887% AI platform session growth in 7 months across tracked channels

- Dual-brand methodology: proven on both mature brand maintenance and emerging brand growth

The Content Ops Lab Production System

Every engagement runs through the same four-stage infrastructure — research-first, verification before generation, and multi-platform optimization built into the workflow from the start.

- Research: Verified sources identified and documented before any generation begins

- Verification: Line-by-line citation cross-check, STAT vs CLAIM labeling, full audit trail

- Optimization: Multi-platform targeting — Google, ChatGPT, Perplexity, Claude, and Gemini simultaneously

- Delivery: WordPress staging or Google Docs — publish-ready, compliance-reviewed, and complete

The gap between ranking and being cited closes when the same infrastructure that produces compliant, verifiable content also produces content structured for passage-level extraction across every AI engine simultaneously.

Ready to close the gap between ranking and being cited? Get in touch today — we’ll assess your current content architecture and outline what citation infrastructure would look like for your organization.

FAQs About Ranking vs Being Cited

If we’re already ranking on page one, why does ranking vs being cited require a separate strategy?

Page-one rankings and AI citations are produced by independent systems. Data from seoClarity shows 44% of AI Overview citations come from URLs outside the top 20 organic results. Ranking remains necessary for broad organic visibility, but it doesn’t guarantee citation in the AI answer layer where pre-qualified, high-intent buyers do their research. These are separate competitions with separate optimization requirements.

How long does it take for citation-optimized content to start appearing in AI answers?

In production environments with consistent output (20-50+ articles/month), early AI citation signals typically appear within 3-6 months, with compounding effects building over 6-12 months. BrightEdge data shows AI citation pools are consolidating rather than expanding — the earlier citation infrastructure is established, the stronger the compounding advantage becomes.

How does ranking vs being cited affect compliance strategy in regulated industries like healthcare or legal?

Answer-first formatting and compliance are fully compatible. When every claim traces to a verified, line-numbered source, fabricated statistics and hallucinated citations are eliminated by design. In 23 months of production across a 12-location regulated healthcare organization, this methodology produced 1,000+ articles with zero compliance violations.

How is optimizing for AI citations different from traditional SEO or featured snippet optimization?

Featured snippet and AI citation optimization share some surface characteristics: both reward answer-first formatting. The difference is that AI citations are selected at the passage level by RAG pipelines operating across multiple engines simultaneously, with authority filters that go beyond page-level signals. The top 1% of domains capture 64% of citations across engines. Passage-level structure, verified source attribution, and multi-platform formatting are all required.

Is a Done-For-You approach or a System Build better for closing the ranking vs being cited gap?

Done-For-You delivers citation-optimized content immediately — production begins within weeks. System Build transfers the infrastructure to your team over 12 weeks with 90 days of post-launch support, and is the stronger long-term investment for organizations that want full ownership. For brands with an urgent first-mover window and no internal content infrastructure, Done-For-You closes the gap faster.

Key Takeaways

- Ranking in Google and being cited by AI systems are independent outcomes: about 40% of AI-cited pages don’t rank in the top 10 organic results, and 44% of AI Overview citations come from outside the top 20

- AI answer engines retrieve at the passage level — answer-first formatting, clean heading structure, and 40-60% bullet ratio are citation prerequisites

- Zero-click search reached 69% of Google queries by May 2025, and AI-referred traffic converts at 6.4x the site baseline in production environments

- Citation concentration is tightening: the top 1% of domains capture 64% of all AI citations, and only 0.4% of domains gain new citations weekly — the first-mover window is measured in quarters

- GA4 systematically undercounts AI traffic (one server-log study found GA4 captured only 9% of actual Gemini iOS visits), meaning standard reporting masks the scale of the opportunity

- Multi-location operators face a structural citation disadvantage from duplicate location pages, fragmented authority, and weak entity signals — all addressable through systematic content infrastructure

- Content that earns AI citations requires verified source attribution, passage-level extractability, and consistent production volume — not just keyword optimization

Why Ranking vs Being Cited Requires Systems, Not Shortcuts

The gap between ranking and being cited isn’t a content quality problem — it’s an infrastructure problem. AI systems select citations based on passage-level structure, verified authority, and answer-first formatting at a scale that requires systematic production rather than one-off optimization. The proportion of consumers using AI for local business recommendations climbed from 6% to 45% in under a year — BrightLocal.

Operators who build citation infrastructure before their category consolidates will hold those positions at lower marginal cost than those who enter after the competitive window narrows. Content Ops Lab’s methodology was built and tested in exactly that environment — 23 months of production in a regulated industry, producing content that ranks in traditional search and earns AI citations simultaneously.

The system exists. The question is whether you build it before or after your competitors do.

Related: How Do You Measure Success in AI Search Beyond Rankings and Traffic?