What Is the AI Search Content Gap for Multi-Location Businesses?

The AI search content gap is the disconnect between content that ranks in Google and content that gets cited by AI search systems — and for multi-location businesses, it’s wider than it appears. Being indexed creates eligibility. It doesn’t create citations. AI systems like ChatGPT, Perplexity, and Google AI Overviews run a separate selection process — filtering hundreds of thousands of indexed pages down to 3–8 visible sources per answer.

Across 57,000+ URLs analyzed, researchers found an undeniable pattern: the pages cited in AI Overviews are significantly denser with information than those ignored — Surfer. For operators managing 5–15 locations, the gap compounds at scale — thin location pages, fragmented entity signals, and unverified content all widen it.

Content Ops Lab’s production methodology was developed inside a 23-month regulated-industry deployment specifically to close it.

Related: The AI Citation Economy – Why Visibility Matters More Than Rankings

Why Does Ranking on Google No Longer Guarantee Visibility in AI Search?

Ranking on Google and being cited by AI search are two different outcomes with two different requirements. Google confirms that to be eligible as a supporting link in AI Overviews or AI Mode, a page must be indexed and snippet-eligible — and that “there are no additional technical requirements” — Google Search Central. Eligibility and selection are entirely separate problems. Most indexed pages qualify in principle and are never chosen.

The Indexed-vs-Cited Gap

Indexing is the floor, not the ceiling. The distinction that matters is whether a page is citation-worthy — not just crawlable.

- AI systems retrieve a candidate set, rank for trust and relevance, then select a small subset

- Google’s AI Overviews trigger on a minority of queries — one study found just 7.47% of searches produce them SE Ranking

- Of those that do trigger, the average visible pre-click citation count is 2.5 links

- Being indexed puts you in the pool; being citation-worthy gets you into the answer

Traditional SEO optimizes for ranking. AI search optimization requires a different standard: content that is structured, verified, and sufficiently extractable to survive a multi-stage selection filter.

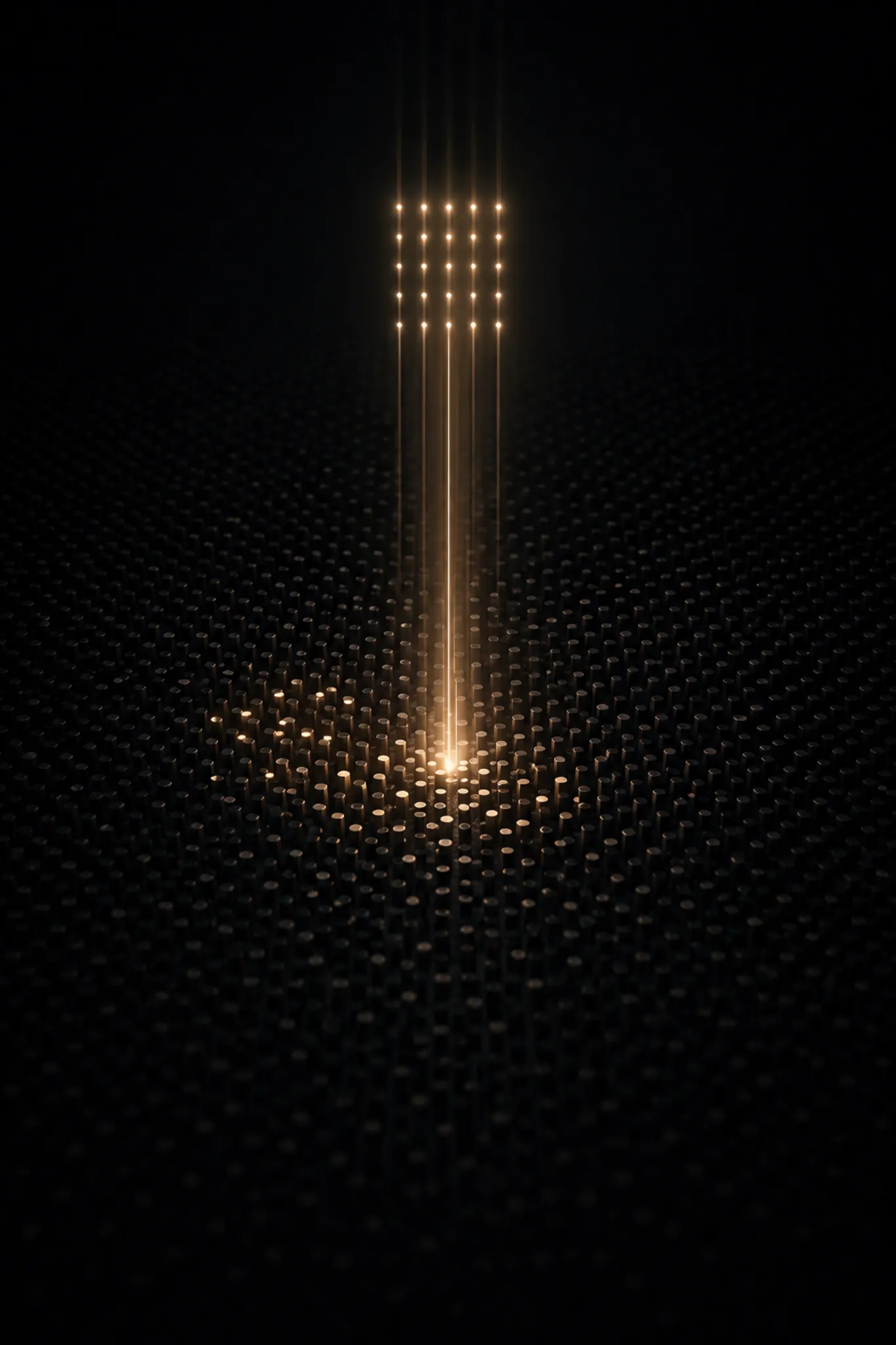

Citation Slot Scarcity

The math is straightforward, and it doesn’t favor generic content.

- Google’s index holds approximately 400 billion documents

- Competitive queries in healthcare, legal, and home services see tens of thousands of relevant pages

- AI Overviews typically expose 3–8 sources in the initial view, with a maximum of low double digits after expansion

- 72% of AI Overview instances link to just 1–4 domains from the top 10 organic results

That compression — from hundreds of thousands of indexed pages down to a handful of visible citations — is a selection problem, and most content wasn’t built to compete at that level.

How Similar Content Collapses Into One Answer

AI systems are designed to synthesize, not surface. That design creates a specific failure mode for operators publishing location-scaled content.

- Google AI scans sources, identifies shared facts and consistent explanations, then compresses them into a single structured summary

- Pages that repeat the same general information as competitors confirm the consensus, but don’t earn a citation slot

- Once the system has enough sources to validate a fact pattern, additional confirming pages are structurally redundant

- Differentiated, verifiable, information-dense content is the only way to avoid the consensus collapse

A strategy built on volume and topical coverage no longer produces proportional visibility. AI answers one question at a time, then moves on.

What Makes Most Multi-Location Content Structurally Invisible to AI Systems?

Most content published by multi-location businesses was built for a different search environment — one where topical coverage and keyword presence were sufficient. Three structural failure modes account for the majority of the gap.

Thin Location Page Failure

Location pages are the most common content liability in multi-location operations. Google’s own Helpful Content guidance explicitly flags “mainly summarizing what others have to say without adding much value” as a pattern its systems are designed to identify and deprioritize — Google Search Central.

- Service-area pages with 80%+ boilerplate content are technically indexed but structurally thin

- AI systems favor information-dense pages; thin location pages offer nothing the system can’t source elsewhere

- Location pages need unique value signals: local reviews, neighborhood references, location-specific service data

- Pages built on swapped-out keywords meet the thin-content description exactly

Entity Signal Fragmentation

Multi-location businesses carry a compounding liability single-location operators don’t face: entity clarity at scale.

- Each location must read as a distinct, well-modeled entity — not a variant of a corporate template

- NAP inconsistencies across GBP profiles, location pages, and directory listings fragment the entity signal

- Misaligned URLs, inconsistent service coverage descriptions, and review fragmentation reduce AI confidence in the entity

- Every location needs its own clean, consistent, cross-linked presence — not a copy of the corporate page with a different zip code

YMYL Trust Penalties

Healthcare, legal, and financial service operators face a structural disadvantage that goes beyond content quality.

- AI systems apply heightened trust standards to YMYL topics — categories where misinformation carries real-world consequences

- In YMYL categories, AI citation patterns heavily favor institutionally trusted domains: NIH, major medical centers, government sites, and established legal publishers

- BrightEdge’s 16-month study found healthcare AI Overview citations overlap with organic rankings at 75.3% — Google overwhelmingly prefers already-ranked, high-trust sources in this category — BrightEdge

- Meeting the YMYL citation bar requires verified claims, credible sourcing, and a content architecture designed to signal authority

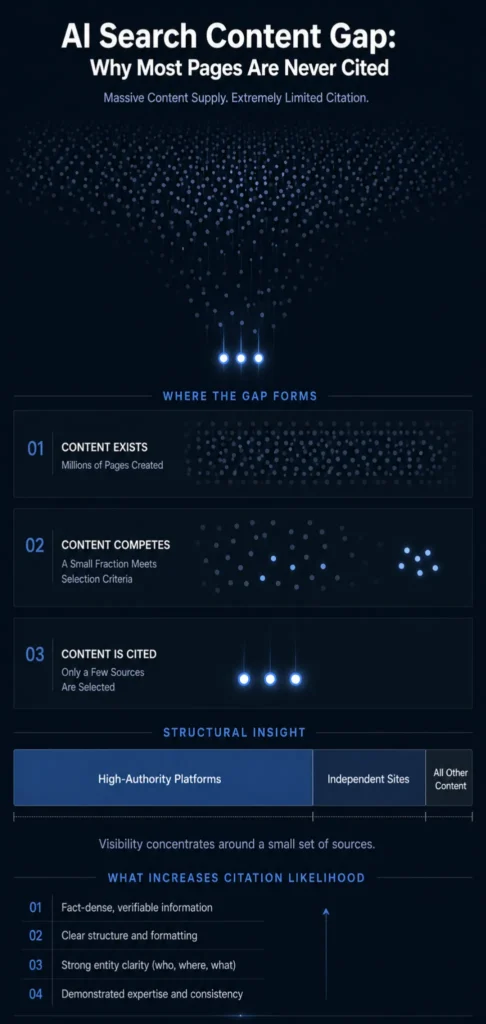

What Does the AI Citation Landscape Actually Look Like?

The competitive reality of AI citations is more concentrated than most operators realize. Understanding the actual distribution of citation share clarifies why the content gap is structural — and why incremental improvements to existing content strategies won’t close it.

Domain Concentration Reality

Surfer’s AI Citation Report — analyzing 36 million AI Overviews and 46 million citations between March and August 2025 — found that across nearly every industry, three domains dominate AI’s citations: YouTube (~23.3%), Wikipedia (~18.4%), and Google.com (~16.4%) — Surfer. Nearly 30% of all Google AI Overview citations go to the top 50 domains on the search engine.

- AI citation share is heavily concentrated in a small set of high-authority domains

- In YMYL verticals, institutional publishers hold structural advantages that volume won’t overcome

- Most mid-market multi-location operators are competing for the remaining 10-20% of citation share

- That remaining share is where systematic, information-dense, citation-ready content can win

How AI Selects Its Sources

AI systems don’t select sources solely on ranking. The selection process is multi-stage, and each stage filters in ways that differ from traditional search.

- Retrieval stage: AI issues multiple related queries, pulls a candidate set, and ranks for relevance and trust

- Quality assessment: Content evaluated for information density, structural extractability, authorship credibility, and citation backing

- Reranking: Engagement signals, domain authority, and content structure influence final selection

- Only the highest-scoring pages from the reranked set appear in the visible answer

For Perplexity specifically, the process is described as a “Citation Gauntlet Model” — binary, not graduated. Content either passes all selection gates and earns a citation, or it’s invisible.

Where Multi-Location Brands Stand

Most location-level content is thin, boilerplate-heavy, and structurally indistinguishable from competitors. Without verifiable claims, answer-first formatting, and structured extraction signals, content can’t compete in the reranking stage.

- Most multi-location competitors haven’t addressed this gap either

- The window to build citation authority before the category becomes fully competitive is still open — but narrowing

- The operators who move first establish citation patterns that AI systems reinforce over time

If your operation needs to produce 20-50+ articles per month without sacrificing compliance or quality, Content Ops Lab builds the infrastructure to make that possible. Contact us to discuss your content production requirements.

What Does Citation-Ready Content Actually Require?

Closing the AI search content gap isn’t a writing problem. It’s an infrastructure problem. Citation-ready content has specific structural requirements that generic SEO content doesn’t meet.

Information Density Over Word Count

The most important finding from large-scale AI citation research: AI systems reward facts, not length.

- Surfer’s analysis of 57,000+ URLs found that cited pages are significantly denser with information than those ignored — the answer is “more facts,” not more words — Surfer

- Information density means original data, explicit sourcing, specific statistics, and verifiable claims

- Generic long-form content that summarizes existing positions without adding original analysis doesn’t meet the density threshold

- More thin content produces no incremental citation share — the research is consistent on this point

Extractable Structure

Structure is a citation requirement, not an aesthetic preference. Google’s AI features documentation explicitly advises creators to ensure important content is available in textual form and that structured data aligns with on-page text.

- Answer-first formatting — direct responses to the H2 question in 40-60 words — provides the citation-ready snippet AI systems can surface

- 40-60% bullet-heavy content enables parsing across platforms; dense paragraph blocks resist extraction

- FAQ sections with question-form H3S and direct answers are among the highest-cited structural patterns in AI Overview research

- Alignment between visible content, structural markup, and semantic clarity is what makes a page machine-readable at the citation scale

Verification as a Trust Signal

Content that can’t be verified can’t be trusted by AI systems designed to avoid hallucinations — particularly in YMYL categories. Stanford HAI research found that legal hallucinations occurred at rates of 69–88% in general-purpose AI models responding to legal queries — Stanford HAI.

- AI search systems are incentivized to select sources that are maximally factual and auditable

- Vague, promotional, or derivative content offers no protection against hallucination and is structurally deprioritized

- Verified content means every statistic is traced to a source, with citation backing embedded in the content architecture

- Verification at scale requires a production infrastructure, not an editorial preference

Related: How AI Search Engines Decide Which Sources to Cite

How Is AI Search Changing the Lead Generation Equation for Multi-Location Operators?

The AI search content gap directly impacts conversions and revenue. The operators who close the gap now are capturing a channel that converts at multiples of the site baseline. The operators who wait are watching that window compress.

Consumer Adoption Acceleration

AI as a local search tool is no longer an emerging behavior. BrightLocal’s 2026 Local Consumer Review Survey found that 45% of consumers have used AI tools like ChatGPT, Gemini, or Perplexity to find local business recommendations in the past year — a jump from just 6% the year before — BrightLocal.

- AI is now the third most-used platform for local recommendations, behind only Google and Facebook

- That 39-percentage-point increase in one year represents a channel shift, not a trend

- The businesses appearing in AI answers when consumers search are the ones with citation-ready content already in place

Conversion Rate Differential

The reason AI search citations matter isn’t just visibility — it’s the quality of the traffic they deliver.

- A 23-month regulated-industry production deployment tracked AI search CVR at 21.4% average over 8 months vs. 3.32% site baseline — a 6.4x performance multiplier

- ChatGPT referral traffic peaked at 40% CVR in a single month — driven by pre-qualified intent and trust transfer from the AI recommendation

- Session durations from AI referral traffic run 2:30–4:20 vs. 1:30–2:55 site average, confirming deeper pre-arrival engagement

- The conversion premium is structural: users arrive after the AI has already completed the qualification process

The First-Mover Window

887% ChatGPT traffic growth over 7 months in a single regulated-industry deployment validates one thing: the channel is still in its early growth phase.

- AI citation dominance compounds — systems reinforce existing citation patterns, favoring already-cited sources over time

- The window to establish early citation authority is measured in quarters, not years

- Competitive intensity in AI search citations is rising as SEO platforms add citation tracking

- The operators building a systematic content infrastructure now are establishing a position before the category is fully competitive

Is Your Current Content Infrastructure Built to Close the AI Search Content Gap?

Most multi-location content programs weren’t designed with AI citation requirements in mind. The question isn’t whether your current content strategy is good. It’s whether it was built for the search environment you’re operating in now.

Compliance Risk in YMYL Content

The compliance dimension of the AI search content gap is often the last thing operators consider — and the first thing that creates liability.

- In healthcare and legal content, a single unverified statistic can cascade across dozens of location pages simultaneously if the production system isn’t designed to catch it

- Generic AI content tools generate claims without verification — no audit trail, no compliance protection

- YMYL citation eligibility requires content that is maximally factual and auditable; content that can’t be verified in production can’t be trusted at the citation scale

- Even specialized RAG-based legal research tools hallucinated more than 17% of the time in Stanford research — general-purpose tools are worse

The Build-vs-Buy Question

Operators evaluating how to close the AI search content gap face an infrastructure decision, not just a content decision.

- Internal teams can typically produce 4-8 articles per month before quality degrades — insufficient for citation authority across 5+ locations

- Traditional agencies deliver content optimized for the 2019-era SEO; most have no systematic verification infrastructure and no AI citation optimization methodology

- Generic AI content tools accelerate production but introduce hallucination risk and produce undifferentiated output that doesn’t meet citation eligibility requirements

- The choice is between building a systematic content infrastructure and continuing with tools that weren’t built for AI search

What a Systematic Approach Requires

Closing the AI search content gap at a multi-location scale requires four capabilities that most operators don’t currently have.

- Research-first methodology: verified sources before AI generation, not AI writing from memory

- Citation verification infrastructure: line-by-line cross-checking, STAT vs. CLAIM labeling, audit trail for every data point

- Structured content architecture: answer-first formatting, question-form H2s, extractable bullet structure across all content

- Multi-platform optimization: simultaneous targeting of Google, ChatGPT, Perplexity, Claude, and Gemini — each with different citation selection criteria

How Content Ops Lab Builds Content Infrastructure

A 23-month regulated-industry production deployment — 1,000+ citation-verified articles, zero compliance violations — produced the methodology behind everything Content Ops Lab builds. AI search traffic from that deployment converted at an average of 21.4% over 8 months vs. a 3.32% site baseline, for a 6.4x performance multiplier driven by content architecture, not ad spend.

- 23-month production test inside a 12-location regulated healthcare organization

- 1,000+ citation-verified articles and pages delivered with zero compliance violations

- 45% of all leads from organic search — outperforming paid search nearly 2:1

- AI search CVR of 21.4% vs. 3.32% site baseline — 6.4x performance multiplier

- 653% impression growth and 1,700% click growth for an emerging brand in the same organization (14-month period)

- 5x production scale: 10 articles/month to 50+ without adding headcount

- 887% ChatGPT traffic growth in 7 months (July 2025–February 2026)

- Dual-brand methodology: validated on both mature brand maintenance and aggressive emerging-brand growth

The Content Ops Lab Production System

The same four-stage system that produced those results runs on every client engagement.

- Research: Verified sources and credible citations before generation — AI never writes from memory

- Verification: Line-by-line citation cross-check, STAT vs. CLAIM labeling, full audit trail for every data point

- Optimization: Multi-platform targeting — Google, ChatGPT, Perplexity, Claude, and Gemini — built into every article

- Delivery: WordPress staging or Google Docs packaging — publish-ready, compliant, and reviewed before it leaves production

Any agency can use Claude or ChatGPT. Very few have built the verification infrastructure that makes AI-generated content defensible in regulated industries — and citation-worthy to the AI systems that select it.

Ready to build a content infrastructure that scales without the compliance risk? Get in touch — we’ll assess your current content operation and outline what a systematic approach would look like for your organization.

FAQs About the AI Search Content Gap

Why can’t we close the AI search content gap by publishing more articles?

Publishing more content doesn’t increase citation share if the content isn’t structurally eligible for citation. AI systems select 3–8 sources per answer from hundreds of thousands of indexed pages — the volume compounds the thin-content problem without meeting the information-density or extractability requirements that determine selection. The fix is content infrastructure, not content volume.

How long does it take to start appearing in AI search citations?

Operators starting with minimal AI search visibility typically see citations appear within 3–6 months of systematic production. The compounding effect accelerates from there — AI systems reinforce existing citation patterns, so early citations produce more citations. The longer the delay, the more ground competitors gain in a channel that rewards first movers.

Why is the AI search content gap especially dangerous for healthcare and legal operators?

YMYL categories carry heightened trust requirements — AI systems apply stricter source-selection standards to content that could affect health, financial stability, or legal outcomes. Healthcare and legal operators publishing unverified, generically structured content are both excluded from citations and exposed to compliance liability. A single fabricated statistic can cascade across dozens of location pages in a high-volume production environment.

How is AI-optimized content different from traditional SEO content?

Traditional SEO content optimizes for keyword density, topical coverage, and link signals — factors that determine ranking position. AI-optimized content optimizes for information density, structured extractability, citation verification, and entity clarity — factors that determine citation eligibility. A page can rank in positions 1–10 and never appear in an AI Overview. The production requirements are related but distinct.

Does Content Ops Lab’s Done-For-You model handle AI citation optimization, or is that a separate engagement?

AI citation optimization is built into the standard production methodology — not an add-on. Every article produced under the Done-For-You model follows the same research-first, citation-verified, multi-platform-optimized workflow that generated 21.4% CVR from AI search in the regulated-industry deployment. The System Build model transfers the same infrastructure to your internal team.

Key Takeaways

- The AI search content gap is structural: being indexed creates eligibility, not citation. AI systems select 3–8 sources from hundreds of thousands of indexed pages — content must meet information density and extractability requirements to compete.

- Most multi-location content fails AI citation selection for three reasons: thin location pages, fragmented entity signals, and insufficient verification for YMYL trust requirements.

- Citation share is highly concentrated — three domains account for 58% of AI Overview citations across industries. Multi-location operators are competing for the remaining share, and most haven’t built systematic infrastructure to capture it.

- Citation-ready content requires information density over word count, structured extractability, and verified claims with a full audit trail — requirements traditional SEO content wasn’t designed to meet.

- AI search traffic converts at multiples of the site baseline — a 23-month production deployment tracked 21.4% CVR vs. 3.32% site average. Consumer adoption of AI for local recommendations jumped from 6% to 45% in one year.

- The first-mover window is open but compressing. AI systems reinforce existing citation patterns — operators building infrastructure now establish position before the category becomes fully competitive.

Build Content Infrastructure That Compounds: AI Search Content Gap

The AI search content gap isn’t a future problem. AI is already the third-most-used platform for local business recommendations, and consumers who arrive via AI citations convert at rates that traditional organic traffic can’t match. Researchers confirmed across 57,000+ URLs that information-dense, structured, verifiable content earns citations — generic, thin content doesn’t.

The infrastructure for producing citation-ready content at a multi-location scale requires systematic research protocols, citation verification, and a structured content architecture built for AI extraction.

Content Ops Lab built that system across 23 months of regulated-industry production. The question is whether your content operation is built to compete in the search environment you’re operating in now.

Related: What Is Content Infrastructure for Multi-Location Brands?