Why AI Referrals Convert Better Than Regular Search

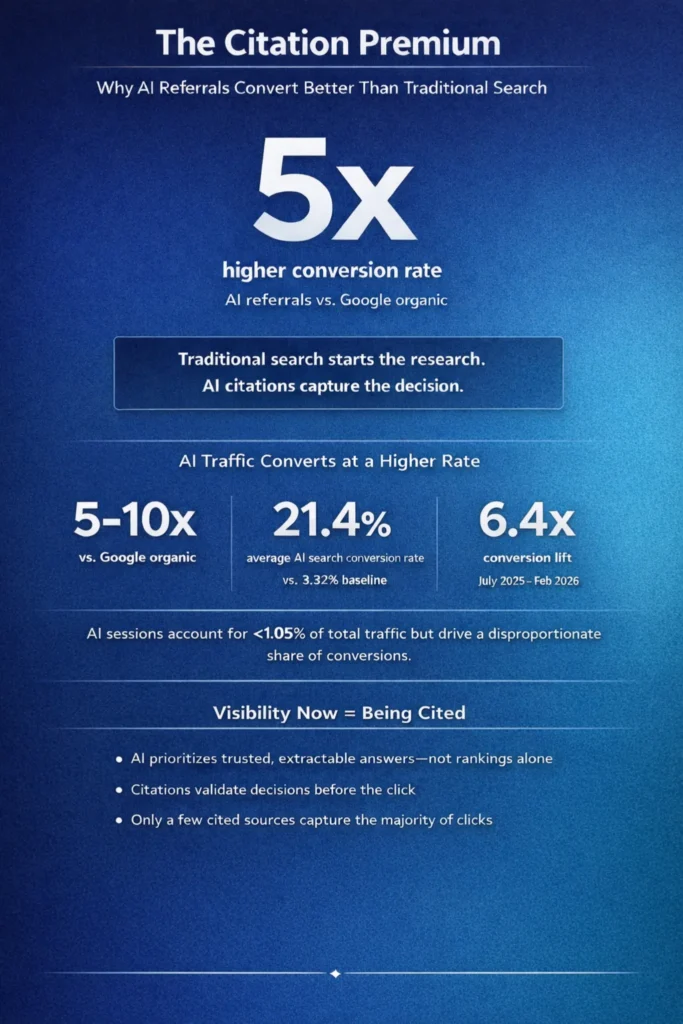

AI referral traffic converts at significantly higher rates than traditional organic search — not because AI platforms drive more volume, but because they pre-qualify visitors before the click. A 2026 benchmark analysis of 12.3 million visits across 347 businesses found that AI traffic converted at 14.2%, compared with Google’s 2.8%, a 5x performance gap.

For multi-location operators optimizing toward lead quality rather than raw session counts, that gap is the story. Content Ops Lab built its content production methodology inside a 12-location regulated healthcare organization, where AI search delivered 95+ confirmed conversions over eight months at a 21.4% average CVR against a 3.32% site baseline.

Related: Answer Engine Optimization: What Multi-Location Operators Need to Know

Why Are Traditional Search Metrics Misleading VP-Level Marketing Decisions?

Traditional organic search has been the default performance benchmark for content investment for two decades. The problem isn’t that organic traffic is bad — it’s that aggregate session volume obscures the distribution of intent within it. When a VP of Marketing evaluates content ROI on impressions and clicks alone, they’re measuring the wrong variable.

The Volume-Over-Quality Trap

High organic session counts look like content success on a dashboard. They rarely tell you what share of those sessions represented serious prospects versus casual browsers doing early-stage research.

- Most organic traffic represents informational intent — users gathering facts, not evaluating vendors

- Click volume rewards broad keyword coverage, not purchase-stage alignment

- Session counts from traditional search don’t distinguish between a researcher and a ready-to-book prospect

Traditional reporting treats all organic sessions as equivalent. They aren’t.

Intent Distribution Gaps in Organic Traffic

A 2025 analysis of over 50 million ChatGPT prompts found that in traditional search, informational queries dominated at 52.7%, with transactional intent at just 0.6% and commercial intent at 14.5%. The volume that fills most organic traffic reports is informational — people learning, not deciding. VP-level content investment decisions that rely on session volume as a proxy for ROI are measuring the noisiest part of the funnel.

What High-Volume Traffic Actually Costs

Volume-chasing content strategies require high article counts to maintain ranking positions, generate bounce traffic that inflates session numbers without conversion impact, and create a false sense of performance that justifies continued spend on low-intent channels. The gap between session count and conversion rate is where most content budgets leak. AI search data makes that gap visible in ways traditional reporting never did.

What Are the Real Conversion Differences Between AI Referrals and Organic Search?

Research on AI referral conversion is still in its early stages, and not every dataset tells the same story. The clearest picture comes from looking across multiple independent studies — accounting for where AI referrals outperform, where they don’t, and what the behavioral signals mean.

Cross-Industry Benchmark Data

Several independent studies now quantify the conversion gap between AI referral traffic and traditional organic search.

- SuperPrompt/Brandi.ai (347 businesses, 12.3M visits): AI traffic converted at 14.2% vs. Google’s 2.8% — a 5x gap — with AI visitors viewing 3.2x more pages and staying 4.1x longer per session

- Ahrefs internal data: AI traffic represented 0.5% of visits but drove 12.1% of new user signups — roughly 23x higher conversion than traditional search visitors

- Seer Interactive (B2B single-site): ChatGPT converted at 15.9%, Perplexity at 10.5%, Claude at 5%, Gemini at 3% — all significantly above Google organic at 1.76%

- First Page Sage (160+ companies): ChatGPT-influenced traffic consistently converts at higher rates than traditional SEO, with the largest gains in complex, considered-purchase verticals

The pattern across studies is consistent: AI referral traffic converts at multiples of organic search benchmarks.
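
The multiples quoted above can be reproduced directly from the published rates. The Python sketch below is illustrative only: the `lift` helper and the `benchmarks` dictionary are names introduced here, not part of any cited study, and the inputs are simply the percentages quoted in the list above.

```python
# Conversion rates (in percent) exactly as quoted in the studies listed above.
# Each entry maps a label to (AI conversion rate, comparison conversion rate).
benchmarks = {
    "SuperPrompt/Brandi.ai, AI vs. Google": (14.2, 2.8),
    "Seer Interactive, ChatGPT vs. Google organic": (15.9, 1.76),
    "Seer Interactive, Perplexity vs. Google organic": (10.5, 1.76),
    "Seer Interactive, Claude vs. Google organic": (5.0, 1.76),
    "Seer Interactive, Gemini vs. Google organic": (3.0, 1.76),
}


def lift(ai_cvr: float, baseline_cvr: float) -> float:
    """Return how many times higher the AI rate is than the baseline rate."""
    if baseline_cvr <= 0:
        raise ValueError("baseline_cvr must be positive")
    return ai_cvr / baseline_cvr


# Round to one decimal place for reporting.
lifts = {label: round(lift(ai, base), 1) for label, (ai, base) in benchmarks.items()}

for label, multiple in lifts.items():
    print(f"{label}: {multiple}x")
```

On these inputs the Seer Interactive multiples range from roughly 1.7x (Gemini) to about 9x (ChatGPT), so the size of the premium depends heavily on the platform.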

Where AI Referrals Outperform — and Where They Don’t

The honest version of this data includes the countervailing evidence. A 2025 ecommerce study found that ChatGPT referral traffic accounted for just 0.2% of total sessions — roughly 200x lower than Google organic — and that affiliate and organic search still outperformed ChatGPT on conversion and revenue per session in that context.

AI referral performance varies by business model, funnel design, and the quality of content structured for AI-driven journeys. Businesses that have invested in generative engine optimization consistently see stronger AI referral performance than those that passively receive sporadic clicks.

Engagement Depth as a Leading Indicator

Conversion rate is one signal. Behavioral depth points in the same direction. AI-referred visitors view more pages, stay longer, and convert faster on the first session. Brandi.ai’s data shows that 73% of AI users who converted did so on their first visit, compared with 23% for Google users. Faster first-session conversion means lower nurture cost and shorter sales cycles — a unit economics story, not just a traffic story.

Why Do AI Platforms Pre-Qualify Traffic Before the Click?

The conversion premium isn’t accidental. It’s structural. AI search platforms fundamentally change where a click occurs in the decision process — and that changes who shows up on your location pages.

How Conversational Queries Compress the Funnel

Traditional keyword search captures intent at the moment of query. A user types “chiropractor near me,” gets a list of results, and begins their evaluation after clicking. The click is the start of research. In conversational AI, research is conducted within the platform.

Users submit detailed, multi-constraint queries — “evidence-based chiropractor experienced with whiplash after car accidents in [city], open evenings” — and the AI iterates through follow-up questions, pros-and-cons comparisons, and availability filtering before surfacing any recommendations.

- Conversational AI encourages users to layer constraints: budget, timing, location, problem subtype

- Multi-turn dialogue narrows from broad research to specific decisions

- By the time a citation appears, the user has already completed most of their evaluation

A click from traditional search marks the early stage of the funnel. A click from a cited AI recommendation marks the late stage.

The Citation Premium Shortlist Effect

In traditional search, a business appears among ten or more results. In an AI-generated answer, the model typically cites two to four sources in a synthesized recommendation. Bruce Clay’s analysis notes that AI Overviews function like comprehensive featured snippets — pulling from multiple sources and elevating a small number of cited links within the answer itself.

- Being cited in an AI answer means competing among a handful of options, not dozens

- AI citations carry perceived endorsement weight — users treat them more like recommendations than neutral listings

- Around 90% of B2B buyers click through to cited sources in AI Overviews to validate before acting

Being one of three citations in an AI answer addressing a buyer’s specific problem is a categorically different position than ranking seventh on page one.

From Research Tool to Recommendation Engine

Nielsen Norman Group’s 2026 research documents how users increasingly treat AI systems as research assistants — posing complex tasks, reviewing synthesized answers, and clicking through only when ready to act.

The click isn’t the beginning of the research process. It’s the final confirmation step. AI-referred visitors often arrive having already understood the service category, compared alternatives, and self-selected for fit. The location page becomes the booking interface, not the education interface.

If your content strategy isn’t generating AI search citations, you’re leaving your highest-converting traffic channel unoptimized. Content Ops Lab builds the infrastructure to change that. Contact us today to discuss your content production requirements.

What Does the Citation Premium Look Like in a Live Production Environment?

Independent benchmark data establishes the pattern. Production data from a live multi-location deployment confirms it — and adds specificity that cross-industry averages can’t provide.

AI Search CVR vs. Site Baseline

Content Ops Lab’s methodology has been running in production inside a 12-location regulated healthcare organization for 23 months. Over eight months of tracked AI search performance (July 2025–February 2026), AI platforms delivered 95+ confirmed conversions at a 21.4% average CVR against a 3.32% site baseline — a 6.4x performance multiplier.

- AI search traffic represented less than 0.3% of total sessions

- That sub-0.3% delivered a disproportionate share of confirmed conversions

- Extended session durations (2:30–4:20 average) vs. site baseline (1:30–2:55) confirmed deeper engagement

Low volume, outsized conversion share. That’s the citation premium in production.

ChatGPT Traffic Growth Trajectory

ChatGPT accounts for approximately 80% of tracked AI search sessions in this deployment. The growth trajectory shows the channel in the early-growth phase, not maturity.

- July 2025: 8 sessions → February 2026: 79 sessions = 887% growth in 7 months

- CVR trajectory: 9.5% (August 2025) → 32.8% (December 2025) → 40.0% (January 2026) peak

- February 2026 maintained 19.23% CVR despite session volume fluctuation

887% channel growth on a conversion rate already 6x the site baseline is the compelling case for early investment.
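
The growth figure follows from the two session counts above. As a minimal sketch (the `percent_growth` helper is a name introduced here for illustration), the arithmetic is:

```python
def percent_growth(start: float, end: float) -> float:
    """Percentage change from start to end, relative to start."""
    if start <= 0:
        raise ValueError("start must be positive")
    return (end - start) / start * 100


# Session counts quoted above: 8 in July 2025, 79 in February 2026.
growth = percent_growth(8, 79)
multiple = 79 / 8

print(f"{growth:.1f}% growth")          # 887.5% growth
print(f"{multiple:.1f}x the July base")  # 9.9x the July base
```

The starting base of 8 sessions is small, so the percentage is sensitive to single-digit changes in either month.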

Platform-by-Platform Conversion Breakdown

ChatGPT dominates volume, but it’s not the only platform generating citations. Perplexity delivered a 25.7% CVR during its peak period (July–October 2025). Claude, Gemini, and emerging platforms are beginning to appear in referral data.

The Seer Interactive B2B data mirrors this multi-platform pattern: ChatGPT at 15.9%, Perplexity at 10.5%, Claude at 5%, Gemini at 3% — all converting above Google organic’s 1.76%. Each platform operates on its own citation logic. Multi-platform optimization reduces concentration risk while expanding the total citation surface.

Related: Why Traditional SEO Alone No Longer Wins Informational Search

How Do You Build Content That Gets Cited by AI Systems?

Understanding why AI referrals convert at a premium is useful. Knowing how to earn those citations is the operational question. The answer is a content architecture built around how AI systems extract, evaluate, and surface information.

Structural Requirements for AI Extraction

AI systems prioritize content structured for extraction — answers that can be pulled cleanly, formatted for scanning, and attributed to a credible source.

- Answer-first H2 structure: 40-60-word direct answers to the section question before any supporting detail

- Question-based H2 formatting: Section headers written as the questions users actually ask AI platforms

- Bullet-heavy content (40-60% of body): Structured formatting that AI can parse and reassemble as synthesized answers

- Statistical backing: Verified citations from credible sources give AI systems confidence to include your content

Content that AI can easily extract gets cited. Content that requires interpretation rarely surfaces in AI answers.
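
The structural targets above can be checked mechanically before publishing. The Python sketch below is one possible audit, under stated assumptions: the article does not say how the bullet ratio is measured, so this version counts bullets as a share of non-empty lines, and it treats the first non-bullet line as the direct answer. The function names and the sample text are hypothetical.

```python
BULLET_PREFIXES = ("- ", "* ")


def is_question_heading(heading: str) -> bool:
    """True when a section heading is phrased as a question."""
    return heading.strip().endswith("?")


def audit_section(body: str) -> dict:
    """Check one section body against the 40-60 word and 40-60% bullet targets.

    Assumption: bullet ratio = bullet lines / all non-empty lines.
    Assumption: the first non-bullet line is the answer-first opening.
    """
    lines = [line.strip() for line in body.splitlines() if line.strip()]
    if not lines:
        raise ValueError("section body is empty")

    bullets = [line for line in lines if line.startswith(BULLET_PREFIXES)]
    first_prose = next(
        (line for line in lines if not line.startswith(BULLET_PREFIXES)), ""
    )
    answer_words = len(first_prose.split())
    bullet_ratio = len(bullets) / len(lines)

    return {
        "answer_words": answer_words,
        "answer_in_range": 40 <= answer_words <= 60,
        "bullet_ratio": round(bullet_ratio, 2),
        "bullet_ratio_in_range": 0.40 <= bullet_ratio <= 0.60,
    }


# Synthetic sample: a 45-word opening, two bullets, one closing line.
sample_body = "\n".join(
    [
        " ".join(["word"] * 45),
        "- first supporting point",
        "- second supporting point",
        "Closing sentence for the section.",
    ]
)
report = audit_section(sample_body)
print(is_question_heading("How Do You Build Content That Gets Cited by AI Systems?"))
print(report)
```

A check like this only confirms the formatting targets; it says nothing about whether the statistics in the section are verified.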

Citation Verification as a Trust Signal

Most AI-generated content fails the citation standard because it’s written from the AI’s memory rather than from verified research. Fabricated statistics, hallucinated URLs, and unsourced claims can’t withstand the verification AI systems increasingly apply to sources they consider citing.

In regulated industries, the risk compounds: a single fabricated statistic can cascade across dozens of location pages simultaneously.

- AI systems reward content with credible, traceable citations

- Verified statistics from authoritative sources signal trustworthiness at the extraction layer

- Compliance-ready content performs better in AI citation precisely because it’s built to withstand scrutiny

Citation verification isn’t just a compliance requirement. It’s a competitive differentiator in the AI citation economy.

The First-Mover Window for Multi-Location Operators

BrightEdge data shows that AI Overviews now appear in over 11% of queries, with 49% year-over-year growth in complex queries that trigger these modules. Current AI adoption data shows that 53% of consumers are experimenting with or regularly using generative AI, up from 38% in 2024. That adoption curve is moving faster than most content strategies are adapting.

- Less than 5% of healthcare practices are currently optimizing for AI search citations

- Early citation dominance compounds: AI systems reinforce existing citation patterns over time

- Implementation takes 3-6 months; the first-mover window is measured in quarters, not years

The operators building AI-optimized content infrastructure now will be significantly harder to displace than those who start in 12 months.

How Should Multi-Location Operators Track and Measure AI Referral Performance?

Tracking AI referral performance requires deliberate configuration. Most analytics setups undercount AI-driven journeys by default — and the gap between what’s measured and what actually converts is material.

Attribution Gaps in Current Analytics Stacks

AI platforms handle link referral inconsistently. Some pass clean referrer strings (“chat.openai.com,” “perplexity.ai”) that GA4 can classify if configured correctly. Others route sessions into “direct” or “(other)” channels without referrer data. Multi-touch journeys — where a user researches via AI, then later searches for the brand name — further complicate attribution.

- Without a custom configuration, AI traffic is fragmented across GA4’s default channel groupings

- Total AI session volume is almost certainly undercounted in standard reporting setups

- Perplexity traffic in particular shows inconsistent tracking — total volume likely understated

Even organizations that are seeing meaningful AI CVR data are likely seeing only part of the picture.
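
One way to see how much AI traffic is hiding in exported session data is to classify referrer strings yourself. The Python sketch below is an illustration, not GA4's own logic: the `classify_referrer` function and the channel labels are hypothetical, the domain table uses the platform domains this article names, and `chatgpt.com` is an addition made here because ChatGPT's web app currently runs on that domain.

```python
from urllib.parse import urlparse

# Known AI platform referrer domains mapped to a platform label.
AI_REFERRER_DOMAINS = {
    "chat.openai.com": "ChatGPT",
    "chatgpt.com": "ChatGPT",  # added here: ChatGPT's current web domain
    "perplexity.ai": "Perplexity",
    "claude.ai": "Claude",
    "gemini.google.com": "Gemini",
    "copilot.microsoft.com": "Copilot",
}


def classify_referrer(referrer: str) -> str:
    """Return 'ai:<platform>', 'direct' (no referrer), or 'other'."""
    if not referrer:
        # Sessions with no referrer cannot be attributed; some AI visits land here.
        return "direct"
    if "://" not in referrer:
        # Let urlparse treat a bare host such as "perplexity.ai" as a network location.
        referrer = "//" + referrer

    host = (urlparse(referrer).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]

    for domain, platform in AI_REFERRER_DOMAINS.items():
        # Match the domain itself or any subdomain of it.
        if host == domain or host.endswith("." + domain):
            return f"ai:{platform}"
    return "other"


for ref in ["https://chat.openai.com/", "https://www.perplexity.ai/search", "", "https://www.google.com/"]:
    print(repr(ref), "->", classify_referrer(ref))
```

Sessions that arrive with no referrer still fall into "direct", so a table like this narrows the undercount without eliminating it.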

Building an AI Referral Tracking Framework

A functional AI attribution setup requires deliberate configuration, not default GA4 behavior.

- Custom channel groupings: Capture known AI platform domains (chat.openai.com, perplexity.ai, claude.ai, gemini.google.com, copilot.microsoft.com)

- UTM parameters: Append UTM source tags to URLs appearing in AI citations for direct attribution

- Dedicated conversion goals: Segment AI referral traffic in conversion reporting, separate from organic and direct

- Regular manual testing: Query target keywords across major AI platforms quarterly; document citation presence and context

Infrastructure for tracking should be built before you need it. By the time AI search volume justifies the project, you’ve already lost months of performance data.

Balancing AI Optimization with Channel Diversification

AI search is the highest-converting channel in the production deployment above, yet it accounts for less than 0.3% of total traffic. In the same production data, organic search delivers 45% of all leads, outperforming paid search nearly 2:1.

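Pew/eMarketer data shows only 8% of users click a link when an AI Overview is present, versus 15% without one. As AI answers absorb clicks that traditional listings used to capture, the same content has to earn both rankings and citations, which makes AI optimization and traditional SEO complementary, not competing, investments.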

- Organic search remains the dominant lead volume channel; AI search is the highest-converting channel

- Over-indexing on AI citations at the expense of traditional SEO creates concentration risk

- The strongest content strategy earns both Google rankings and AI citations for the same content

How Content Ops Lab Builds Content Infrastructure That Earns AI Citations

Content Ops Lab built its methodology inside a 12-location regulated healthcare organization over 23 months of live production. The AI search data from that deployment — 21.4% average CVR, 6.4x the site baseline, 887% ChatGPT traffic growth in seven months — is production output from a system built to earn citations while maintaining compliance in a regulated industry.

- 23-month production test inside a 12-location regulated healthcare organization

- 1,000+ citation-verified articles and pages delivered with zero compliance violations

- 45% of all leads from organic search — outperforming paid search nearly 2:1

- AI search converting at 21.4% average CVR vs. 3.32% site baseline — 6.4x better

- 887% ChatGPT traffic growth in 7 months (July 2025–February 2026)

- 653% impression growth and 1,700% click growth for an emerging brand over 14 months

- 5x production scale: 10 articles/month to 50+ without adding headcount

- Dual-brand methodology validated on mature brand maintenance and aggressive growth scaling

The Content Ops Lab Production System

Citation-worthiness is built into the workflow at every stage — not appended at the end.

- Research: Verified sources pulled before generation — no AI writing from memory

- Verification: Line-by-line citation cross-check with STAT vs. CLAIM labeling and full audit trail

- Optimization: Multi-platform formatting for Google, ChatGPT, Perplexity, Claude, and Gemini simultaneously

- Delivery: WordPress staging or Google Docs packages — publish-ready, compliance-reviewed, graded

Content earns citations because it’s built to deserve them.

Ready to build a content infrastructure that captures the citation premium? Get in touch today — we’ll assess your current content operation and outline what a systematic approach would look like for your organization.

FAQs About the Citation Premium and AI Referral Conversion

How long does it take for AI search citations to start driving measurable traffic?

AI citation traffic builds as AI systems index and reinforce content patterns. Most organizations see initial citation appearances within 2-4 months of publishing structured, citation-ready content, with measurable traffic at 4-6 months. The full compounding effect develops over 6-12 months of consistent production. Given that implementation takes 3-6 months, the time to start is before your competitive window closes.

What content format does AI prefer to cite — and how is it different from traditional SEO?

AI systems prioritize extractability: question-based H2 headers, 40-60-word answer-first openings, bullet-heavy formatting (40-60% of body content), and statistically backed claims from credible sources. Traditional SEO optimizes for keyword density and link signals. These aren’t competing requirements: well-structured content performs on both dimensions simultaneously.

How does Content Ops Lab verify that content will be citation-worthy before publishing?

Every article goes through line-by-line citation verification — each statistic traced to its source document with exact quotes and an audit trail. Content is structured with answer-first formatting, question-based H2s, and 40-60% bullet ratios to meet AI extraction criteria. The verification protocol was built inside a regulated healthcare environment where fabricated claims carry real compliance consequences.

Is the citation premium real across all industries, or just specific verticals?

The strongest data comes from complex, considered-purchase verticals (healthcare, B2B SaaS, professional services), where AI pre-qualification does the most work before the click. The 2025 ecommerce study covered by Search Engine Land showed weaker performance in lower-consideration environments. Multi-location healthcare, legal, and home services operators are in the sweet spot: high-consideration, localized decisions where AI-driven research behavior is most pronounced.

How do you track conversions from AI platforms like ChatGPT and Perplexity in GA4?

Standard GA4 significantly undercounts AI referral traffic. Build custom channel groupings capturing known AI platform domains, create dedicated conversion goals segmented for AI referral traffic, and append UTM parameters to cited content URLs where possible. Start with manual platform audits — query your target keywords in ChatGPT, Perplexity, and Gemini — to establish a citation baseline before GA4 configuration work begins.

Key Takeaways

- AI referral traffic converts at roughly 5x to 23x the rate of traditional organic search in the headline figures from the benchmark studies cited above; the citation premium is structural, not a statistical anomaly

- The performance gap is explained by pre-qualification: AI platforms compress the upper and mid-funnel inside the conversation before any click occurs

- Production data from a 23-month regulated healthcare deployment confirms the pattern: 21.4% average AI search CVR vs. 3.32% site baseline, 887% ChatGPT traffic growth in 7 months

- Earning AI citations requires specific content architecture — question-based H2s, answer-first formatting, 40-60% bullet ratios, and verified citations from credible sources

- Standard GA4 setups undercount AI referral volume; build attribution infrastructure proactively, not retroactively

- Less than 5% of healthcare practices are currently optimizing for AI search citations — the first-mover window is open, but closing

Related: Generative Engine Optimization: How Brands Get Recommended by AI

The Operator’s Path Forward on the Citation Premium

The citation premium is documented across multiple independent datasets and confirmed in live production. Brandi.ai’s finding that AI-acquired customers show 67% higher lifetime value than Google-acquired customers means the advantage extends beyond the initial conversion. For multi-location operators, the strategic question isn’t whether AI referral traffic performs better — it’s whether your content infrastructure is built to earn those citations before your competitors are.

Content Ops Lab builds that infrastructure: research-first, citation-verified, and optimized for both traditional search and AI platforms. The organizations that invest now will be structurally harder to displace when mainstream adoption closes the first-mover window.