How AI Search Engines Decide Which Sources to Cite

How AI search engines decide which sources to cite comes down to four core factors: retrieval relevance, domain authority, content extractability, and data density. Research indicates that optimizing for these factors can boost visibility in generative engine responses by up to 40%, a performance gap that compounds over time as citation patterns stabilize.

For VP-level operators managing content across 5, 10, or 20+ locations, this isn’t an SEO footnote. It’s the difference between your organization getting cited when a high-intent prospect asks ChatGPT for a recommendation — or watching a competitor get the referral instead.

Content Ops Lab built its citation-first content infrastructure within a 12-location regulated healthcare organization, generating 95+ confirmed AI search conversions at an average conversion rate of 21.4% over 8 months — 6.4x the site average.

Related: SEO vs AEO vs GEO – How Multi-Location Businesses Should Think About Modern Search

Why Are AI Search Engines Deciding to Cite Your Competitors Instead of You?

AI search engines don’t rank websites. They select sources to ground answers, and the selection logic is categorically different from the algorithm that puts a blue link at position one. Most multi-location operators are running a content strategy designed for 2019-era Google. That strategy doesn’t transfer to the citation economy.

How AI Citation Differs from Traditional Ranking

Traditional search ranking orders a list of links by relevance and authority so users can choose what to click. AI search reads dozens of pages, synthesizes a direct answer, and exposes a subset of those sources as citations — or incorporates information from pages that are never cited at all.

- Ranking #1 in Google does not guarantee an AI citation

- Content can influence AI answers without receiving a visible link

- Citations may drive no measurable traffic even when they appear

- A page ignored by traditional search can still be cited by Perplexity or ChatGPT

BrightEdge’s 16-month analysis found that overlap between AI Overview citations and organic rankings grew from 32.3% to 54.5% — meaning nearly half of AI-cited pages still come from outside the traditional top-10.

The Query Fan-Out Problem

Google’s own documentation states that AI Overviews and AI Mode may use a “query fan-out” technique — “issuing multiple related searches across subtopics and data sources” to develop a response. ChatGPT rewrites user queries into multiple targeted sub-queries. Claude generates iterative searches and refines based on previous outputs.

- A single user question triggers 3-10 sub-queries across engines

- Each sub-query evaluates a different content angle or subtopic

- Your content must be discoverable and extractable across multiple query variations

- Ranking for one keyword phrase doesn’t guarantee coverage across the fan

AI retrieval operates at the paragraph and answer-block level, pulling extractable content from wherever it exists in your indexed pages.
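
One rough way to audit fan-out coverage is to compare a list of likely sub-queries against the headings on a page. The sketch below is a minimal illustration, not how any engine actually matches content: the token-overlap heuristic, the `min_overlap` threshold, and the function names are all assumptions made for the example.

```python
import re


def tokens(text: str) -> set[str]:
    """Lowercase word tokens, ignoring words of two characters or fewer."""
    return {w for w in re.findall(r"[a-z0-9]+", text.lower()) if len(w) > 2}


def uncovered_subqueries(
    subqueries: list[str], headings: list[str], min_overlap: float = 0.5
) -> list[str]:
    """Return sub-queries that no heading matches well enough.

    Coverage is the share of a sub-query's tokens found in a single heading.
    This is a crude stand-in for semantic retrieval (an assumption).
    """
    heading_tokens = [tokens(h) for h in headings]
    missing = []
    for query in subqueries:
        q_tokens = tokens(query)
        if not q_tokens:
            continue
        best = max(
            (len(q_tokens & h) / len(q_tokens) for h in heading_tokens),
            default=0.0,
        )
        if best < min_overlap:
            missing.append(query)
    return missing
```

Run against a page's H2 and H3 headings, the returned list points to subtopics where a new answer block may be worth adding.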

What Gets Left Out of AI Answers

AI systems apply proprietary filtering after retrieval — removing pages that are promotional in tone, thin on data, or structurally difficult to parse.

- Dense, unstructured prose without answer-first formatting

- Promotional copy that prioritizes features over factual claims

- Statistical assertions without cited sources

- Outdated content without visible refresh signals

A cross-vendor synthesis found that non-promotional tone is a positive citation signal, while heavily promotional copy shows a negative correlation. Marketing language designed for human readers actively undermines AI citation selection.

What Are the Real Options for Earning AI Search Citations at Scale?

The citation economy is real, and operators are responding with three broad approaches. Understanding the trade-offs is the starting point for an infrastructure decision that holds at 20, 50, or 100 articles per month.

Relying on Existing SEO Rankings

BrightEdge data confirms that over half of AI Overview citations come from pages that rank organically, but the overlap is incomplete and growing only gradually.

- Organic ranking improves citation probability, but it doesn’t guarantee it

- Formatting and data density determine whether a ranking page gets cited

- Pages optimized only for traditional SEO often fail the extractability filter

- High-ranking but thin or promotional pages are increasingly bypassed

Relying on existing rankings without adapting the content architecture results in a passive AI search strategy. You may capture some citations incidentally. You won’t build a defensible citation position.

Third-Party Agency Approaches

Traditional content agencies have added “AI optimization” and “GEO” services to their pitch decks. Most are applying surface-level structural changes — FAQ sections, more bullet points — without the underlying research verification infrastructure that AI systems actually reward.

- FAQ schema and bullets are necessary but not sufficient

- Citation verification is absent from most agency workflows

- AI systems penalize fabricated or unsourced statistics — agencies without verification protocols create compliance risk

- Generic content at scale rarely achieves the data density and entity consistency that drives stable citation share

In regulated industries, the risk of hallucination in unverified AI content isn’t theoretical. It’s a concrete liability that compounds across every location page that carries a fabricated statistic.

Building Citation-First Infrastructure

The infrastructure approach treats AI citation as a primary KPI from the start — designing content architecture, research workflows, and quality control protocols around what citation systems actually reward.

- Research verification baked into production, not added after the fact

- Data density and external citations embedded at the structural level

- Entity consistency maintained across location pages to strengthen brand mention signals

- Content freshness protocols that preserve citation stability over time

The infrastructure approach requires more upfront investment. It also produces compounding returns: once established, citation patterns stabilize, and early citation dominance is self-reinforcing.

What Content Characteristics Actually Drive AI Search Engine Citation Decisions?

The GEO research program at Princeton, combined with large-scale benchmarks from BrightEdge and Averi, produces a clear signal on what AI systems reward. These are empirically tested factors with measurable impact on citation visibility.

Structure and Extractability Requirements

AI retrieval systems parse content to identify extractable answer blocks — short, direct responses to specific questions that can be grounded in a generated answer and attributed to a source URL.

- FAQ and Q&A blocks enable AI systems to lift precise answers and tie them to a URL

- Short answer summaries (40-60 words) under each heading mirror the AI Overview structure directly

- Bullet lists and comparison tables are easier for retrieval-augmented systems to parse than narrative prose

- Clean heading hierarchy signals content organization to both traditional crawlers and AI retrieval systems

Google’s own documentation on AI Overviews identifies FAQ schema, answer cards under headings, and bullet lists as formatting traits that improve inclusion odds.
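
For the FAQ blocks mentioned above, structured data is commonly expressed as schema.org `FAQPage` JSON-LD. The sketch below generates that markup from question-answer pairs. The helper name `faq_jsonld` is hypothetical, and whether a given engine consumes the markup is not something this example can confirm.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Build schema.org FAQPage JSON-LD from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2)


if __name__ == "__main__":
    print(faq_jsonld([("What is query fan-out?", "One question split into several sub-queries.")]))
```

The output would typically be embedded in a `<script type="application/ld+json">` tag on the page that displays the same questions and answers.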

Data Density and Statistical Backing

Adding statistics improved AI visibility by 41%, adding quotations improved it by 28%, and citing external sources improved it by 115% for lower-ranked content. These aren’t marginal gains. They’re structural advantages available to any content operation willing to verify and embed research at the production level.

- Specific statistics outperform general claims in AI citation selection

- External citations within content increase citation probability for lower-authority domains dramatically

- Numeric claims attributed to credible sources build AI confidence in a page’s reliability

- Adding more words without adding data shows no measurable visibility benefit

Authority Signals vs. Promotional Tone

Brand mentions correlate 0.664 with AI citation probability, compared to 0.218 for backlinks — a 3x difference that changes the optimization calculus for operators focused on AI search visibility.

- Brand mention consistency across your content portfolio builds entity authority

- Neutral, informational tone correlates positively with citation selection

- Overtly promotional copy correlates negatively — regardless of domain authority

- Authorship clarity and organizational credibility signals reinforce citation probability

Content written purely for conversion fails the AI citation filter, while content written to establish informational authority passes.

If your operation needs to produce 20-50+ articles per month that earn AI citations without sacrificing compliance or quality, Content Ops Lab builds the infrastructure to make that possible. Contact us today to discuss your content production requirements.

How Does Each AI Platform Select and Display Sources Differently?

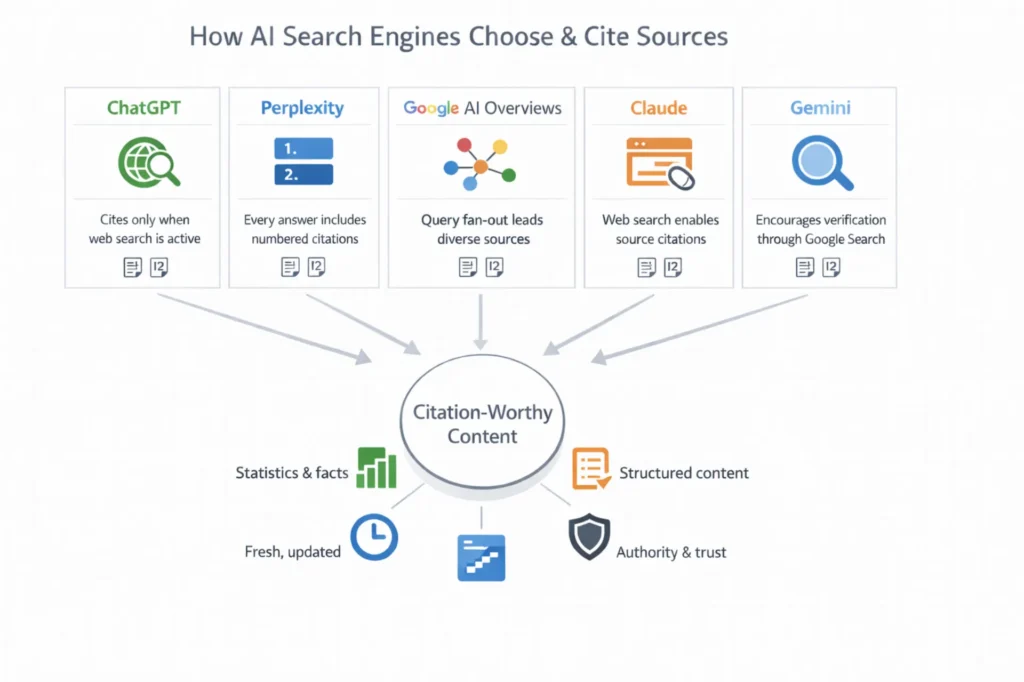

ChatGPT, Perplexity, Google AI Overviews, Gemini, and Claude share the same broad retrieval-and-generation pipeline but differ in their selection logic, citation presentation, and indexing dependencies.

ChatGPT and Perplexity Citation Mechanics

ChatGPT Search rewrites user queries into multiple targeted sub-queries, sends them to partner search providers, and may issue additional specific queries before generating an answer. When web search is not active, fabricated attributions are a documented risk.

- Citations appear only when the Search tool is active or automatically triggered

- Prefers pages matching inferred intent — tutorial, guide, examples — in titles and headings

- Relies on approximately the top 20 organic results from the underlying search provider

Perplexity operates as a citation-forward engine by default, combining semantic relevance, source authority, cross-domain corroboration, and recency.

- Numbered citations map to specific claims, often one per sentence

- Corroboration across multiple domains increases selection probability

- Real-time web search means that freshness signals are weighted heavily

Google AI Overviews and Gemini Behavior

Google AI Overviews run on a customized Gemini model built on top of the standard Google Search index. Query fan-out lets AI Mode issue multiple sub-searches simultaneously.

- Traditional ranking signals remain influential — 54.5% overlap with organic top results

- Schema markup (FAQ, HowTo, LocalBusiness) improves inclusion odds measurably

- Structured answer blocks and tables are specifically identified as favorable formatting

Gemini’s double-check feature highlights statements based on Google Search verification — but those highlighted links are not necessarily the pages used to generate the original answer.

Claude Source Selection and Brave Index Alignment

Independent analysis shows Claude’s citations align with Brave Search’s organic results in approximately 86.7% of cases.

- Ranking in Brave Search is a primary lever for Claude citation probability

- Allowing Claude’s crawlers via robots.txt is a prerequisite for source consideration

- Clean, well-structured content matching natural-language queries outperforms keyword-optimized pages

Brave visibility matters for Claude. Bing visibility matters for ChatGPT. Google indexing matters for AI Overviews and Gemini. Platform-specific awareness is required for full citation coverage.
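
Because crawler access via robots.txt is a prerequisite, it can help to test a robots.txt file against the user agents you care about. The sketch below uses Python's standard `urllib.robotparser`. The agent names in the usage example (`ClaudeBot`, `GPTBot`, `PerplexityBot`) are assumptions for illustration and should be confirmed against each vendor's current crawler documentation.

```python
from urllib.robotparser import RobotFileParser


def allowed_agents(robots_txt: str, agents: list[str], url: str) -> dict[str, bool]:
    """Report whether each user agent may fetch `url` under the given robots.txt text."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in agents}


if __name__ == "__main__":
    sample = "User-agent: ClaudeBot\nDisallow: /\n\nUser-agent: *\nAllow: /\n"
    # Agent names below are illustrative assumptions, not a verified list.
    report = allowed_agents(
        sample,
        ["ClaudeBot", "GPTBot", "PerplexityBot"],
        "https://example.com/locations/",
    )
    for agent, allowed in report.items():
        print(f"{agent}: {'allowed' if allowed else 'blocked'}")
```

This checks only the robots.txt rules. It says nothing about whether a page is indexed in Brave, Bing, or Google.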

Related: Answer Engine Optimization – What Multi-Location Operators Need to Know

What Does Optimizing for AI Citations Actually Deliver in Production?

Production data is unambiguous. AI search traffic represents a fundamentally different quality of visitor that converts at rates traditional organic traffic can’t match.

AI Search Conversion Rates vs. Organic Baselines

Content Ops Lab built and operated its citation-first methodology inside a 12-location regulated healthcare organization over 23 months. Over an 8-month measurement window (July 2025 – February 2026), AI search platforms generated 95+ confirmed conversions from 537+ sessions — a 21.4% average conversion rate against a 3.32% site baseline. That’s a 6.4x performance multiplier from less than 0.3% of total traffic.

- ChatGPT sessions grew 887% in 7 months (8 sessions → 79 sessions)

- Peak ChatGPT CVR reached 40% in January 2026, with 52 sessions

- CVR trajectory: 9.5% (August 2025) → 32.8% (December 2025) → 40% (January 2026)

- Perplexity delivered 25.7% CVR during the peak measurement period

AI search converts at 6x the site average because users asking an AI system for a service recommendation have already moved past the awareness stage. The AI platform filters options, evaluates authority, and refers a pre-qualified visitor before they ever land on your site.
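
The headline ratios in this section follow from simple arithmetic on the figures quoted above. As a quick check (the function names are just for illustration):

```python
def multiplier(rate: float, baseline: float) -> float:
    """How many times larger `rate` is than `baseline`."""
    return rate / baseline


def pct_growth(start: float, end: float) -> float:
    """Percentage growth from `start` to `end`."""
    return (end - start) / start * 100


# 21.4% AI search CVR against the 3.32% site baseline
print(round(multiplier(21.4, 3.32), 1))  # 6.4

# ChatGPT sessions growing from 8 to 79
print(round(pct_growth(8, 79), 1))  # 887.5
```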

Brand Mention Correlation with Citation Probability

Brand mentions correlate 0.664 with AI citation probability versus 0.218 for backlinks. Citation-first content strategy and brand authority strategy are the same program. Every article that earns a citation reinforces brand-entity signals, increasing the probability of future citations.

- Brand consistency across 100+ pages outperforms a single high-authority backlink

- Topic cluster depth strengthens entity associations with specific subjects

- NAP consistency across location pages contributes to organizational entity signals

BrightEdge tracking confirms the compounding dynamic: 96.8% of cited domains see zero change in citation share week over week. When citation share changes, it tends to drop suddenly — making early establishment more valuable than incremental optimization.

The First-Mover Compounding Effect on How AI Search Engines Decide Which Sources to Cite

The citation economy rewards early entrants. AI systems reinforce existing citation patterns because content that earns citations is structured, data-rich, and authoritative — characteristics that compound over consistent production.

- Fewer than 5% of multi-location healthcare practices currently optimize for AI citation

- Fewer than 10% of legal firms track AI referral traffic

- Competition for AI citations remains limited in home services and most regional markets

- The awareness phase is beginning — the competitive window is measured in quarters, not years

How Do You Build a Content Operation That Gets Consistently Cited?

The research on AI citation behavior translates directly into operational priorities — infrastructure decisions that determine whether your content program produces compounding citation authority or unstable, one-off appearances.

Citation Tracking as a Primary KPI

AI search traffic appears fragmented across GA4 referral classifications — ChatGPT sessions may register as direct, Perplexity as an obscure referral source, and AI Overviews as organic. Without dedicated attribution, most operators are systematically undercounting AI citation performance.

- Instrument GA4 to identify and segment AI platform referral traffic

- Track CVR by AI platform — Perplexity and ChatGPT often show distinct conversion behavior

- Recognize that citation stability is binary: domains hold citation share or drop suddenly
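
A starting point for segmentation is to classify sessions by referrer hostname before they disappear into generic buckets. The sketch below is a minimal example that runs on exported referrer URLs rather than inside GA4 itself. The hostname list is an assumption and will need updating as platforms change domains. Sessions that arrive with no referrer at all cannot be recovered this way.

```python
from urllib.parse import urlparse

# Hostname-to-platform map: an illustrative assumption, not an exhaustive list.
AI_REFERRERS = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "gemini.google.com": "Gemini",
    "claude.ai": "Claude",
}


def classify_referrer(referrer_url: str) -> str:
    """Map a referrer URL to an AI platform label, or 'other' if unrecognized."""
    host = (urlparse(referrer_url).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    for domain, platform in AI_REFERRERS.items():
        # Match the domain itself or any subdomain of it.
        if host == domain or host.endswith("." + domain):
            return platform
    return "other"
```

Grouping conversions by the returned label gives a per-platform CVR that can be compared against the site baseline.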

Content Architecture for Multi-Platform Extraction

Every major AI search platform rewards the same fundamental content architecture. Platform differences lie in the indexing backend and citation presentation, not in what makes content structurally citation-worthy.

- Answer-first structure: 40-60-word direct responses under every H2 and H3

- Question-based headings that mirror conversational search queries

- Bullet-heavy content (40-60% of article body) for easy AI parsing

- Data density: statistics, external citations, and verified claims throughout

- FAQ blocks with explicit question-answer pairs for schema extraction
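
The word-count and bullet-share targets above can be checked mechanically during editing. The sketch below assumes plain-text drafts with `- ` bullets, and it measures bullet share by line count, which is one possible reading of "40-60% of article body". Both function names are hypothetical.

```python
def answer_block_ok(first_paragraph: str, low: int = 40, high: int = 60) -> bool:
    """True if the opening paragraph under a heading is within the word range."""
    return low <= len(first_paragraph.split()) <= high


def bullet_share(body: str) -> float:
    """Fraction of non-empty lines that are '- ' bullets (line-count assumption)."""
    lines = [line.strip() for line in body.splitlines() if line.strip()]
    if not lines:
        return 0.0
    return sum(line.startswith("- ") for line in lines) / len(lines)
```

A draft that fails either check is a candidate for restructuring before it goes to verification.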

Operational Priorities for Scaled Programs

- Focus GEO efforts on pages that already rank or nearly rank and can be enriched with statistics and structured layouts

- Implement editorial processes to refresh data and update studies — freshness is a recurring selection signal

- Build topic clusters with consistent entities — AI systems associate domains with topics based on cluster coherence, not individual article performance

- Verify every statistic before publication — fabricated statistics in regulated industries create compliance exposure that outweighs any citation benefit

How Content Ops Lab Builds Content Infrastructure That Gets Cited

Our client, a 12-location regulated healthcare organization, generated 95+ confirmed AI search conversions at an average CVR of 21.4% over 8 months — from less than 0.3% of total traffic. That performance came from citation-first infrastructure, not retrofitted traditional SEO.

- 23-month production test inside a regulated healthcare organization with strict compliance requirements

- 1,000+ citation-verified articles and pages delivered with zero compliance violations

- 45% of all leads from organic search — outperforming paid search nearly 2:1

- AI search converting at 21.4% average vs. 3.32% site baseline — 6.4x performance multiplier

- 887% ChatGPT traffic growth in 7 months (July 2025 – February 2026)

- 653% impression growth and 1,700% click growth for an emerging brand in 14 months

- 5x production scale: 10 articles/month to 50+ without adding headcount

- Dual-brand methodology: proven on both established brand maintenance and emerging brand growth

The Content Ops Lab Production System

- Research: Verified sources from credible publications before any AI generation begins — no writing from memory, no hallucinated citations

- Verification: Line-by-line citation cross-check with STAT vs. CLAIM labeling and a full audit trail for every data point

- Optimization: Multi-platform formatting for Google, ChatGPT, Perplexity, Claude, and Gemini simultaneously

- Delivery: WordPress staging or Google Docs packages — publish-ready, Grammarly-reviewed, compliance-verified

The verification infrastructure is what separates citation-worthy content from content that merely claims to be citation-worthy.

Ready to build a content infrastructure that earns consistent AI citations without compliance risk? Get in touch today — we’ll assess your current content operation and outline what a systematic approach would look like for your organization.

FAQs About How AI Search Engines Decide Which Sources to Cite

How long does it take for content to start appearing in AI search citations when optimizing for how AI search engines decide which sources to cite?

Citation timelines vary by platform and domain authority. Perplexity and ChatGPT Search can surface new content within days if it’s structured for extraction. Google AI Overviews follow traditional indexing timelines — weeks to months. Citation share stabilizes quickly once established, making consistent early production more valuable than sporadic optimization.

What’s the difference between ranking in Google and being cited — and why does it matter for how AI search engines decide which sources to cite?

Google ranks pages into an ordered list of links. AI citation selects source pages to ground a synthesized answer. Over half of AI Overview citations come from pages that rank organically, but nearly half do not. A top-five ranking page formatted for human readers may never be cited. A lower-ranking but data-rich, well-structured page can earn citations that a thin top-ranking page cannot.

How does citation verification protect against AI hallucination risk in regulated industries?

AI systems writing from memory produce fabricated statistics and hallucinated URLs. In regulated industries, a single unverified statistic can cascade across dozens of location pages simultaneously. Citation verification cross-checks every data point against source research before publication, producing an audit trail that meets healthcare, legal, and financial content standards — and data-backed claims with attributed sources are a positive citation signal in their own right.

Can a multi-location business track AI citations across ChatGPT, Perplexity, and Gemini simultaneously?

Yes, but it requires deliberate attribution infrastructure. AI platform traffic appears fragmented across GA4 classifications — sessions registering as direct, obscure referral sources, or organic. Dedicated UTM parameters and referral source segmentation are required for accurate attribution. Most operators are significantly undercounting AI citation performance because they haven’t instrumented for it.

Is Done-For-You or System Build better for organizations looking to scale AI citation performance?

Done-For-You is the faster path — Content Ops Lab runs the entire workflow, and your team reviews the final deliverables. System Build is right if you have an internal team capable of operating the infrastructure and want full ownership after implementation. Both deliver the same citation-first architecture and quality standards.

Key Takeaways

- AI search engines select sources based on retrievability, authority signals, data density, and content extractability — not traditional SEO ranking alone

- Query fan-out means a single user question triggers multiple sub-queries; content must be extractable across keyword variations, not just primary terms

- Data-rich content with external citations and statistics improves AI citation visibility by 40%+ — adding words without adding data shows no measurable benefit

- Brand mentions correlate 3x more strongly with AI citation probability than backlinks, making entity consistency across your content portfolio a primary strategic lever

- AI search traffic converts at 6x the site average when content is built for citation — users arriving from AI platforms are pre-qualified before they land

- Citation share stabilizes quickly once established and drops suddenly when lost — early citation infrastructure is significantly harder to displace than late-stage optimization

- Multi-platform coverage requires platform-specific indexing awareness: Brave for Claude, the Google index for AI Overviews and Gemini, and structured, reference-dense content for Perplexity and ChatGPT Search

Build Content Infrastructure That Compounds: How AI Search Engines Decide Which Sources to Cite

The citation economy is operating at full speed. AI search platforms are generating high-intent, high-converting referral traffic for organizations whose content meets the selection criteria — and filtering out everyone else, regardless of traditional SEO performance. GEO research confirms that structured, data-rich, verified content earns significantly more AI visibility than volume-optimized or promotional content.

The infrastructure decision you make now determines whether your organization compounds citation authority over the next 18 months or starts from zero when the competitive window closes.

Content Ops Lab built this methodology in production — 1,000+ verified articles, 95+ confirmed AI search conversions, zero compliance violations. The operators who act before mainstream adoption have the advantage. The operators who wait will find the citation positions already occupied.

Related: What Is Content Infrastructure for Multi-Location Brands?