Answer Engine Optimization: What Multi-Location Operators Need to Know

Answer engine optimization is the practice of structuring content so AI-powered platforms — ChatGPT, Perplexity, Claude, Gemini, and Google AI Overviews — can extract, trust, and cite it as a direct response to user questions. It’s not a replacement for traditional SEO. It’s what you layer on top to compete for the channel that converts at rates traditional organic never reached.

Across multiple AEO and AI‑traffic studies, companies report significantly higher conversion rates from AI‑sourced visitors—often 4–5× and, in some documented cases, over 20× higher than standard organic—alongside headline case studies claiming up to 300% growth in qualified leads within the first 3–4 months.

For multi-location operators, the structural demands of answer engine optimization aren’t optional add-ons — they’re the difference between appearing in AI-generated answers and being invisible to a growing share of high-intent buyers. Content Ops Lab built its AEO methodology within a regulated healthcare organization over 21 months, producing 1,000+ citation-verified articles that drove AI search conversion rates of 20-32%, compared with a 5.85% site baseline.

Why Is Your Content Invisible to AI Search Platforms Despite Strong Google Rankings?

Strong Google rankings and AI search citations are not the same thing. Content built purely for keyword rankings rarely meets the structural requirements AI systems use to identify trustworthy, extractable answers.

The Gap Between SEO Rankings and AI Citations

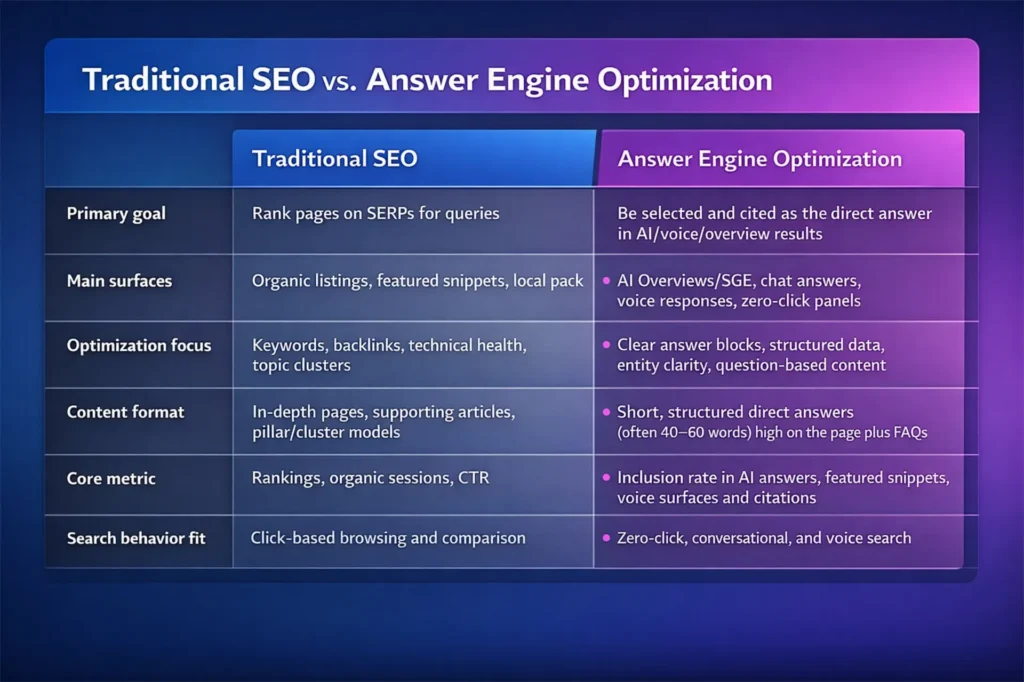

Traditional SEO optimizes pages to rank. Answer engine optimization targets a different question: which content gets extracted and cited by AI systems. Those are structurally different goals requiring different content architecture.

- Ranking optimization targets keyword clusters and page authority

- Citation optimization targets modular answer blocks and E-E-A-T signals

- Dense paragraph content ranks fine; AI systems skip it for cleaner extractions

- Featured snippet performance predicts AI citation likelihood better than rank position

The unit of competition has shifted from “page targeting a keyword” to “answer block targeting a specific question.” If your content isn’t structured around discrete, self-contained answers, AI platforms move to sources that are — regardless of where your page sits in Google.

How AI Engines Select Sources

AI platforms don’t browse — they retrieve content from an index using layered quality filters.

- Topical relevance to the precise question, not just the general subject

- Structural clarity: H2/H3 hierarchies that mirror question phrasing

- Authority signals: authorship credentials, outbound citations, verified claims

- Answer-first formatting: direct response in the first 40-60 words of each section

Perplexity evaluates roughly 3-4 candidate sources per query and selects based on relevance, clarity, domain authority, and technical accessibility. Claude applies those criteria dynamically per query, not as a fixed formula. A page can rank well in Google while scoring below the threshold on every factor AI systems use to select citation sources.

Why Multi-Location Content Fails AI Extraction

Volume-driven content strategies — the kind that produce location pages at scale from templates — create exactly the structural conditions AI systems filter out.

- Templated location pages with near-identical content confuse AI about which location to recommend

- Inconsistent NAP data across locations signals unreliability to the AI selection logic

- Generic service descriptions without question-mirrored H2s produce no extractable answer blocks

- Thin pages lacking schema markup become invisible to the AI Overview synthesis

For a 10-location operation running templated content, this isn’t just one missed citation — it’s 10 locations that don’t appear when potential patients or clients ask AI systems for recommendations near them.
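One way to spot templated location pages before an AI system does is to compare page bodies pairwise. The sketch below is a minimal illustration using Python's standard `difflib`; the function name, the sample pages, and the 0.8 cutoff are all hypothetical choices for the example, not thresholds any AI platform publishes.

```python
from difflib import SequenceMatcher
from itertools import combinations


def near_duplicate_pairs(pages, threshold=0.9):
    """Return (name_a, name_b, ratio) for page pairs whose text is nearly identical.

    `pages` maps a location name to its page body text. `threshold` is an
    illustrative cutoff chosen for this sketch.
    """
    flagged = []
    for (name_a, text_a), (name_b, text_b) in combinations(sorted(pages.items()), 2):
        # Compare word sequences so a swapped city name counts as one small change.
        words_a = text_a.lower().split()
        words_b = text_b.lower().split()
        ratio = SequenceMatcher(None, words_a, words_b).ratio()
        if ratio >= threshold:
            flagged.append((name_a, name_b, round(ratio, 2)))
    return flagged


# Hypothetical location pages: two share a template, one is written for its market.
pages = {
    "austin": "Our Austin clinic offers family dentistry, cleanings, and whitening.",
    "dallas": "Our Dallas clinic offers family dentistry, cleanings, and whitening.",
    "houston": "Houston patients ask about sedation options; Dr. Lee explains three.",
}
for pair in near_duplicate_pairs(pages, threshold=0.8):
    print(pair)
```

Pairs that come back flagged are candidates for location-specific rewriting; the score only measures textual overlap and says nothing about how a given platform will treat the pages.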

What Is Answer Engine Optimization and How Does It Differ from Traditional SEO?

Most multi-location operators are running a content strategy built for a search landscape that no longer exists. Google rankings don’t determine whether ChatGPT recommends your practice or Perplexity cites your content. This guide covers what AEO actually requires — and what multi-location operators specifically need to get right.

AEO vs. SEO at the Optimization Level

Traditional SEO optimizes pages for keyword clusters. Answer engine optimization optimizes discrete content blocks for specific questions.

- Traditional SEO success metric: rank position, organic clicks, CTR

- AEO success metric: citation rate in AI answers, AI share of voice, assisted conversions

- SEO content style: comprehensive guides, long-form topic hubs

- AEO content style: direct definitions, modular FAQs, question-specific answer blocks

- SEO update cadence: periodic large refreshes

- AEO update cadence: frequent, surgical updates to high-value answer blocks

The AEO, GEO, and LLM Optimization Landscape

Operators encounter overlapping terms in this space. They’re related but distinct.

- AEO (Answer Engine Optimization): Optimizing for cited answers within AI search surfaces — Google AI Overviews, ChatGPT with browsing, Perplexity, voice assistants

- GEO (Generative Engine Optimization): Structuring content so LLM-driven systems retrieve and present your information more prominently across any AI context

- LLM Optimization: Formatting and verification practices that make content trustworthy and extractable by large language models

Research published at KDD 2024 demonstrated that adding statistics, explicit citations, and quotations to content increases source visibility in generative responses by up to 40%. That’s a measurable citation lift, not a formatting preference.

What AI Platforms Are Actually Rewarding

The signals AI platforms use to select sources are consistent across platforms, even though weighting may differ.

- Answer-first formatting with 40-60-word direct responses at the top of each section

- Question-mirrored H2 and H3 headings that match how users actually phrase queries

- Statistical backing with credible, verifiable citations — not marketing claims

- Content that is 40-60% bulleted, which enables clean AI parsing

- Confident, definitive language without hedging qualifiers

What AI systems filter out: keyword-stuffed articles, dense paragraph blocks, promotional language without data backing, and unsourced claims.

What Does AEO-Optimized Content Actually Look Like in Production?

Answer engine optimization has specific structural requirements at the article, section, and sentence level — and they don’t emerge naturally from standard content production processes. You have to engineer them in.

Answer-First Structure and Question-Mirroring H2s

AI systems select sources that provide a direct, extractable response in the first 40-60 words of a section — not sources that build to the answer through background context.

- Open every H2 section with a 40-60-word direct answer to the section question

- Use H2 headings that mirror natural language question phrasing, not keyword clusters

- Structure each section as: Answer → Supporting Detail → Evidence → Source

- Reserve longer explanatory content for subsections below the opening answer block

The “Question → Answer → Details → Sources” pattern isn’t optional for AI citation — it’s the filter that determines whether your content qualifies for extraction.
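The 40-60-word opening and question-style heading can be checked mechanically before publication. The following is a rough sketch, assuming each section is available as a (heading, body) pair with paragraphs separated by blank lines; the function names and sample sections are hypothetical.

```python
def opening_word_count(body):
    """Count the words in the first paragraph of a section body."""
    first_paragraph = body.strip().split("\n\n", 1)[0]
    return len(first_paragraph.split())


def audit_sections(sections, low=40, high=60):
    """Return one report row per (heading, body) pair.

    Each row is (heading, is_question, word_count, in_range).
    """
    rows = []
    for heading, body in sections:
        count = opening_word_count(body)
        is_question = heading.rstrip().endswith("?")
        rows.append((heading, is_question, count, low <= count <= high))
    return rows


# Hypothetical sections; the filler text only stands in for real copy.
sections = [
    ("What does a dental implant cost?", " ".join(["word"] * 48) + "\n\nMore detail follows."),
    ("Our Services", " ".join(["word"] * 120)),
]
for row in audit_sections(sections):
    print(row)
```

A check like this only confirms length and heading shape. Whether the opening paragraph actually answers the question still needs editorial review.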

Modular Answer Blocks and Schema Requirements

Modular answer blocks are self-contained content chunks that cover a single claim with supporting evidence, structured so that AI can lift the block into a response without additional context. Sprawling 400-word paragraphs fail this test.

- Each answer block covers one claim, not a topic cluster

- Tight paragraphs: one idea, 2-4 sentences maximum

- FAQ, HowTo, Article, and LocalBusiness schema markup applied per page

- Clean, indexable URLs per main question — one question, one URL where possible

For multi-location operators, schema requirements extend to location pages: FAQPage and LocalBusiness markup per location, with a parent-child organization schema that helps AI understand the relationship between your brand and each branch.
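As an illustration of the parent-child relationship, the sketch below builds JSON-LD for a brand and one branch using schema.org's `Organization`, `LocalBusiness`, `PostalAddress`, and `parentOrganization` vocabulary. The helper names, the example.com URLs, and the sample address and phone number are placeholders invented for the example; the exact properties a given operation needs will vary.

```python
import json


def brand_jsonld(brand_id, name, url):
    """Describe the parent brand as a schema.org Organization."""
    return {
        "@context": "https://schema.org",
        "@type": "Organization",
        "@id": brand_id,
        "name": name,
        "url": url,
    }


def location_jsonld(brand_id, loc):
    """Describe one branch as a LocalBusiness that points back to the brand."""
    return {
        "@context": "https://schema.org",
        "@type": "LocalBusiness",
        "@id": loc["url"] + "#location",
        "name": loc["name"],
        "url": loc["url"],
        "telephone": loc["telephone"],
        "address": {
            "@type": "PostalAddress",
            "streetAddress": loc["street"],
            "addressLocality": loc["city"],
            "addressRegion": loc["region"],
            "postalCode": loc["postal_code"],
        },
        # The child references the parent by @id, so both nodes describe one entity graph.
        "parentOrganization": {"@id": brand_id},
    }


# Placeholder data for illustration only.
BRAND_ID = "https://www.example.com/#organization"
brand = brand_jsonld(BRAND_ID, "Example Dental Group", "https://www.example.com/")
austin = location_jsonld(BRAND_ID, {
    "name": "Example Dental Group - Austin",
    "url": "https://www.example.com/locations/austin/",
    "telephone": "+1-512-555-0100",
    "street": "100 Main St",
    "city": "Austin",
    "region": "TX",
    "postal_code": "78701",
})
print(json.dumps([brand, austin], indent=2))
```

The printed JSON would typically be embedded in a `<script type="application/ld+json">` tag on the matching page. Generating every location from the same function keeps the brand `@id` identical across the network.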

Citation Signals That Build AI Trust

AI systems evaluate a source’s trustworthiness before selecting a citation. Most content production workflows don’t systematically build these signals.

- Authorship credentials and expertise signals stated explicitly, not assumed

- Outbound citations to credible sources — not just inbound links

- Verified statistical claims with traceable sources

- Clear methods and definitions that demonstrate analytical rigor

- Last-modified dates and freshness indicators for evergreen pages

Claude evaluates source credibility by cross-referencing dates, author expertise, and content consistency before selection. Content that can’t demonstrate those signals on the page loses the citation competition to sources that can.

If your operation needs to produce 20-50+ articles per month optimized for AI search citations without sacrificing compliance or quality, Content Ops Lab builds the infrastructure to make that possible. Contact us online today to discuss your content production requirements.

How Are ChatGPT, Perplexity, Claude, and Gemini Selecting the Sources They Cite?

You’re not competing to be one of thousands of search results. You’re competing to be among a small handful of highly relevant, well-structured sources for each query. Between January and April 2025, ChatGPT’s share of total internet traffic doubled from 0.0793% to 0.1587%, with average referred sessions lasting nearly 10 minutes. Understanding each platform’s selection logic helps you determine where to focus your content architecture investment.

ChatGPT and Perplexity Citation Behavior

ChatGPT operates on two layers: baked-in training data and real-time web browsing, where citations appear. When browsing, it typically pulls from roughly the top 20 search results, applying filters for topical relevance, recency, and credibility.

- Source selection prioritizes precise, multi-keyword query matching

- Recency signals matter: “latest,” year strings, and freshness indicators improve selection odds

- Trust signals include established domains, clear methods, and transparent sourcing

- ChatGPT disproportionately cites encyclopedic and reference-style sources

Perplexity is explicitly built as an answer engine, always showing citations from real-time web search and retrieval-augmented generation. It selects roughly 3-4 sources per query based on direct relevance, content clarity, domain authority, and technical accessibility — favoring pages with direct-answer openings and question-mirrored H2/H3 structure.

Claude and Gemini Source Selection Patterns

Claude evaluates topical relevance, source credibility, and presentation quality together — dynamically per query rather than using fixed ranking signals. The same article may be cited for one query but not a closely related one if the context fit is weaker. Content that surfaces consistently has clear structures, front-loaded insights, and explicit authorship. Claude frequently cites titles and publication names rather than just URLs.

Gemini sits atop Google’s index. AI Overviews break queries into multiple sub-questions using “query fan-out,” run parallel searches, then synthesize answers with prominent source links. Content with strong schema, entity markup, and clear question-answer sections is more likely to appear in AI summaries.

Cross-Platform Patterns That Operators Can Influence

- Answer-first formatting with 40-60-word direct responses improves extraction across all platforms

- Question-mirrored H2/H3 structure signals relevance to the specific query being asked

- Verified statistical citations and transparent sourcing build the trust signals that all platforms filter for

- Schema markup — FAQ, Article, LocalBusiness — improves AI parsing on every platform

Platforms reward content that looks like a structured, well-researched answer with evidence. Not content that looks like SEO output optimized for a keyword cluster.
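For the FAQ schema mentioned above, a minimal sketch of generating schema.org `FAQPage` markup from question-and-answer pairs might look like this. The `faq_jsonld` helper is a hypothetical name, and the sample pair is taken from this article's own FAQ section.

```python
import json


def faq_jsonld(qa_pairs):
    """Build schema.org FAQPage JSON-LD from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in qa_pairs
        ],
    }


qa_pairs = [
    (
        "How long does it take for AEO-optimized content to appear in AI citations?",
        "Case studies show citations appearing within 30-90 days of publishing "
        "structured AEO content.",
    ),
]
markup = faq_jsonld(qa_pairs)
print(json.dumps(markup, indent=2))
```

The markup should mirror questions and answers that are visible on the page itself; it describes existing content rather than adding new claims.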

What Conversion Performance Does AI Search Traffic Actually Deliver?

The strategic case for AEO isn’t citation volume — it’s what happens after the citation. A 2025 SE Ranking study found ChatGPT generated 77.97% of all AI referral visits globally, Perplexity 15.10%, and Gemini 6.40%. AI-referred traffic converts at rates that traditional organic rarely approaches.

AI Search Conversion Rates vs. Traditional Organic

AI-sourced traffic converts at 3-5x the rate of standard organic. Users who ask an AI system for a recommendation and click through have already completed a research and comparison process — they’re decision-stage buyers, not early-funnel browsers.

- A B2B SaaS deployment recorded 27% of AI-sourced visitors becoming sales-qualified leads

- Sessions from AI referrals ran 30% longer than traditional organic

- That deployment attributed $1.2M in closed-won revenue to AI sources over 120 days at 54% lower cost per acquisition than traditional search

- A B2B marketing agency saw AI traffic grow 43%, contributing an 83% lift in conversions and $340k in new pipeline within 90 days

Production Data from Regulated Industry Deployments

Healthcare is one of the most demanding AEO environments — compliance requirements, citation verification standards, and multi-location complexity apply simultaneously. Production data from that environment provides the clearest test of whether AEO methodology holds under real operational constraints.

- AI search converting at 20-32% in a regulated healthcare environment vs. a 5.85% site baseline, roughly 3.4-5.5x the baseline rate

- AI search accounting for less than 0.3% of total traffic while delivering 4.5% of organic conversions

- 287% ChatGPT traffic growth in a 6-month production window

- 45% of total leads from organic search, outperforming paid nearly 2:1, across a 12,487-lead, 6-month period
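The multiples behind these figures can be recomputed directly from the stated rates. This is a small worked check, assuming the 20-32% range and the 5.85% baseline are measured the same way; the function name is made up for the example.

```python
def conversion_multiple(channel_rate_pct, baseline_rate_pct):
    """How many times the baseline a channel converts at."""
    return channel_rate_pct / baseline_rate_pct


BASELINE_PCT = 5.85  # site-wide conversion rate stated in the article

low = conversion_multiple(20.0, BASELINE_PCT)
high = conversion_multiple(32.0, BASELINE_PCT)
print(f"AI search converts at {low:.1f}x to {high:.1f}x the site baseline")

# 45% of the 12,487 leads in the 6-month period came from organic search.
organic_leads = round(0.45 * 12_487)
print(f"Organic search leads: about {organic_leads:,}")
```

Running the same arithmetic per location, rather than site-wide, shows which branches actually account for the lift.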

Why AI-Referred Users Convert at Higher Rates

- AI platforms filter options before referral, pre-qualifying leads for fit

- Users arrive having completed an extended research conversation about their problem

- Trust transfer from AI citations carries weight similar to a personal referral

- Extended session durations indicate users are confirming a decision, not beginning research

The buyer arriving from ChatGPT or Perplexity is structurally different from the buyer arriving from a Google organic click. AI citations shift the quality composition of your inbound pipeline.

Is Your Multi-Location Operation Structured to Win AI Citations — or Lose Them?

The structural demands of AEO don’t scale linearly with the number of locations — they compound. Weaknesses in traditional SEO that could be absorbed — inconsistent location data, templated thin pages, uneven reviews — now produce outright AI invisibility by location. For operators managing 5-20+ locations, AEO is not a single-page optimization problem. It’s a network architecture problem.

Multi-Location AEO Risks and Visibility Gaps

AI visibility gaps are location-specific and invisible to operators running standard rank-tracking dashboards.

- Poorly architected location pages compete against each other for the same queries, confusing AI about which branch to recommend

- A multi-location dental practice was excluded from AI answer boxes entirely because Google’s AI couldn’t parse parent-child location relationships, while better-structured competitors were cited

- Focusing only on average rankings hides location-specific AI invisibility

- AI Overviews appear above local packs; users acting on AI recommendations never scroll to organic results

Network-Level Advantages Systematic Operations Can Capture

Scale is a liability under poor content architecture and an asset under systematic AEO. Every additional location adds to a network of trust signals that AI systems interpret as evidence of credibility.

- A well-structured network — clean parent organization schema, consistent NAP, geodata, same-as links — outperforms fragmented single-location competitors

- A multi-location dental practice that fixed entity markup and connected parent-child schema saw 347% more local impressions and 89% more search-driven calls within 90 days, plus first-time inclusion in AI answer boxes

When operations are already strong, AEO becomes the amplifier that lets AI systems recognize and reward that consistency network-wide.
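Consistent NAP (name, address, phone) data is one of the easier network signals to audit automatically. The sketch below normalizes each listing and flags locations where sources disagree; the function names, source labels, and sample listings are hypothetical, and the phone handling assumes US-style numbers.

```python
import re


def _clean(text):
    """Lowercase, drop punctuation, and collapse whitespace."""
    return re.sub(r"\s+", " ", re.sub(r"[^\w\s]", "", text)).strip().lower()


def normalize_nap(name, address, phone):
    """Reduce a listing to a comparable (name, address, digits) tuple."""
    digits = re.sub(r"\D", "", phone)
    if len(digits) == 11 and digits.startswith("1"):
        digits = digits[1:]  # drop a leading US country code (assumption)
    return (_clean(name), _clean(address), digits)


def nap_mismatches(listings):
    """Return locations whose sources disagree after normalization.

    `listings` maps location -> {source_name: (name, address, phone)}.
    """
    mismatched = {}
    for location, sources in listings.items():
        variants = {src: normalize_nap(*nap) for src, nap in sources.items()}
        if len(set(variants.values())) > 1:
            mismatched[location] = variants
    return mismatched


# Placeholder listings: Austin differs only in formatting, Dallas in substance.
listings = {
    "austin": {
        "website": ("Example Dental", "100 Main St.", "(512) 555-0100"),
        "directory": ("Example Dental", "100 Main St", "+1 512-555-0100"),
    },
    "dallas": {
        "website": ("Example Dental", "200 Elm St", "214-555-0101"),
        "directory": ("Example Dental", "200 Elm Street", "214-555-0101"),
    },
}
print(sorted(nap_mismatches(listings)))
```

Formatting-only differences are ignored here, while an abbreviation mismatch such as "St" versus "Street" is deliberately flagged so a person can decide which form is canonical.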

How to Measure AI Visibility Across Your Footprint

Traditional rank tracking doesn’t capture AI citation performance. Operators missing this data are blind to the channel where conversion rates are highest and competition is still lowest.

- Track AI Overviews presence and AI referral traffic broken down by location — not just site-wide averages

- Monitor how often each priority location is cited in ChatGPT, Perplexity, and Gemini for core service queries

- Track AI-driven calls and bookings as a distinct category from classic organic clicks

- Compare AI citation frequency against competitors in your core markets — this is your real AI share of voice
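Breaking AI referral traffic down by location can start with something as simple as classifying session referrers. The following is a sketch under stated assumptions: the hostname list is an illustrative, non-exhaustive mapping that will need maintenance as platforms change domains, and the session record shape (`referrer`, `location`) is hypothetical rather than any analytics tool's export format.

```python
from collections import Counter
from urllib.parse import urlparse

# Illustrative referrer hostnames; treat this mapping as an assumption to maintain.
AI_REFERRER_HOSTS = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "gemini.google.com": "Gemini",
    "claude.ai": "Claude",
}


def classify_referrer(referrer_url):
    """Return the AI platform name for a referrer URL, or None if it is not one."""
    host = (urlparse(referrer_url).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    return AI_REFERRER_HOSTS.get(host)


def ai_sessions_by_location(sessions):
    """Count AI-referred sessions per (location, platform)."""
    counts = Counter()
    for session in sessions:
        platform = classify_referrer(session["referrer"])
        if platform is not None:
            counts[(session["location"], platform)] += 1
    return counts


# Hypothetical session records for illustration.
sessions = [
    {"referrer": "https://chatgpt.com/", "location": "austin"},
    {"referrer": "https://www.perplexity.ai/search?q=dentist", "location": "austin"},
    {"referrer": "https://www.google.com/", "location": "dallas"},
    {"referrer": "https://chatgpt.com/", "location": "dallas"},
    {"referrer": "", "location": "dallas"},
]
for key, count in sorted(ai_sessions_by_location(sessions).items()):
    print(key, count)
```

Referrer-based counts undercount AI influence, since users who see a citation and search for the brand later arrive without an AI referrer. The numbers are best read as a floor.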

While 66% of physicians now use AI tools and 69% of legal professionals use generative AI, structured optimization for AI citations remains rare (likely under 10% across both sectors), creating both a large measurement gap and a citation opportunity.

How Content Ops Lab Builds AEO Infrastructure for Multi-Location Businesses

The 21-month production test that validated Content Ops Lab’s methodology ran within a regulated healthcare organization with strict compliance requirements and a 5x increase in scale from 10 to 50+ articles per month. AI search conversion rates of 20-32% were achieved by building a content infrastructure that AI systems could trust and extract from.

- 21-month production test inside a regulated multi-location healthcare organization

- 1,000+ citation-verified articles delivered with zero compliance violations

- 45% of all leads from organic search — outperforming paid search nearly 2:1

- AI search converting at 20-32% vs. 5.85% site baseline

- 653% impression growth and 1,700% click growth for an emerging brand over 14 months

- 5x production scale without adding headcount

- 287% ChatGPT traffic growth in 6 months

- Methodology validated on both mature brand maintenance and emerging brand growth

The Content Ops Lab Production System

- Research: Content architecture built around verified question taxonomies and real prompt patterns — no AI writing from unverified sources

- Verification: Line-by-line citation cross-check, STAT vs. CLAIM labeling, E-E-A-T signals documented for AI trust evaluation

- Optimization: Answer-first formatting, modular answer blocks, schema markup, and question-mirrored H2/H3 structure applied simultaneously for Google, ChatGPT, Perplexity, Claude, and Gemini

- Delivery: Publish-ready content with FAQ and LocalBusiness schema, clean URL structures per question, and multi-platform optimization verified before publication

Ready to build content infrastructure that converts at AI search rates across your entire location footprint? Get in touch, and we’ll assess your current content operation and outline what systematic AEO implementation would look like for your organization.

FAQs About Answer Engine Optimization

What’s the difference between answer engine optimization and traditional SEO?

Traditional SEO optimizes pages to rank — targeting keyword clusters, building page authority, driving organic clicks. AEO optimizes specific content blocks on those pages for AI platforms to extract and cite. SEO success is measured in rankings and traffic; AEO success is measured in citation rate, AI share of voice, and conversion quality. AEO builds on top of SEO, not against it.

How long does it take for AEO-optimized content to appear in AI citations?

Case studies show citations appearing within 30-90 days of publishing structured AEO content, with conversion impact emerging faster once citations begin. Full implementation — from knowledge documentation through production scaling — takes 3-6 months. The first-mover window is measured in quarters, not years, and early citation patterns compound as AI systems reinforce established sources.

How does Content Ops Lab verify that content meets AEO citation standards?

Source research is verified before AI generation begins. Citations are cross-checked, line by line, against source documents using STAT vs. CLAIM labeling. The final output is reviewed against AEO structural requirements: answer-first formatting, question-mirrored H2s, schema compatibility, and the presence of E-E-A-T signals. This is the same protocol that produced 1,000+ articles with zero compliance violations in a regulated healthcare environment.

Is answer engine optimization relevant for healthcare and legal multi-location practices?

It’s especially relevant. Compliance requirements make unverified AI-generated citations a direct liability risk — and AI platforms weigh verified, credible sources more heavily in regulated topic areas. Less than 5% of healthcare practices and less than 10% of legal firms are currently optimizing for AI search. The first-mover window in these verticals is open now.

What content volume does a multi-location business need to compete for AI search citations?

A 5-location operation typically needs 20-30 articles per month to build meaningful AI citation presence. A 10-20 location operation producing 50+ per month can achieve citation dominance within 6-12 months of systematic implementation. The key constraint isn’t volume — it’s verified, structured volume. High-volume unverified output doesn’t produce citations.

Key Takeaways

- Answer engine optimization shifts the optimization target from ranking pages to engineering extractable answer blocks — a structurally different goal requiring different content architecture

- AI search traffic converts at 20-32% in production deployments versus a 5.85% site baseline, because AI platforms pre-qualify buyers before referral

- ChatGPT, Perplexity, Claude, and Gemini each use distinct selection logic, but all reward answer-first formatting, question-mirrored structure, verified citations, and schema markup

- Multi-location operators face compounding AEO risks: inconsistent NAP, templated pages, and poor location schema create AI visibility gaps that rank tracking doesn’t detect

- Less than 5% of healthcare practices and less than 10% of legal firms are currently optimizing for AI citations — the first-mover window is open

- Implementation takes 3-6 months; early citation patterns compound as AI systems reinforce established sources

Build Content Infrastructure That Compounds: Answer Engine Optimization

Answer engine optimization isn’t a tactic you apply to existing content — it’s an infrastructure decision about how content is built from the first sentence forward. AEO programs produce qualified leads at rates traditional SEO channels don’t approach, with AI-sourced visitors arriving further along the decision process and converting at multiples of standard organic rates. Less than 5% of your competitive set is optimizing for this channel.

AI citation patterns compound over time. Content Ops Lab builds the AEO infrastructure multi-location businesses need to compete for AI citations at scale without sacrificing compliance or quality.

Content Ops Lab runs AI-optimized content production for multi-location businesses — research, verification, and delivery handled end to end. Contact us today to discuss what systematic AEO looks like for your operation.