Content at Scale: Why Volume Without Verification Fails in AI Search

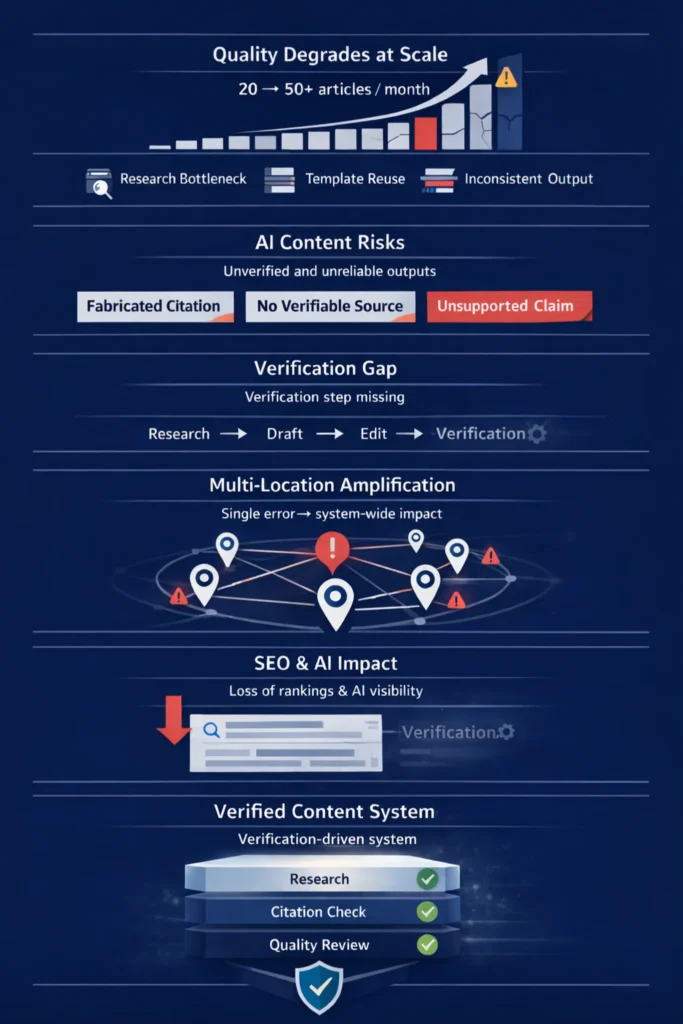

Scaling content without verification infrastructure doesn’t just create compliance risk. It actively destroys the SEO performance the volume was meant to drive. Google’s Helpful Content system evaluates quality at the domain level, meaning that a high proportion of unverified, templated pages can drag down your entire site’s rankings.

Research cited in Google’s E-E-A-T guidance ties ranking authority directly to journalistic professionalism, established editorial policies, and robust review processes. For multi-location brands publishing 20-50+ articles per month, those processes either exist systematically, or they don’t exist at all.

Content Ops Lab built its verification infrastructure inside a regulated healthcare operation — 1,000+ citation-verified articles delivered over 23 months with zero compliance violations.

Related: What Is Content Infrastructure for Multi-Location Brands?

Why Does Content Quality Break Down When Multi-Location Brands Scale Production?

Quality degradation at scale isn’t a writing problem. It’s a structural problem — and it shows up in predictable ways once volume outpaces your research, governance, and editorial infrastructure.

Research Compression at Volume

At low publishing volume, strategists can vet sources, validate claims, and ensure each article adds substantive value. At 30-50 articles per month, the research phase becomes the primary bottleneck.

- Time allocated per article collapses

- Secondary sources replace primary research as the default

- AI generation fills the gap — producing content from training data, not verified sources

- Original data, examples, and context disappear from the output

The result is content that paraphrases existing pages without adding new insight — precisely what Google’s systems are built to identify and suppress.

Template Drift and Doorway Patterns

Multi-location brands commonly scale by deploying location-page templates across their entire footprint. The efficiency logic is sound. The SEO outcome often isn’t. Google’s doorway-page guidance is clear: when templated pages are “appreciably similar” across locations, search engines struggle to choose which page to return, and sometimes none of them rank.

- Identical blog content with city-name swaps triggers doorway-page classification

- Internal keyword cannibalization dilutes authority across the network

- Crawl bloat prevents more important pages from being indexed efficiently

- Template governance gaps allow minimum quality thresholds to erode

The problem isn’t the template itself. It’s the absence of a system that enforces minimum thresholds for unique local insight, verified evidence, and location-specific depth per page.
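One way to enforce a minimum uniqueness threshold is to compare location pages against each other before they publish. The sketch below is illustrative only: it measures overlap with word shingles and Jaccard similarity, and the function names, sample pages, and 0.6 threshold are all assumptions made for this example. It does not reflect how Google classifies doorway pages.

```python
from itertools import combinations


def shingles(text: str, size: int = 3) -> set:
    """Return the set of overlapping word n-grams (shingles) in the text."""
    words = text.lower().split()
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}


def jaccard(a: set, b: set) -> float:
    """Shared shingles divided by all distinct shingles across both pages."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def flag_near_duplicates(pages: dict, threshold: float = 0.8) -> list:
    """Return (page, page, score) for pairs at or above the assumed threshold."""
    sets = {name: shingles(body) for name, body in pages.items()}
    flagged = []
    for (name_a, set_a), (name_b, set_b) in combinations(sets.items(), 2):
        score = jaccard(set_a, set_b)
        if score >= threshold:
            flagged.append((name_a, name_b, round(score, 2)))
    return flagged


# Hypothetical location pages: two city-name swaps and one page with local detail.
pages = {
    "austin": "our clinic in austin offers same day appointments for new "
              "patients with licensed providers on site every weekday",
    "dallas": "our clinic in dallas offers same day appointments for new "
              "patients with licensed providers on site every weekday",
    "houston": "the houston office focuses on pediatric care and lists its own "
               "parking directions staff bios and local insurance partners",
}

flagged = flag_near_duplicates(pages, threshold=0.6)
print(flagged)
```

The two city-swap pages are flagged as a pair, while the page with location-specific detail is not. The right threshold depends on page length and template design, so treat any fixed number as a starting point for human review rather than a rule.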

Editorial Control Erosion Across Locations

As publishing velocity increases, editorial teams move from line-by-line review to spot checks. Google’s Helpful Content system operates at the site level — unhelpful content on some pages impacts the ranking of an entire domain. Quality failures don’t stay local. They accumulate into a domain-level signal that suppresses both your best and your worst content.

When content ownership is split between corporate and local operators, each location may adapt or publish independently, introducing inconsistent claims, a fragmented brand voice, and uneven compliance standards across the network.

What Happens When AI Content Generation Runs Without Verification?

AI tools accelerate content at scale. They don’t verify it. The distinction matters more at volume than at any other point — because the failure modes of AI generation compound directly with publishing velocity.

Hallucination Rates and Factual Confidence

Hallucinations aren’t rare edge cases — research categorizes them as a systemic property of current LLMs: outputs that appear fluent and coherent but are factually incorrect or entirely fabricated. Models express these errors with high confidence, making them difficult to catch without explicit verification steps.

- GPT-3.5 and GPT-4 fabricated 55% and 18% of citations, respectively, in literature review studies

- Newer reasoning models show higher hallucination rates than prior generations on some benchmarks

- Front-line teams and junior marketers are unlikely to question confident AI-generated copy under a deadline

- In regulated industries, a single fabricated statistic can trigger compliance exposure across the entire network

Citation Fabrication in Scaled Programs

A 2025 study on AI-generated citations found that nearly 1 in 5 citations generated by GPT-4o were entirely fabricated and that nearly two-thirds of all citations were either fabricated or contained errors. In a scaled content program, even a modest per-article fabrication rate compounds across dozens of outputs — especially when those citations get accepted at face value and reused in future campaigns or ad copy.

The downstream risk isn’t a bad article. It’s a systematic pattern of unverifiable claims propagated across every location page that inherits the same template or research source.
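The compounding effect is easy to see with rough arithmetic. The sketch below assumes each citation fails independently at a fixed rate, which is a simplification. The 40 articles per month matches the volume discussed in this article, while the 5 citations per article and the 2% rate are hypothetical inputs chosen for illustration, not measured figures.

```python
def expected_fabricated(articles: int, citations_per_article: int, rate: float) -> float:
    """Expected number of fabricated citations across the whole program."""
    return articles * citations_per_article * rate


def prob_at_least_one(articles: int, citations_per_article: int, rate: float) -> float:
    """Chance that at least one fabricated citation ships, assuming independence."""
    total = articles * citations_per_article
    return 1 - (1 - rate) ** total


# Hypothetical inputs: 40 articles a month, 5 citations each, 2% fabrication rate.
monthly_expected = expected_fabricated(40, 5, 0.02)
monthly_risk = prob_at_least_one(40, 5, 0.02)
print(f"expected fabricated citations per month: {monthly_expected:.1f}")
print(f"chance at least one is published: {monthly_risk:.1%}")
```

Even at a per-citation rate far below the figures reported in the study above, a program of this size should expect several fabricated citations every month unless a verification step removes them.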

Model Drift Across Multiple Outputs

Even with standardized prompts and brand guidelines, LLM outputs vary in structure, emphasis, and factual choices across sessions. Two service pages for the same treatment, generated a month apart, may cite different statistics or make conflicting claims about outcomes. At multi-location scale, this variability becomes content drift: inconsistent promises, risk disclosures, and clinical framing across the network.

Why Do Most Content Operations Miss the Verification Step in Content at Scale?

Verification isn’t missing because teams don’t care about accuracy. It’s missing because most content workflows were never designed to include it, and volume pressure reliably pushes it out when it does exist.

Editing vs. Verification — A Structural Distinction

Traditional editorial workflows focus on clarity, grammar, and brand voice. Verification is a different function: checking claims, numbers, timelines, and causal relationships against authoritative sources, then documenting decisions for auditability. That’s not what editors do when reviewing for readability. Without explicit infrastructure for it, it doesn’t happen.

- Auto-extracting factual claims is a separate step from editing

- Cross-verifying claims against independent sources requires time that most workflows don’t allocate

- Documenting citation provenance for compliance requires systems that most marketing teams don’t have

Why Verification Gets Skipped Under Volume Pressure

Thorough citation verification can take longer than initial drafting — especially in regulated domains. Teams publishing weekly per location treat verification as optional. The volume goal wins.

There’s also an automation bias problem. Because LLMs produce on-brand copy quickly, teams treat AI drafts as a baseline and assume human reviewers will catch anything important. Fabricated citations slip through even when reviewers are aware of the risk of hallucination, particularly on specialized topics.

What Structured QA Infrastructure Actually Requires

Best-practice frameworks describe “fact ledgers” — structured records listing claims, sources, and last-reviewed dates — alongside role matrices specifying who drafts, verifies, and approves each piece. Without this, organizations can’t audit what the model invented versus what was grounded in evidence.
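A fact ledger can start as a very small data structure. The sketch below is one possible shape, not a standard schema: the field names, the 180-day review window, and the sample entries are assumptions made for illustration. It records the claim, its source, the last-reviewed date, and who drafted, verified, and approved it.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional


@dataclass
class LedgerEntry:
    claim: str                          # the factual statement as published
    source: str                         # where the claim was verified
    last_reviewed: date                 # when someone last checked the source
    drafted_by: str                     # role matrix: who wrote it
    verified_by: Optional[str] = None   # role matrix: who checked it
    approved_by: Optional[str] = None   # role matrix: who signed off


def needs_attention(entries: list, today: date, max_age_days: int = 180) -> list:
    """Return entries that were never verified or whose review is out of date."""
    cutoff = today - timedelta(days=max_age_days)
    return [
        entry for entry in entries
        if entry.verified_by is None or entry.last_reviewed < cutoff
    ]


# Placeholder entries; the claims, sources, and roles are hypothetical.
today = date(2026, 1, 15)
ledger = [
    LedgerEntry("Example claim A", "example-source-a.pdf", date(2025, 12, 1),
                drafted_by="writer", verified_by="researcher", approved_by="editor"),
    LedgerEntry("Example claim B", "example-source-b.pdf", date(2025, 3, 1),
                drafted_by="writer", verified_by="researcher", approved_by="editor"),
    LedgerEntry("Example claim C", "example-source-c.pdf", date(2025, 12, 20),
                drafted_by="writer"),
]

for entry in needs_attention(ledger, today):
    print(entry.claim)
```

Here claim B is flagged because its review is stale and claim C because nobody verified it. The same records can later answer the audit question of which claims were grounded in evidence and who confirmed them.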

For multi-location brands, the absence of shared verification infrastructure means each location or agency partner improvises its own standards. From Google’s perspective, the brand’s factual reliability appears uneven.

If your operation needs to produce 20-50+ articles per month without sacrificing compliance or quality, Content Ops Lab builds the infrastructure to make that possible. Contact us today to discuss your content production requirements.

How Does Unverified Content at Scale Damage SEO and AI Search Performance?

Volume without verification doesn’t create isolated bad pages. It creates a pattern that both traditional and AI-driven search systems are specifically designed to detect and penalize.

Sitewide Quality Signals and E-E-A-T Exposure

Google’s Helpful Content system evaluates quality at the domain level. A high proportion of thin or hallucinated pages degrades the domain’s overall ranking ability — not just the underperforming content. For YMYL industries — healthcare, legal, financial — the exposure is steeper.

Google’s Quality Rater Guidelines state that untrustworthy pages receive the lowest possible quality rating, no matter how Experienced, Expert, or Authoritative they may seem. A hallucinated claim about treatment efficacy or legal rights, reproduced across dozens of city pages, produces both regulatory scrutiny and a systemic ranking downgrade.

AI Overview, Source Filtering, and Citation Eligibility

Traditional rankings and AI citation eligibility are now separate competitions. Analysis of Google’s AI Overviews indicates AI source selection applies reliability filters beyond standard ranking signals. Google’s AI systems use verification checkpoints that compare claims against sources such as the Knowledge Graph, academic repositories, and government data before deciding whether to cite a page.

- Content below the E-E-A-T threshold is filtered out before AI answer construction begins

- Pages with unverified or conflicting claims are less likely to survive these filters

- Even pages that rank on the traditional SERP may be skipped for AI citations

A page that ranks #3 organically but fails AI verification gets zero AI citation traffic. Winning both requires separate infrastructure for each.

Content Debt and Long-Term Domain Trust

Low-quality content creates content debt — a backlog of pages that must be rewritten, merged, or removed to restore domain trust. Once a site is classified as unhelpful, rankings can remain suppressed for months even after cleanup.

For multi-location brands publishing 40 articles per month without verification, hundreds of weak pages can accumulate in a single year. Remediating that while maintaining publishing velocity is an operational problem most teams aren’t equipped to solve.

Related: How AI Search Engines Decide Which Sources to Cite

What Does a Verification-First Content at Scale System Actually Require?

Verification at scale isn’t an added review step at the end of your current workflow. It requires redesigning production from the research phase forward.

Research-First Foundation Before AI Generation

Every article should begin with verified research from credible sources, not with text an AI generates from training data. A dedicated research phase using citation-tracked tools, with every source logged before drafting begins, eliminates hallucination risk at the source rather than trying to catch fabrications in editing.

- Perplexity Pro and similar tools return citations alongside content

- Client knowledge bases document proprietary expertise before AI generates around it

- Source libraries organized by topic prevent repeated verification work on recurring claims

Citation Cross-Check and Audit Trail Infrastructure

Verification isn’t complete until every claim is traced back to its source document — with line numbers, not just URLs. The standard for regulated industries requires word-for-word confirmation that the source says what the article claims it says.

- STAT vs. CLAIM labeling distinguishes data-backed assertions from sourced qualitative claims

- Line-number documentation creates an audit trail for every data point

- Exact quote extraction prevents the interpretation errors that paraphrasing introduces
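The three practices above can be combined into a simple automated check. The sketch below is a minimal illustration under stated assumptions: the `Citation` structure and `verify` function are hypothetical names, the source is treated as plain text, and the STAT or CLAIM label is only validated and recorded, since the specific standards applied to each label are not spelled out here.

```python
from dataclasses import dataclass


@dataclass
class Citation:
    label: str        # "STAT" or "CLAIM"
    quote: str        # exact words copied from the source
    line_number: int  # 1-indexed line in the source document


def normalize(text: str) -> str:
    """Collapse whitespace so wrapping differences do not cause false failures."""
    return " ".join(text.split())


def verify(citation: Citation, source_text: str) -> bool:
    """True only if the quote appears word for word on the cited line."""
    if citation.label not in {"STAT", "CLAIM"}:
        raise ValueError(f"unknown label: {citation.label}")
    quote = normalize(citation.quote)
    if not quote:
        return False  # an empty quote proves nothing
    lines = source_text.splitlines()
    if not 1 <= citation.line_number <= len(lines):
        return False  # the cited line does not exist
    return quote in normalize(lines[citation.line_number - 1])


# Placeholder source document; the content is hypothetical.
source_text = (
    "Line one of a placeholder source.\n"
    "The placeholder rate was 12 percent in the sample.\n"
    "Line three of a placeholder source."
)

good = Citation("STAT", "rate was 12 percent", line_number=2)
wrong_line = Citation("STAT", "rate was 12 percent", line_number=3)
print(verify(good, source_text), verify(wrong_line, source_text))
```

A check like this confirms only that the quoted words exist where the audit trail says they do. Whether the quote actually supports the article's claim still needs a human reviewer.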

Multi-Location Governance and Consistency Standards

Verification infrastructure has to operate at the network level. Without a unified framework, inconsistent processes, policy deviations, and fragmented risk controls emerge — introducing unforeseen risks even in processes that appear secure at the individual location level.

- A single unified production system ensures every location operates from the same standard

- Version control prevents content drift across updates and revisions

- NAP consistency verification cross-checks Name, Address, Phone across every location
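NAP consistency is one of the easier governance checks to automate. The sketch below compares each listing against a canonical record after light normalization. The record layout, source names, and sample values are assumptions made for illustration, and the normalization is deliberately simple: it does not expand abbreviations such as "St" to "Street", so those differences are surfaced for a person to resolve.

```python
import re
from typing import NamedTuple


class NAP(NamedTuple):
    name: str
    address: str
    phone: str


def squash(text: str) -> str:
    """Lowercase the text and collapse runs of whitespace."""
    return " ".join(text.lower().split())


def normalize(record: NAP) -> NAP:
    """Normalize text fields and keep only the digits of the phone number."""
    return NAP(squash(record.name), squash(record.address),
               re.sub(r"\D", "", record.phone))


def mismatches(canonical: NAP, listings: dict) -> dict:
    """Map each listing source to the fields that differ from the canonical record."""
    base = normalize(canonical)
    report = {}
    for source, record in listings.items():
        current = normalize(record)
        diffs = [f for f in NAP._fields if getattr(current, f) != getattr(base, f)]
        if diffs:
            report[source] = diffs
    return report


# Hypothetical canonical record and listings for one location.
canonical = NAP("Example Clinic", "100 Main St, Springfield", "(555) 010-0100")
listings = {
    "website": NAP("Example Clinic", "100  Main St, Springfield", "555-010-0100"),
    "directory": NAP("Example Clinic", "100 Main Street, Springfield", "555.010.0100"),
}

report = mismatches(canonical, listings)
print(report)
```

In this example the website listing passes despite different spacing and phone formatting, while the directory listing is flagged for its address. Running the same check across every location turns NAP consistency from a periodic cleanup into a routine gate.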

Is Your Content at Scale Operation Built to Avoid Compliance and SEO Liability?

The question isn’t whether to scale content. It’s whether your current infrastructure can absorb the volume without producing the failure modes described above.

Indicators Your System Lacks Verification Infrastructure

Most multi-location brands have content programs. Few have content systems. The gaps show up in specific, identifiable ways:

- No dedicated research phase — drafts start from prompts, not verified sources

- No citation log or audit trail — no way to trace where any claim came from

- No site-level quality monitoring — you don’t know how many thin pages you’ve accumulated

- No AI search tracking — you’re measuring organic clicks but not AI referral conversions

The Cost of Retrofitting Verification After Scale

Building verification infrastructure after a content program is already at volume is significantly harder than building it before. You face a dual burden: maintaining publishing velocity while auditing and remediating existing content.

- Content debt remediation at 40+ articles/month of legacy output is a months-long project

- Hallucinated citations embedded in published content propagate into PPC copy, local listings, and franchise microsites

- Domain trust recovery after Helpful Content suppression takes months, even with correct remediation

Done-For-You vs. System Build for Multi-Location Verification

Content Ops Lab offers two implementation paths. Done-For-You delivers fully managed production — research, generation, citation verification, optimization, and staged delivery — without requiring your team to operate the system.

System Build delivers the complete production infrastructure trained into your team over 12 weeks, with 90 days of post-launch support. Both paths produce the same outcome: systematic content production with verification built in from the research phase forward.

How Content Ops Lab Builds Verification-First Content Infrastructure

A multi-location healthcare client scaling from 10 to 50+ articles per month needed more than production capacity — it needed a system that could maintain citation accuracy and compliance standards across a dual-brand, multi-state operation. Over 23 months, the Content Ops Lab methodology delivered 1,000+ citation-verified articles and pages with zero compliance violations.

- 23-month production test inside a regulated healthcare organization

- 1,000+ citation-verified articles and pages with zero compliance violations

- 45% of all leads from organic search — outperforming paid search nearly 2:1

- 21.4% average AI search CVR vs. 3.32% site average — 6.4x performance multiplier

- 887% ChatGPT traffic growth in 7 months (July 2025 – February 2026)

- 653% impression growth, 1,700% click growth for an emerging brand built from near-zero organic presence

- 5x production scale — 10 articles/month to 50+ without adding headcount

- 188 question-based keywords ranking, 83% in positions 1-10

The Content Ops Lab Production System

- Research: Verified sources before generation — Perplexity Pro workflow, no writing from training data memory

- Verification: Line-by-line citation cross-check with STAT vs. CLAIM labeling and full audit trail

- Optimization: Multi-platform formatting for Google, ChatGPT, Perplexity, Claude, and Gemini simultaneously

- Delivery: WordPress staging or Google Docs — publish-ready, compliance-reviewed, Grammarly-verified

Ready to build a content infrastructure that scales without the compliance risk? Get in touch — we’ll assess your current content operation and outline what a systematic approach would look like for your organization.

FAQs About Content at Scale for Multi-Location Brands

How does unverified AI content at scale affect search rankings across multiple locations?

Google’s Helpful Content system operates at the domain level — unverified, thin pages suppress rankings across your entire site, not just the weak content. For multi-location brands, a flawed claim in a master template propagates to every location page that inherits it. Rankings can remain suppressed for months even after cleanup.

What’s the difference between editing content and verifying it?

Editing addresses clarity, grammar, and brand voice. Verification traces every factual claim back to its source document, confirms the source says what the article claims, and documents that audit trail for compliance. Without explicit verification infrastructure, editors working under a deadline rarely reconstruct the provenance of each claim — even when they’re aware of the risk of AI hallucinations.

How does Content Ops Lab prevent citation hallucinations in regulated industries?

Production starts with verified research before AI generates anything. Every citation is extracted as an exact quote with line-number documentation. STAT vs. CLAIM labeling applies different verification standards to different evidence types. No paraphrasing — direct quotes prevent the interpretation errors that paraphrasing introduces. The result is an audit trail for every data point before publication.

What volume of content at scale does a multi-location brand actually need?

Most multi-location practices need 20-50+ articles per month to build meaningful organic share across their footprint. The more important variable is verification: 10 citation-verified, systematically optimized articles outperform 50 unverified AI-generated articles that accumulate content debt and suppress domain authority over time.

How long does it take to see SEO results from a verification-first content approach?

Meaningful organic growth typically appears in months 3-6. AI search citations can appear earlier — often within weeks of indexing — because AI systems prioritize verified claims and citation-ready formatting over domain authority alone. The compounding effect builds over 12-18 months, which is why the implementation window matters.

Key Takeaways

- Volume without verification produces content debt — thin, unverified pages that suppress domain authority across your entire site, not just the weak content

- Google’s Helpful Content system is domain-level: once a site accumulates a high proportion of low-quality pages, rankings can remain suppressed for months even after cleanup

- AI citation eligibility and traditional search ranking are now separate competitions — pages that rank organically can still be excluded from AI Overviews if they fail verification checkpoints

- A 2025 study found nearly two-thirds of AI-generated citations were fabricated or contained errors — at a multi-location scale, even modest hallucination rates create systemic compliance and SEO exposure

- The Content Ops Lab methodology delivered 21.4% average AI search CVR (6.4x site average) and 45% organic lead share across a 23-month production test in a regulated industry — with zero compliance violations

- Verification infrastructure is significantly cheaper to build before scaling than to retrofit after a quality-driven ranking decline

Why Content at Scale Requires Systems, Not Just Speed

The search landscape has changed the terms of content competition. Volume still matters — but it has to be verified volume. Google’s Helpful Content system, AI Overview source filtering, and E-E-A-T evaluation all apply quality gates that unverified content can’t pass. Nearly two-thirds of AI-generated citations are fabricated or contain errors — a failure rate that, at 40 articles per month across a multi-location network, creates compounding liability rather than compounding authority.

The brands that build first-mover advantage in AI search over the next 12-18 months will be the ones that treated verification as infrastructure, not an afterthought. Content Ops Lab builds content production systems where verification is built into every stage — not added at the end. The proof is 23 months of live production in a regulated industry: 1,000+ articles, zero compliance violations, and AI search converting at 6.4x the site baseline.

Related: Structured Content for AI Search – How It Gets You Cited by AI